Spatio-Temporal

Misc

- Packages

- CRAN Task View

- {cubble} (Vignette) - Organizing and wrangling space-time data. Addresses data collected at unique fixed locations with irregularity in the temporal dimension

- Handles sparse grids by nesting the time series features in a tibble or tsibble.

- {glmSTARMA} - (Double) Generalized Linear Models for Spatio-Temporal Data

- {magclass} - Data Class and Tools for Handling Spatial-Temporal Data

- Has datatable under the hood, so it should be pretty fast for larger data

- Conversions, basic calculations and basic data manipulation

- {mlr3spatiotempcv} - Extends the mlr3 machine learning framework with spatio-temporal resampling methods to account for the presence of spatiotemporal autocorrelation (STAC) in predictor variables

- Use

lgr::get_logger("mlr3")$set_threshold("warn")to reduce verbosity of running models (source)

- Use

- {rasterVis} - Provides three methods to visualize spatiotemporal rasters:

hovmollerproduces Hovmöller diagramshorizonplotcreates horizon graphs, with many time series displayed in parallelxyplotdisplays conventional time series plots extracted from a multilayer raster.

- {SemiparMF} - Fits a semiparametric spatiotemporal (regression?) model for data with mixed frequencies, specifically where the response variable is observed at a lower frequency than some covariates.

- Combines a non-parametric smoothing spline for high-frequency data, parametric estimation for low-frequency and spatial neighborhood effects, and an autoregressive error structure

- {sdmTMB} - Implements spatial and spatiotemporal GLMMs (Generalized Linear Mixed Effect Models)

- {sftime} - A complement to {stars}; provides a generic data format which can also handle irregular (grid) spatiotemporal data

- {spStack} - Bayesian inference for point-referenced spatial data by assimilating posterior inference over a collection of candidate models using stacking of predictive densities.

- Currently, it supports point-referenced Gaussian, Poisson, binomial and binary outcomes.

- Highly parallelizable and hence, much faster than traditional Markov chain Monte Carlo algorithms while delivering competitive predictive performance

- Fits Bayesian spatially-temporally varying coefficients (STVC) generalized linear models without MCMC

- {stdbscan} - Spatio-Temporal DBSCAN Clustering

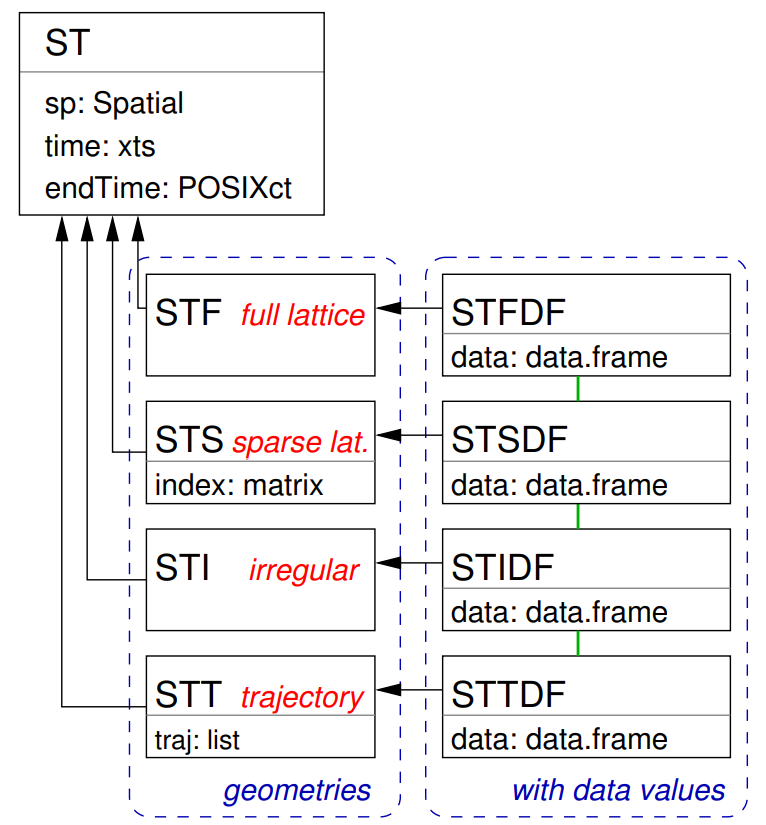

- {spacetime} - Superceded by {stars}. Extends the shared classes defined in {sp} for spatio-temporal data.

- {stars} uses PROJ and GDAL through {sf}.

- {spTimer} (Vignette) - Spatio-Temporal Bayesian Modelling

- Models: Bayesian Gaussian Process (GP) Models, Bayesian Auto-Regressive (AR) Models, and Bayesian Gaussian Predictive Processes (GPP) based AR Models for spatio-temporal big-n problems

- Depends on {spacetime} and {sp}

- {stars} - Reading, manipulating, writing and plotting spatiotemporal arrays (raster and vector data cubes) in ‘R’, using ‘GDAL’ bindings provided by ‘sf’, and ‘NetCDF’ bindings by ‘ncmeta’ and ‘RNetCDF’

- Only handles full lattice/grid data

- It supercedes {spacetime}, which extended the shared classes defined in {sp} for spatio-temporal data. {stars} uses PROJ and GDAL through {sf}.

- Easily convert spacetime objects to stars object with

st_as_stars(spacetime_obj)

- Easily convert spacetime objects to stars object with

st_as_starshas methods for these classes (and others): (docs)- data.frame

- list

- This is the one used for matrices and arrays.

- Data Formats >> Time-wide >> Example 2 (matrix)

- Data Formats >> Long >> Example 4 (array)

- Kriging >> Examples >> Example 3 >> Catboost >> Process Predictions

- sf

- This seems to only allow you to convert the variables of a sf dataframe to attributes with the only dimension being the geometry column.

After the stars object is created, I haven’t found a way to add dimensions to it either. It’s probably because the attributes are 1-D (i.e. not matrices or arrays) - Maybe something involving stars’

mergecan be done to fix this.

- This seems to only allow you to convert the variables of a sf dataframe to attributes with the only dimension being the geometry column.

- bbox

- xts

- cubble_df

- OpenStreetMap

- Raster

- SpatRaster

- Has dplyr verb methods

- {WaveST} - An integrated wavelet-based spatial time series modeling framework designed to enhance predictive accuracy under noisy and nonstationary conditions by jointly exploiting multi-resolution (wavelet) information and spatial dependence

- Resources

- Spatial Data Science With Applications in R

- Geocomputation with R, Ch.12

- Only spatial applications but the workflows for ML prediction with {mlr3} will be similar in the spatio-temporal case

- Geodata and Spatial Regression, Ch. 14

- Spatial Modelling for Data Scientists, Ch. 10

- Spatio-Temporal Statistics in R (See R >> Documents >> Geospatial)

- Spatial and Temporal Statistics

- It’s kind of a brief overview of spatial and temporal modeling separately. The spatio-temporal section at the end is also brief and pretty basic.

- There are things such as HMMs and GPs that I’m not yet familar with which could be interesting.

- Papers

- INLA-RF: A Hybrid Modeling Strategy for Spatio-Temporal Environmental Data

- Integrates a statistical spatio-temporal model with RF in an iterative two-stage framework.

- The first algorithm (INLA-RF1) incorporates RF predictions as an offset in the INLA-SPDE model, while the second (INLA-RF2) uses RF to directly correct selected latent field nodes. Both hybrid strategies enable uncertainty propagation between modeling stages, an aspect often overlooked in existing hybrid approaches.

- A Kullback-Leibler divergence-based stopping criterion.

- Effective Bayesian Modeling of Large Spatiotemporal Count Data Using Autoregressive Gamma Processes

- Introduces spatempBayesCounts package but evidently it hasn’t been publicly released yet

- Amortized Bayesian Inference for Spatio-Temporal Extremes: A Copula Factor Model with Autoregression

- INLA-RF: A Hybrid Modeling Strategy for Spatio-Temporal Environmental Data

- Notes from

- spacetime: Spatio-Temporal Data in R - It’s been superseded by {stars}, but there didn’t seem to be much about working with vector data in {stars} vignettes. So, it seemed like a better place to start for a beginner, and I think the concepts might transfer since the packages were created by the same people. Some packages still use sp and spacetime, so it could be useful in using with those packages

- SDS Chapters 6, 7, 13

- Spatio-Temporal Interpolation using gstat

- Introduction to Spatio-Temporal Variography ({gstat} vignette)

- Spatio-Temporal Kriging in R

- Also shows how to create a prediction grid of roads

- Use Cases

- Interpolating air quality measurements to values on a regular grid

- Estimating density maps from points or lines, for instance estimating the number of flights passing by per week within a range of 1 km

- Aggregating climate model predictions to summary indicators for administrative regions

- Combining Earth observation data from different sensors, such as MODIS (250~m pixels, every 16 days) with Sentinel-2 (10~m pixels, every 5 days)

Terms

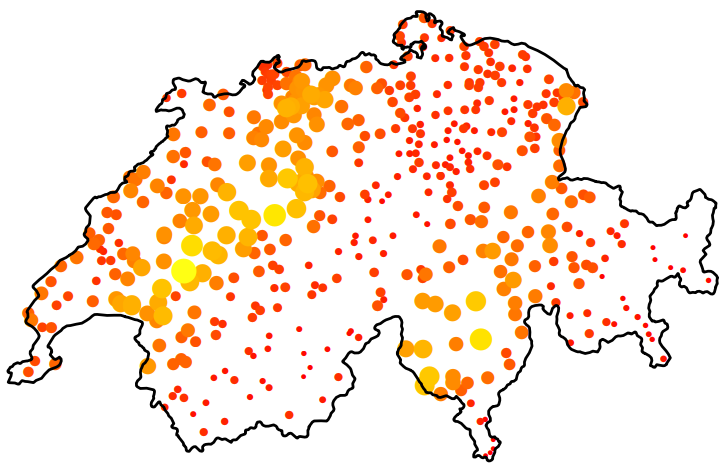

- Anisotropy

Spatial Anisotropy means that spatial correlation depends not only on distance between locations but also direction.

- The size and color of circle represent rainfall values

- The figure (source) demonstrates spatial anisotropy with alternating stripes of large and small circles from left to right, southwest to northeast.

- Standard Kriging assumes isotropy (uniform in all directions) and uses an omnidirectional variogram that averages spatial correlation across all directions. Violation of isotropy can bias predictions; overestimate uncertainty in “stronger” directions (and vice versa); and the predictions will fail to capture patterns.

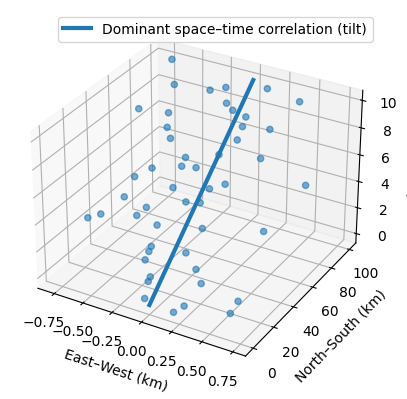

Spatio-Temporal Anisotropy refers to directional dependence in correlation that varies across both space and time dimensions

- Example: If you are mapping a pollution plume moving North at 10 km/h, the correlation structure is not just “longer in the North-South direction.”

- It is tilted in the space-time cube where time is the vertical in the 3d representation.

- A vertical correlation (no anisotropy) would start at the bottom of the line and be parallel to the z-axis (time)

- If you are measuring something that doesn’t move—like soil quality at a specific GPS coordinate—the time correlation is vertical

- With a pollution plume, the time correlation will “tilt” because pollution values will be more similar in different locations as time increases.

- It is tilted in the space-time cube where time is the vertical in the 3d representation.

- Example: If you are mapping a pollution plume moving North at 10 km/h, the correlation structure is not just “longer in the North-South direction.”

- Non-Spatial Microscale Effects - Sources of variability that do not necessarily decay with spatial distance over your sampling resolution, but appear as excess semivariance at short lags (i.e. possible explanations for a nugget effect)

- Measurement Error

- Instrument noise

- Calibration differences across monitors

- Local siting effects (height, enclosure, nearby obstructions

- Sub-Grid Physical Variability

- Street-level pollution vs background air

- Near-road vs off-road effects

- Local point sources (traffic lights, factories, heating units)

- Omitted Covariates

- Elevation

- Land use

- Urban/rural classification

- Meteorology (boundary layer height, wind exposure)

- Regime Mixing in Time

- Seasonal transitions

- Weather regimes

- Policy or emissions changes

- Measurement Error

- UNIX Time - The number of seconds between a particular time and the UNIX epoch, which is January the 1st 1970 GMT.

Should have 10 digits. If it has 13, then milliseconds are also included.

Convert to POSIXlt

data$TIME <- as.POSIXlt(as.numeric(substr( paste(data$generation_time), start = 1, stope = 10)), origin = "1970-01-01")substris subsetting the 1st 10 digitspasteis converting the numeric to a string. I’d tryas.charater.

Grid Layouts

Types

- {spacetime} Classes

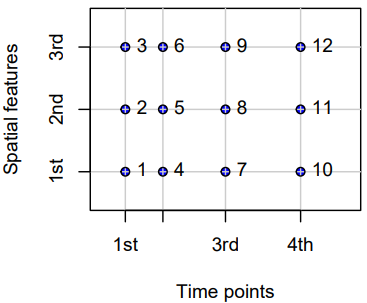

- Full-Grids

- Each coordinate (spatial feature vs. time) for each time point has a value (which can be NA) — i.e. a fixed set of spatial entities and a fixed set of time points.

STFDF(sp, time, data)- sp: A SpatialPoints or maybe a SpatialPointsDataFrame class object

- Requires only unique pairs (lat, lon) of coordinates that are present in the data, e.g. if there are 53 locations, then there should be 53 pairs (lat, long) of coordinates.

- This object’s coordinates has to be ordered the same way as the data (first by time, then by space).

- time: An ordered vector of dates (can be Date or POSIXct)

- Requires only a unique set of dates

- data: A dataframe with the variable(s) of interest.

nrows(data) == nrows(coords) * length(time)where coords is the coordinates matrix in the SpatialPoints object. If this logical isn’t true, you’ll get an error.- It will be true if all the bold instructions above are followed.

- sp: A SpatialPoints or maybe a SpatialPointsDataFrame class object

- Examples

- Regular (e.g., hourly) measurements of air quality at a spatially irregular set of points (measurement stations)

- Yearly disease counts for a set of administrative regions

- A sequence of rainfall images (e.g., monthly sums), interpolated to a spatially regular grid. In this example, the spatial feature (images) are grids themselves.

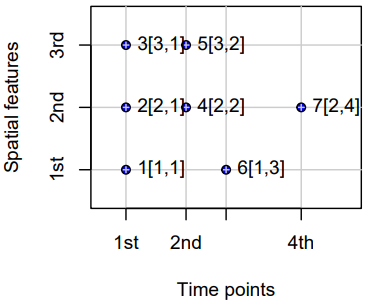

- Sparse Grids

- Only coordinates (spatial feature vs. time) that have a value are included (no NA values)

- Use Cases

- Space-time lattices where there are many missing or trivial values

- e.g. grids where points represent observed fires, and points where no fires are recorded are discarded.

- Each spatial feature has a different set of time points

- e.g. where spatial feaatures are regions and a point indicates when and where a crime takes place. Some regions may have frequent crimes while others hardly any.

- When spatial features vary with time

- Scenarios

- Some locations only exist during certain periods.

- Measurement stations move or appear/disappear.

- Remote sensing scenes shift or have different extents.

- Administrative boundaries change (e.g., counties/tracts splitting or merging).

- Example: Suppose you have monthly satellite images of vegetation over a region for three months

- January: the satellite covers tiles A, B, and C

- February: cloud cover obscures tile B; only A and C are available

- March: a different orbital path covers B and D (a new area)

- Example: Crime locations over time for a city

- In 2018, you have GPS points for all recorded crimes that year (randomly scattered).

- In 2019, you have a completely different set of GPS points (different crimes, different places).

- The number of points per year also varies.

- Scenarios

- Space-time lattices where there are many missing or trivial values

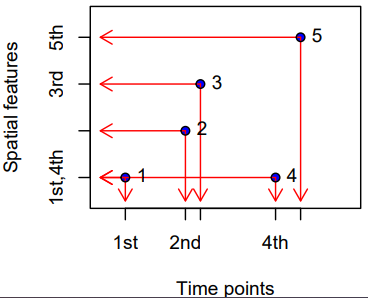

- Irregular Grids

- Time and space points of measured values have no apparent organization: for each measured value the spatial feature and time point is stored, as in the long format.

- No underlying lattice structure or indexes. Each observation is a standalone (space, time) pair. Repeated values are possible.

- Essentially just a dataframe with a geometry column, datetime column, and value column.

STIDF(sp, time, data)- Arguments sp, time and data need to have the same number of records, and regardless of the class of time (xts or POSIXct) have to be in correspoinding order: the triple sp[i], time[i] and data[i, ] refer to the same observation

- I haven’t had any luck with this class in my limited usage (hanging

StVariogram). You can just create a STFDF class object with your data with NAs for your variable(s) at the filled-in time instances (StVariogramworked for me. See Interpolate >> Example 2).

- Use Cases

- Mobile sensors or moving animals: each record has its own location and timestamp — no fixed grid or station network.

- Lightning strikes: purely random events in continuous space-time.

- Trajectory Grids

- Trajectories cover the case where sets of (irregular) space-time points form sequences, and depict a trajectory.

- Their grouping may be simple (e.g., the trajectories of two persons on different days), nested (for several objects, a set of trajectories representing different trips) or more complex (e.g., with objects that split, merge, or disappear).

- Examples

- Human trajectories

- Mobile sensor measurements (where the sequence is kept, e.g., to derive the speed and direction of the sensor)

- Trajectories of tornados where the tornado extent of each time stamp can be reflected by a different polygon

Subsetting

{spacetime}

Notes from Subsetting of Spatio-Temporal Objects

Don’t subset STSDF (or probably STIDF objects too) as it’s not intuitive. You’re subsetting the index inside the object and not spatial locations. After index is subsetted, the resulting structure makes no sense to me. Best to do your subsetting before creating these objects.

Example data

spObj #> SpatialPoints: #> x y #> A 0 2 #> B 2 2 #> C 1 1 #> D 0 0 #> E 2 0 #> Coordinate Reference System (CRS) arguments: NA timeObj #> [1] "2010-08-05 EDT" "2010-08-06 EDT" mydata #> smpl1 smpl2 #> 1 8.798207 8.658649 #> 2 NA NA #> 3 29.764684 28.761798 #> 4 NA NA #> 5 49.808253 49.931965 #> 6 NA NA #> 7 NA NA #> 8 28.308468 29.619051 #> 9 39.334547 40.642147 #> 10 49.174389 51.285906 # the indexing for the STFDF object # 1 (A, t1) # 2 (A, t2) # 3 (B, t1) # 4 (B, t2) # 5 (C, t1) # 6 (C, t2) # 7 (D, t1) # 8 (D, t2) # 9 (E, t1) # 10 (E, t2)- Data used to create the various grid type objects

- When creating one of these objects,

nrow(object@data) == length(object@sp) * nrow(object@time)- In this example, 10 = 5 * 2

- When a STSDF (sparse) is constructed, rows with NA are dropped.

Spatially

Only first 3 locations

STFobj[1:3, ] #> An object of class "STF" #> Slot "sp": #> SpatialPoints: #> x y #> A 0 2 #> B 2 2 #> C 1 1 #> Coordinate Reference System (CRS) arguments: NA #> #> Slot "time": #> timeIndex #> 2010-08-05 1 #> 2010-08-06 2 #> #> Slot "endTime": #> [1] "2010-08-06 EDT" "2010-08-07 EDT"Only first 3 locations in reverse order

# in reverse order STFobj[3:1, ] #> An object of class "STF" #> Slot "sp": #> SpatialPoints: #> x y #> C 1 1 #> B 2 2 #> A 0 2 #> Coordinate Reference System (CRS) arguments: NA #> #> Slot "time": #> timeIndex #> 2010-08-05 1 #> 2010-08-06 2 #> #> Slot "endTime": #> [1] "2010-08-06 EDT" "2010-08-07 EDT"Using a logical vector

STFobj[c(TRUE,TRUE,FALSE,FALSE,TRUE), ] #> An object of class "STF" #> Slot "sp": #> SpatialPoints: #> x y #> A 0 2 #> B 2 2 #> E 2 0 #> Coordinate Reference System (CRS) arguments: NA #> #> Slot "time": #> timeIndex #> 2010-08-05 1 #> 2010-08-06 2 #> #> Slot "endTime": #> [1] "2010-08-06 EDT" "2010-08-07 EDT"Using a location names

STFobj[c("A","B"), ] #> An object of class "STF" #> Slot "sp": #> SpatialPoints: #> x y #> A 0 2 #> B 2 2 #> Coordinate Reference System (CRS) arguments: NA #> #> Slot "time": #> timeIndex #> 2010-08-05 1 #> 2010-08-06 2 #> #> Slot "endTime": #> [1] "2010-08-06 EDT" "2010-08-07 EDT"

Temporally

Uses {xts} for the time series component, so use their syntax to subset different time intervals

By years

STFDFobj[, "2023::2024"]- All data from 2023-01-01 to 2024-12-01

- Was in the {spacetime} vignette but not in xts docs ¯\_(ツ)_/¯

1 month

STFDFobj[,'2023-03'] # ❌ doesn't work STFDFobj[,'2023-03-01'] # ❌ doesn't work STFDFobj[, '2023-03/2023-03'] # worksEverything before August 2023 (includes August)

STFDFobj[,'/2023-08']Everything after August 2023 (doesn’t include August

STFDFobj[,'2023-08/']Subsetting by index is a pain and should be avoided. When you do it, you have to convert the resulting object back to a spacetime object.

{stars}

Used for STFDF layouts

The example data is only a spatial raster, but all the concepts should translate. Time would just be an extra dimension I think.

See

- EDA >> General >> Example 5 (subsets a dimension index)

- Kriging >> Examples >> Example 3 >> krigeST, Catboost (transforms attribute)

-

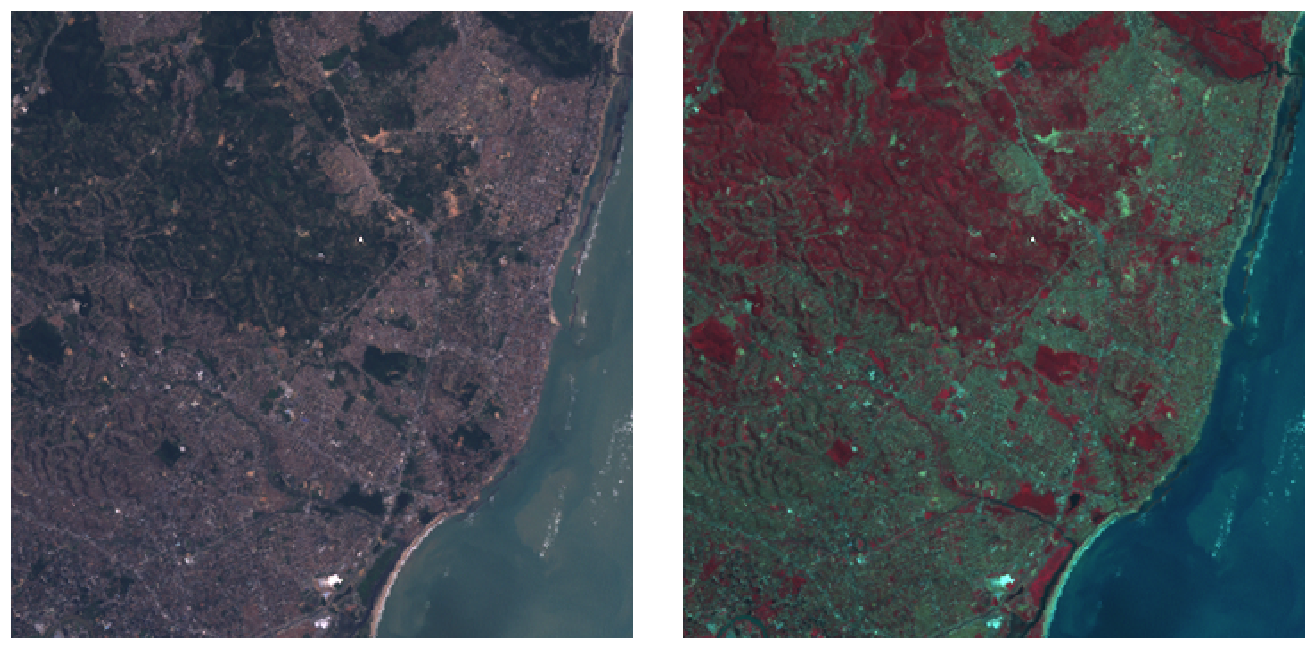

tif <- system.file("tif/L7_ETMs.tif", package = "stars") library(stars) # Loading required package: abind (r <- read_stars(tif)) # stars object with 3 dimensions and 1 attribute # attribute(s): # Min. 1st Qu. Median Mean 3rd Qu. Max. # L7_ETMs.tif 1 54 69 68.9 86 255 # dimension(s): # from to offset delta refsys point x/y # x 1 349 288776 28.5 SIRGAS 2000 / ... FALSE [x] # y 1 352 9120761 -28.5 SIRGAS 2000 / ... FALSE [y] # band 1 6 NA NA NA NA length(r) # [1] 1 class(r[[1]]) # [1] "array" dim(r[[1]]) # x y band # 349 352 6 st_bbox(r) # xmin ymin xmax ymax # 288776 9110729 298723 9120761 plot(r) par(mfrow = c(1, 2)) plot(r, rgb = c(3,2,1), reset = FALSE, main = "RGB") # rgb plot(r, rgb = c(4,3,2), main = "False colour (NIR-R-G)") # false colour- Components

from: starting indexto: ending indexoffset: dimension value at the start (edge) of the first pixeldelta: cell size; negativedeltavalues indicate that pixel index increases with decreasing dimension valuesrefsys: reference systempoint: logical, indicates whether cell values have point support or cell supportx/y: indicates whether a dimension is associated with a spatial raster x- or y-axis

- For regular, rotated, or sheared grids or other regularly discretised dimensions (such as time),

offsetanddeltaare notNA - Irregular cases,

offsetanddeltaareNAand thevaluesproperty contains one of:- In case of a rectilinear spatial raster or irregular time dimension, the sequence of values or intervals

- In case of a vector data cube, geometries associated with the spatial dimension

- In case of a curvilinear raster, the matrix with coordinate values for each raster cell

- In case of a discrete dimension, the band names or labels associated with the dimension values

- Components

By bracket

- Attributes first (by name, index, or logical vector)

- Followed by each dimension.

- Selecting discontinuous ranges is supported only when it is a regular sequence

- By default,

dropis FALSE, when set toTRUEdimensions with a single value are dropped

Example: bracket and dplyr

r[1:2, 101:200, , 5:10] # selecting particular ranges of dimension values filter(r, x > 289000, x < 290000) # stars object with 3 dimensions and 1 attribute # attribute(s): # Min. 1st Qu. Median Mean 3rd Qu. Max. # L7_ETMs.tif 5 51 63 64.3 75 242 # dimension(s): # from to offset delta refsys point x/y # x 1 35 289004 28.5 SIRGAS 2000 / ... FALSE [x] # y 1 352 9120761 -28.5 SIRGAS 2000 / ... FALSE [y] # band 1 6 1 1 NA NA slice(r, band, 3) # stars object with 2 dimensions and 1 attribute # attribute(s): # Min. 1st Qu. Median Mean 3rd Qu. Max. # L7_ETMs.tif 21 49 63 64.4 77 255 # dimension(s): # from to offset delta refsys point x/y # x 1 349 288776 28.5 SIRGAS 2000 / ... FALSE [x] # y 1 352 9120761 -28.5 SIRGAS 2000 / ... FALSE [y]- Selects from r attributes 1-2, index 101-200 for dimension 1, and index 5-10 for dimension 3; omitting dimension 2 means that no subsetting takes place.

- Note in components example, xmin of the bounding box was x’s offset and ymax was y’s offset. Now x’s offset is 289004 after filtering.

- x’s to index has changed

sliceselects the 3rd band and in the summary the band dimension is dropped since there’s only 1 now- Neither to index has changed

mutatecan be used onstarsobjects to add new arrays as functions of existing ones,transmutedrops existing ones

By buffer zone

b <- st_bbox(r) |> st_as_sfc() |> st_centroid() |> st_buffer(units::set_units(500, m)) r[b] # stars object with 3 dimensions and 1 attribute # attribute(s): # Min. 1st Qu. Median Mean 3rd Qu. Max. NA's # L7_ETMs.tif 22 54 66 67.7 78.2 174 2184 # dimension(s): # from to offset delta refsys point x/y # x 157 193 288776 28.5 SIRGAS 2000 / ... FALSE [x] # y 159 194 9120761 -28.5 SIRGAS 2000 / ... FALSE [y] # band 1 6 NA NA NA NA r[b] |> st_normalize() |> st_dimensions() # from to offset delta refsys point x/y # x 1 37 293222 28.5 SIRGAS 2000 / ... FALSE [x] # y 1 36 9116258 -28.5 SIRGAS 2000 / ... FALSE [y] # band 1 6 NA NA NA NA # alternatively st_crop(r, b)- This object still has dimension indexes relative to the offset and delta values of r

st_normalizeandst_dimensionsset the object with the new offset- i.e. the indexes are reset so this r[b] is it’s own independent stars object (if it was assigned to a variable). It’s now been cropped

Sample and Extract

set.seed(115517) pts <- st_bbox(r) |> st_as_sfc() |> st_sample(20) (e <- st_extract(r, pts)) # stars object with 2 dimensions and 1 attribute # attribute(s): # Min. 1st Qu. Median Mean 3rd Qu. Max. # L7_ETMs.tif 12 41.8 63 61 80.5 145 # dimension(s): # from to refsys point # geometry 1 20 SIRGAS 2000 / ... TRUE # band 1 6 NA NA # values # geometry POINT (293002 ...,...,POINT (290941 ... # band NULL- The output is a vector data cube

Data Formats

Misc

- {spacetime} supported Time Classes: Date, POSIXt, timeDate ({timeDate}), yearmon ({zoo}), and yearqtr ({zoo})

- Data and Spatial Classes:

- Points: Data having points support should use the SpatialPoints class for the spatial feature

- Polygons: Values reflect aggregates (e.g., sums, or averages) over the polygon (SpatialPolygonsDataFrame, SpatialPolygons)

- Grids: Values can be point data or aggregates over the cell.

stConstructcreates “a STFDF (full lattice/full grid) object if all space and time combinations occur only once, or else an object of class STIDF (irregular grid), which might be coerced into other representations.”- STFDF and {stars} objects require dimension elements to have the same order as the columns or rows in the attribute matrix or array.

Order the space dimension to be the same as the attribute object (Long >> Example 4)

bristol_longer <- bristol_od |> select(-all) |> pivot_longer(3:6, names_to = "mode", values_to = "n") # unique origin locations od <- bristol_tidy |> pull("o") |> unique() # order of the rows of the eventual attribute order <- match(od, bristol_zones$geo_code) # reorders eventual space dimension to match attribute order zones <- st_geometry(bristol_zones)[order]

- Latitude and Longitude to using {sp}

Example: (source)

# Create a SpatialPointsDataFrame coordinates(df) <- ~ LON + LAT projection(df) <- CRS("+init=epsg:4326") # Transform into Mercator Projection # SpatialPointsDataFrame with coordinates in meters. ozone.UTM <- spTransform(df, CRS("+init=epsg:3395")) # SpatialPoints ozoneSP <- SpatialPoints(ozone.UTM@coords, CRS("+init=epsg:3395"))

Long

The full spatio-temporal information (i.e. response value) is held in a single column, and location and time are also single columns.

Example 1: Private capital stock (?)

#> state year region pcap hwy water util pc gsp #> 1 ALABAMA 1970 6 15032.67 7325.80 1655.68 6051.20 35793.80 28418 #> 2 ALABAMA 1971 6 15501.94 7525.94 1721.02 6254.98 37299.91 29375 #> 3 ALABAMA 1972 6 15972.41 7765.42 1764.75 6442.23 38670.30 31303 #> 4 ALABAMA 1973 6 16406.26 7907.66 1742.41 6756.19 40084.01 33430 #> 5 ALABAMA 1974 6 16762.67 8025.52 1734.85 7002.29 42057.31 33749- Each row is a single time unit and space unit combination.

- This is likely not the row order you want though. I think these spatio-temporal object creation functions want ordered by time, then by space. (See Example)

Example 2: Create full grid spacetime object from a long table (7.2 Panel Data in {spacetime} vignette)

head(df_data); tail(df_data) #> state year region pcap hwy water util pc gsp emp unemp #> 1 ALABAMA 1970 6 15032.67 7325.80 1655.68 6051.20 35793.80 28418 1010.5 4.7 #> 18 ARIZONA 1970 8 10148.42 4556.81 1627.87 3963.75 23585.99 19288 547.4 4.4 #> 35 ARKANSAS 1970 7 7613.26 3647.73 644.99 3320.54 19749.63 15392 536.2 5.0 #> 52 CALIFORNIA 1970 9 128545.36 42961.31 17837.26 67746.79 172791.92 263933 6946.2 7.2 #> 69 COLORADO 1970 8 11402.52 4403.21 2165.03 4834.28 23709.75 25689 750.2 4.4 #> 86 CONNECTICUT 1970 1 15865.66 7237.14 2208.10 6420.42 24082.38 38880 1197.5 5.6 #> state year region pcap hwy water util pc gsp emp unemp #> 731 VERMONT 1986 1 2936.44 1830.16 335.51 770.78 6939.39 7585 234.4 4.7 #> 748 VIRGINIA 1986 5 28000.68 14253.92 4786.93 8959.83 71355.78 88171 2557.7 5.0 #> 765 WASHINGTON 1986 9 41136.36 11738.08 5042.96 24355.32 66033.81 67158 1769.9 8.2 #> 782 WEST_VIRGINIA 1986 5 10984.38 7544.99 834.01 2605.38 35781.74 21705 597.5 12.0 #> 799 WISCONSIN 1986 3 26400.60 10848.68 5292.62 10259.30 60241.65 70171 2023.9 7.0 #> 816 WYOMING 1986 8 5700.41 3400.96 565.58 1733.88 27110.51 10870 196.3 9.0 yrs <- 1970:1986 vec_time <- as.POSIXct(paste(yrs, "-01-01", sep=""), tz = "GMT") head(vec_time) #> [1] "1970-01-01 GMT" "1971-01-01 GMT" "1972-01-01 GMT" "1973-01-01 GMT" "1974-01-01 GMT" "1975-01-01 GMT" head(spatial_geom_ids) #> [1] "alabama" "arizona" "arkansas" "california" "colorado" "connecticut" class(geom_states) #> [1] "SpatialPolygons" #> attr(,"package") #> [1] "sp"- The names of the objects above don’t the match the ones in the example, but I wanted names that were more informative about what types of objects were needed. The package documentation and vignette are spotty with their descriptions and explanations.

- The row order of the spatial geometry object should match the row order of the spatial feature column (in each time section) of the dataframe.

- The data_df only has the state name (all caps) and the year as space and time features.

- spatial_geom_ids shows the order of the spatial geometry object (state polygons) which are state names in alphabetical order.

- At least in this example, the geometry object (geom_states) didn’t store the actual state names. It just used a id index (e.g. ID1, ID2, etc.)

st_data <- STFDF(sp = geom_states, time = vec_time, data = df_data) length(st_data) #> [1] 816 nrow(df_data) #> [1] 816 class(st_data) #> [1] "STFDF" #> attr(,"package") #> [1] "spacetime" df_st_data <- as.data.frame(st_data) head(df_st_data[1:6]); tail(df_st_data[1:6]) #> V1 V2 sp.ID time endTime timeIndex #> 1 -86.83042 32.80316 ID1 1970-01-01 1971-01-01 1 #> 2 -111.66786 34.30060 ID2 1970-01-01 1971-01-01 1 #> 3 -92.44013 34.90418 ID3 1970-01-01 1971-01-01 1 #> 4 -119.60154 37.26901 ID4 1970-01-01 1971-01-01 1 #> 5 -105.55251 38.99797 ID5 1970-01-01 1971-01-01 1 #> 6 -72.72598 41.62566 ID6 1970-01-01 1971-01-01 1 #> V1 V2 sp.ID time endTime timeIndex #> 811 -72.66686 44.07759 ID44 1986-01-01 1987-01-01 17 #> 812 -78.89655 37.51580 ID45 1986-01-01 1987-01-01 17 #> 813 -120.39569 47.37073 ID46 1986-01-01 1987-01-01 17 #> 814 -80.62365 38.64619 ID47 1986-01-01 1987-01-01 17 #> 815 -90.01171 44.63285 ID48 1986-01-01 1987-01-01 17 #> 816 -107.55736 43.00390 ID49 1986-01-01 1987-01-01 17- So combining of these objects into a spacetime object doesn’t seemed be based any names (e.g. a joining variable) of the elements of these separate objects.

- STFDF arguments:

- sp: An object of class Spatial, having n elements

- time: An object holding time information, of length m;

- data: A data frame with n*m rows corresponding to the observations

- Converting back to a dataframe adds 6 new columns to df_data:

- V1, V2: Latitude, Longitude

- sp.ID: IDs within the spatial geomtry object (geom_states)

- time: Time object values

- endTime: Used for intervals

- timeIndex: An index of time “sections” in the data dataframe. (e.g. 1:17 for 17 unique year values)

Example 3: Convert a STFDF to Long Format {sf} dataframe (source >> Example 1)

library(dplyr) data(air, package = "spacetime") # get rural location time series from 2005 to 2010 rural <- spacetime::STFDF( sp = stations, time = dates, data = data.frame(PM10 = as.vector(air)) ) rr <- rural[,"2005::2010"] # remove station ts w/all NAs unsel <- which(apply( as(rr, "xts"), 2, function(x) all(is.na(x))) ) r5to10 <- rr[-unsel,] # Convert to Long Format crs_r5 <- sp::proj4string(r5to10@sp) sf_r5 <- as.data.frame(r5to10) |> sf::st_as_sf(coords = c("coords.x1", "coords.x2"), crs = crs_r5) |> sf::st_transform(crs = 25832) |> # projected, UTM zone 32N filter(!is.na(PM10)) |> rename( date = time, pm10 = PM10, station_id = sp.ID ) |> select(-endTime, -timeIndex) tib_pm10 <- sf_r5 |> mutate( date_feat = date, # extra for feat eng X = sf::st_coordinates(geometry)[, 1], Y = sf::st_coordinates(geometry)[, 2] ) |> sf::st_drop_geometry()- The essential elements here are pulling the CRS using {sp}

proj4stringand converting to a dataframe using the {spacetime}as.data.framemethod

- The essential elements here are pulling the CRS using {sp}

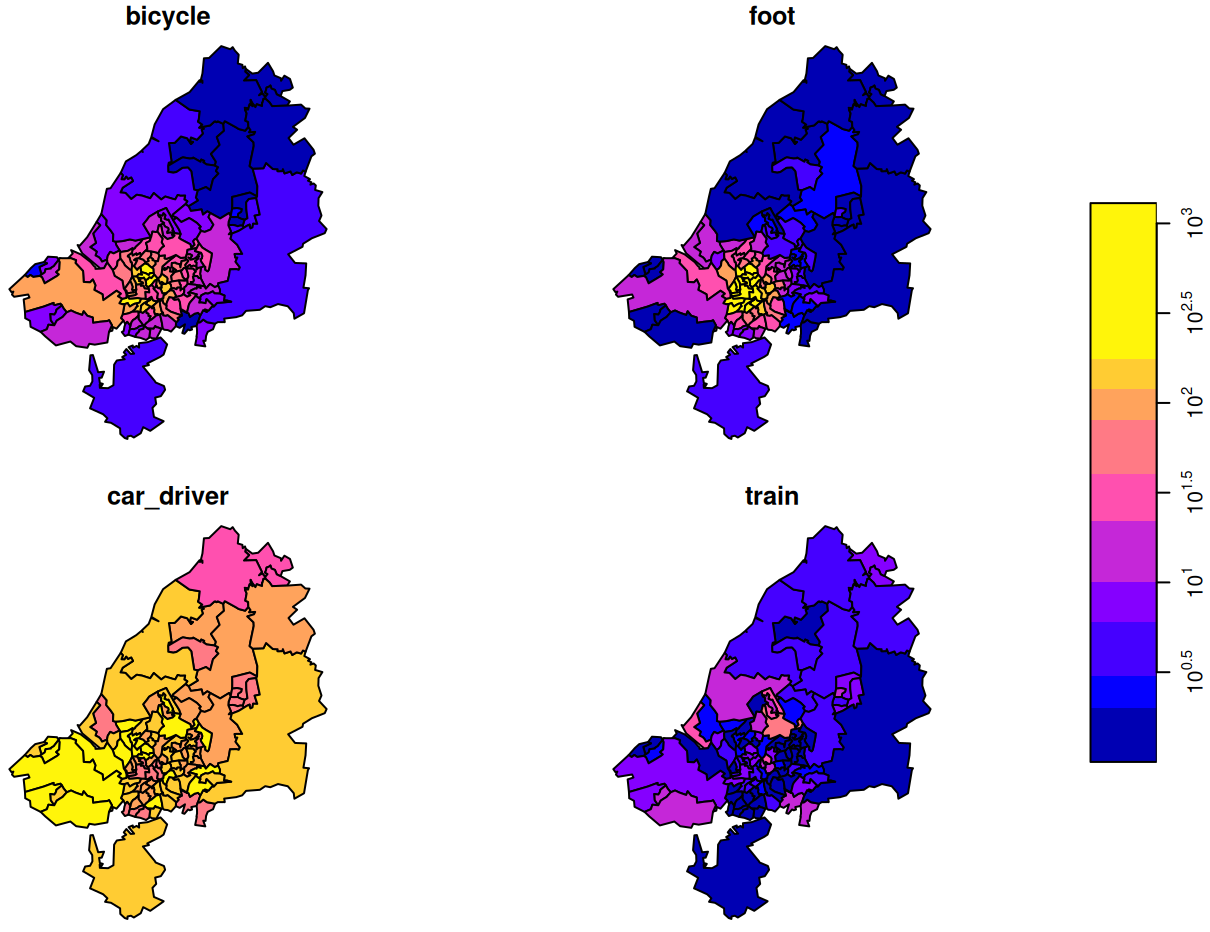

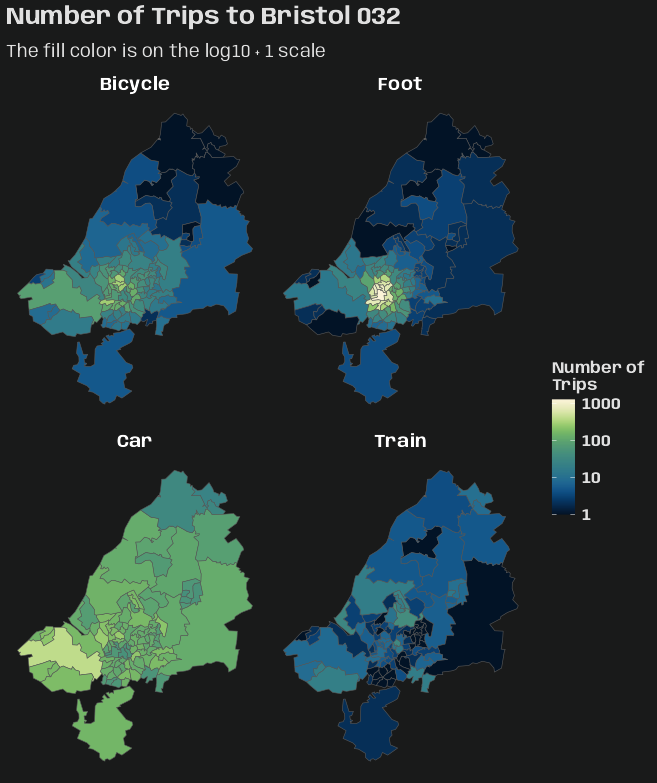

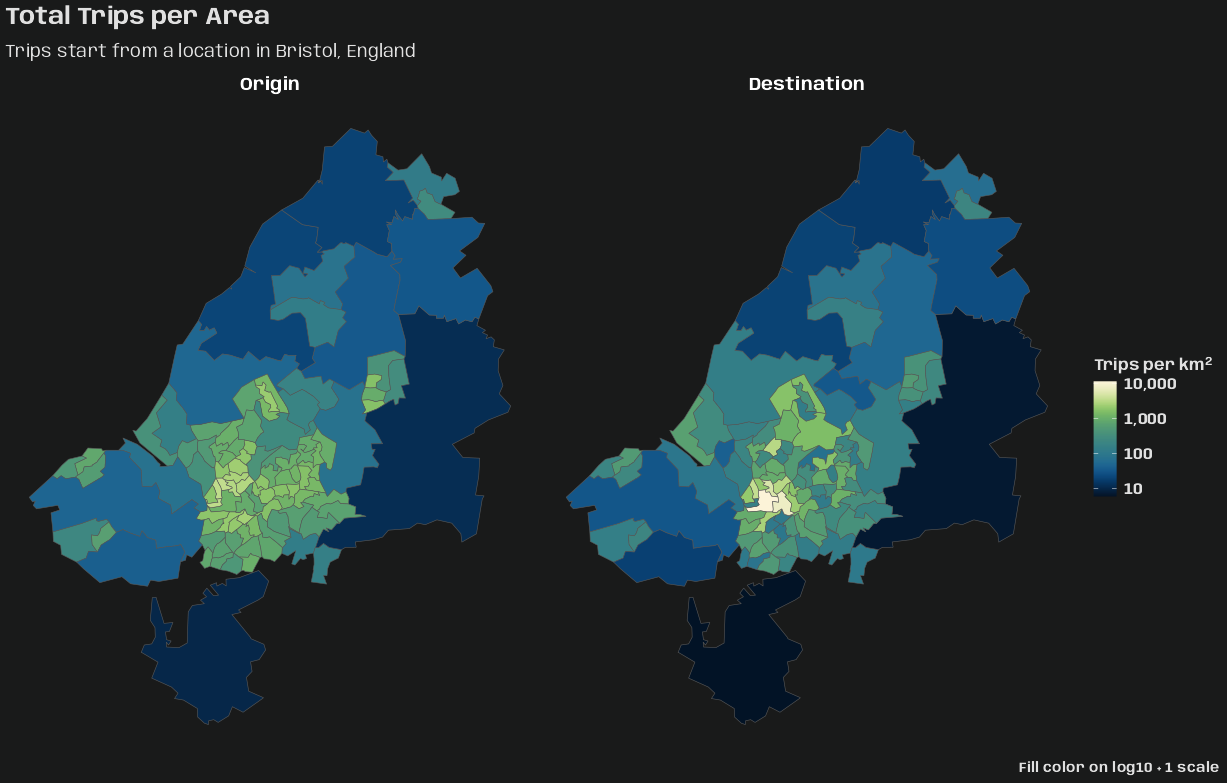

Example 4: Origin-Destination (no time) Table to {stars}

A table which counts trips from different origins to destinations using various modes of transportation gets converted to a {stars} object

Also see Time-wide >> Example 2 for an example of space-time matrix being converted to a {stars} object

library(dplyr); library(stars) data("bristol_zones", package = "spDataLarge") data("bristol_od", package = "spDataLarge") head(bristol_od) #> # A tibble: 6 × 7 #> o d all bicycle foot car_driver train #> <chr> <chr> <dbl> <dbl> <dbl> <dbl> <dbl> #> 1 E02002985 E02002985 209 5 127 59 0 #> 2 E02002985 E02002987 121 7 35 62 0 #> 3 E02002985 E02003036 32 2 1 10 1 #> 4 E02002985 E02003043 141 1 2 56 17 #> 5 E02002985 E02003049 56 2 4 36 0 #> 6 E02002985 E02003054 42 4 0 21 0 class(bristol_zones) #> [1] "sf" "data.frame" head(bristol_zones) #> Simple feature collection with 6 features and 2 fields #> Geometry type: MULTIPOLYGON #> Dimension: XY #> Bounding box: xmin: -2.707892 ymin: 51.28248 xmax: -2.460934 ymax: 51.54443 #> Geodetic CRS: WGS 84 #> geo_code name geometry #> 2905 E02002985 Bath and North East Somerset 001 MULTIPOLYGON (((-2.510462 5... #> 2907 E02002987 Bath and North East Somerset 003 MULTIPOLYGON (((-2.476122 5... #> 2925 E02003005 Bath and North East Somerset 021 MULTIPOLYGON (((-2.55073 51... #> 2932 E02003012 Bristol 001 MULTIPOLYGON (((-2.595763 5... #> 2933 E02003013 Bristol 002 MULTIPOLYGON (((-2.593783 5... #> 2934 E02003014 Bristol 003 MULTIPOLYGON (((-2.639581 5... nrow(bristol_zones) #> [1] 102 # number origin-destination pairs not present in the data nrow(bristol_zones)^2 - nrow(bristol_od) #> [1] 7494- This doesn’t have a time column, but I thought it was a good example of creating a {stars} object with multiple space dimensions and a categorical dimension

- To see how to do this with coordinates and a time dimension, see Kriging >> Examples >> Example 3 >> Catboost >> Process Predictions

# index used to fill the array bristol_longer <- bristol_od |> select(-all) |> tidyr::pivot_longer(3:6, names_to = "mode", values_to = "n") head(bristol_tidy) #> # A tibble: 6 × 4 #> o d mode n #> <chr> <chr> <chr> <dbl> #> 1 E02002985 E02002985 bicycle 5 #> 2 E02002985 E02002985 foot 127 #> 3 E02002985 E02002985 car_driver 59 #> 4 E02002985 E02002985 train 0 #> 5 E02002985 E02002987 bicycle 7 #> 6 E02002985 E02002987 foot 35 bris_trans_modes <- bristol_longer |> pull("mode") |> unique() # create zero-array with appropriate dims and dimnames arr_bris = array( 0L, dim = c( length(bristol_zones$geo_code), length(bristol_zones$geo_code), length(bris_trans_modes) ), dimnames = list( origin = bristol_zones$geo_code, dest = bristol_zones$geo_code, trans_mode = bris_trans_modes) ) dim(arr_bris) #> [1] 102 102 4 arr_bris_idx <- as.matrix(bristol_longer[c("o", "d", "mode")]) # fill in counts at these array indexes. arr_bris[arr_bris_idx] <- bristol_longer$n- A three dimensional array is created with the origin, destination, and mode of transportation as dimensions.

- I feel like bristol_zones is a sort of look-up table. So, I’m using it to create dim sizes and dim names in arr_bris, because bristol_od could be a monthly table that may not include one of the zones as an origin or destination in the future.

- In this case, bristol_od does happen to have all zones in o and d.

- This could also be the case with mode, but there is nothing that looks like a mode of transportation look-up table in {spDataLarge}. So, I’m just trusting all possible modes are included in bristol_od.

- The array is filled with counts (n) according to the index (bristol_longer).

- The order of the columns in the bristol_longer are the same as the order of the dims and dimnames specified in the zero-array.

- If it were the case that bristol_od didn’t have one of the possible zones, this filling procedure would still be fine. The zone-to-zone combination in the zero-array that didn’t get filled with a n value would just remain 0.

(star_dims_bris <- st_dimensions( origin = st_geometry(bristol_zones), dest = st_geometry(bristol_zones), trans_mode = bris_trans_modes )) #> from to refsys point values #> origin 1 102 WGS 84 FALSE MULTIPOLYGON (((-2.510462...,...,MULTIPOLYGON (((-2.55007 ... #> dest 1 102 WGS 84 FALSE MULTIPOLYGON (((-2.510462...,...,MULTIPOLYGON (((-2.55007 ... #> trans_mode 1 4 NA FALSE bicycle,...,train (star_bris_od <- st_as_stars( list(n_trips = arr_bris), dimensions = star_dims_bris )) #> stars object with 3 dimensions and 1 attribute #> attribute(s): #> Min. 1st Qu. Median Mean 3rd Qu. Max. #> n_trips 0 0 0 4.801591 0 1296 #> dimension(s): #> from to refsys point values #> origin 1 102 WGS 84 FALSE MULTIPOLYGON (((-2.510462...,...,MULTIPOLYGON (((-2.55007 ... #> dest 1 102 WGS 84 FALSE MULTIPOLYGON (((-2.510462...,...,MULTIPOLYGON (((-2.55007 ... #> trans_mode 1 4 NA FALSE bicycle,...,train- The method of

st_as_starsused for an array is the same as for matrix which is list. - The two space dimensions have geometries which is what we expect for space variables, but a grouping variable (3rd array dimension) is also included

- As with creating STFDF objects, all space (and time if it were here) dimensions are unique vectors.

- This doesn’t have a time column, but I thought it was a good example of creating a {stars} object with multiple space dimensions and a categorical dimension

Space-wide

Each space unit is a column

Example 1: Wind speeds

#> year month day RPT VAL ROS KIL SHA BIR DUB CLA MUL #> 1 61 1 1 15.04 14.96 13.17 9.29 13.96 9.87 13.67 10.25 10.83 #> 2 61 1 2 14.71 16.88 10.83 6.50 12.62 7.67 11.50 10.04 9.79 #> 3 61 1 3 18.50 16.88 12.33 10.13 11.17 6.17 11.25 8.04 8.50 #> 4 61 1 4 10.58 6.63 11.75 4.58 4.54 2.88 8.63 1.79 5.83 #> 5 61 1 5 13.33 13.25 11.42 6.17 10.71 8.21 11.92 6.54 10.92 #> 6 61 1 6 13.21 8.12 9.96 6.67 5.37 4.50 10.67 4.42 7.17- Each row is a unique time unit

Example 2: Create full grid spacetime object from a space-wide table (7.3 Interpolating Irish Wind in {spacetime} vignette)

class(mat_wind_velos) #> [1] "matrix" "array" dim(mat_wind_velos) #> [1] 6574 12 mat_wind_velos[1:6, 1:6] #> RPT VAL ROS KIL SHA BIR #> [1,] 0.47349183 0.4660816 0.29476767 -0.12216857 0.37172544 -0.0549353 #> [2,] 0.43828072 0.6342761 0.04762928 -0.48431251 0.23530275 -0.3264873 #> [3,] 0.76797566 0.6297610 0.20133157 -0.03446898 0.07989186 -0.5358676 #> [4,] 0.01118683 -0.4751407 0.13684623 -0.78709720 -0.79381717 -1.1049744 #> [5,] 0.29298091 0.2851083 0.09805298 -0.54439303 0.02147079 -0.2707680 #> [6,] 0.27715281 -0.2860777 -0.06624749 -0.47759668 -0.66795421 -0.8085874 class(wind$time) #> [1] "POSIXct" "POSIXt" wind$time[1:5] #> [1] "1961-01-01 12:00:00 GMT" "1961-01-02 12:00:00 GMT" "1961-01-03 12:00:00 GMT" "1961-01-04 12:00:00 GMT" "1961-01-05 12:00:00 GMT" class(geom_stations) #> [1] "SpatialPoints" #> attr(,"package") #> [1] "sp"- See Long >> Example >> Create full grid spacetime object for a more detailed breakdown of creating these objects

- The order of station geometries in geom_stations should match the order of the columns in mat_wind_velos

- In the vignette, the data used to create the geometry object is ordered according to the matrix using

match. See R, Base R >> Functions >>matchfor the code.

- In the vignette, the data used to create the geometry object is ordered according to the matrix using

st_wind = stConstruct( # space-wide matrix x = mat_wind_velos, # index for spatial feature/spatial geometries space = list(values = 1:ncol(mat_wind_velos)), # datetime column from original data time = wind$time, # spatial geometry SpatialObj = geom_stations, interval = TRUE ) class(st_wind) #> [1] "STFDF" #> attr(,"package") #> [1] "spacetime" df_st_wind <- as.data.frame(st_wind) dim(df_st_wind) #> [1] 78888 7 head(df_st_wind); tail(df_st_wind) #> coords.x1 coords.x2 sp.ID time endTime timeIndex values #> 1 551716.7 5739060 1 1961-01-01 12:00:00 1961-01-02 12:00:00 1 0.4734918 #> 2 414061.0 5754361 2 1961-01-01 12:00:00 1961-01-02 12:00:00 1 0.4660816 #> 3 680286.0 5795743 3 1961-01-01 12:00:00 1961-01-02 12:00:00 1 0.2947677 #> 4 617213.8 5836601 4 1961-01-01 12:00:00 1961-01-02 12:00:00 1 -0.1221686 #> 5 505631.2 5838902 5 1961-01-01 12:00:00 1961-01-02 12:00:00 1 0.3717254 #> 6 574794.2 5882123 6 1961-01-01 12:00:00 1961-01-02 12:00:00 1 -0.0549353 #> coords.x1 coords.x2 sp.ID time endTime timeIndex values #> 78883 682680.1 5923999 7 1978-12-31 12:00:00 1979-01-01 12:00:00 6574 0.8600970 #> 78884 501099.9 5951999 8 1978-12-31 12:00:00 1979-01-01 12:00:00 6574 0.1589576 #> 78885 608253.8 5932843 9 1978-12-31 12:00:00 1979-01-01 12:00:00 6574 0.1536922 #> 78886 615289.0 6005361 10 1978-12-31 12:00:00 1979-01-01 12:00:00 6574 0.1325158 #> 78887 434818.6 6009945 11 1978-12-31 12:00:00 1979-01-01 12:00:00 6574 0.2058466 #> 78888 605634.5 6136859 12 1978-12-31 12:00:00 1979-01-01 12:00:00 6574 1.0835638- space has a confusing description. Here it’s just a numerical index for the number of columns of mat_wind_velos which would match an index/order for the geometries in geom_stations.

- interval = TRUE, since the values are daily mean wind speed (aggregation)

- See Time and Movement section

- df_st_wind is in long format

Time-wide

Each time unit is a column

Example 1: Counts of SIDS

#> NAME BIR74 SID74 NWBIR74 BIR79 SID79 NWBIR79 #> 1 Ashe 1091 1 10 1364 0 19 #> 2 Alleghany 487 0 10 542 3 12 #> 3 Surry 3188 5 208 3616 6 260 #> 4 Currituck 508 1 123 830 2 145 #> 5 Northampton 1421 9 1066 1606 3 1197- SID74 contains to the infant death syndrome cases for each county at a particular time period (1974-1984). SID79 are SIDS deaths from 1979-84.

- NWB is non-white births, BIR is births.

- Each row is a spatial unit and unique

- SID74 contains to the infant death syndrome cases for each county at a particular time period (1974-1984). SID79 are SIDS deaths from 1979-84.

Example 2: Space × Time Matrix to {stars} Object (i.e. STFDF)

A table with German PM10 air polution meausrements at different stations over multiple year period gets converted to a {stars} object

Also see Long >> Example 4 for an example of creating a {stars} object with three dimensions (array)library(stars) data(air, package = "spacetime") # where space is the dimname for stations (rows) # and time is the dimname for dates (columns) dim(air) #> space time #> 70 4383 air[1:10, 1000:1010] #> [,1] [,2] [,3] [,4] [,5] [,6] [,7] [,8] [,9] [,10] [,11] #> DESH001 25.521 34.833 25.854 36.021 52.562 59.833 29.458 26.146 22.312 32.789 15.729 #> DENI063 30.083 45.795 35.979 36.087 73.167 79.792 34.354 37.104 33.000 36.625 14.417 #> DEUB038 NA NA NA NA NA NA NA NA NA NA NA #> DEBE056 NA NA NA NA NA NA NA NA NA NA NA #> DEBE062 NA NA NA NA NA NA NA NA NA NA NA #> DEBE032 NA NA NA NA NA NA NA NA NA NA NA #> DEHE046 24.562 21.479 25.771 29.646 48.688 24.542 13.479 9.958 14.333 17.625 13.333 #> DEUB007 NA NA NA NA NA NA NA NA NA NA NA #> DENW081 NA NA NA NA NA NA NA NA NA NA NA #> DESH008 30.875 35.542 32.042 36.417 54.625 60.000 41.583 24.292 22.542 34.250 16.750 class(stations) #> [1] "SpatialPoints" #> attr(,"package") #> [1] "sp" head(stations) #> SpatialPoints: #> coords.x1 coords.x2 #> DESH001 9.585911 53.67057 #> DENI063 9.685030 53.52418 #> DEUB038 9.791584 54.07312 #> DEBE056 13.647013 52.44775 #> DEBE062 13.296353 52.65315 #> DEBE032 13.225856 52.47309 #> Coordinate Reference System (CRS) arguments: +proj=longlat +datum=WGS84 +no_defs sfc_stations <- stations |> sf::st_as_sf(coords = c("coords.x1", "coords.x2"), crs = 4326) |> sf::st_geometry() class(sfc_stations) #> [1] "sfc_POINT" "sfc" head(sfc_stations) #> Geometry set for 6 features #> Geometry type: POINT #> Dimension: XY #> Bounding box: xmin: 9.585911 ymin: 52.44775 xmax: 13.64701 ymax: 54.07312 #> Geodetic CRS: +proj=longlat +datum=WGS84 +no_defs #> First 5 geometries: #> POINT (9.585911 53.67057) #> POINT (9.68503 53.52418) #> POINT (9.791584 54.07312) #> POINT (13.64701 52.44775) #> POINT (13.29635 52.65315) class(dates) #> [1] "Date" summary(dates) #> Min. 1st Qu. Median Mean 3rd Qu. Max. #> "1998-01-01" "2000-12-31" "2004-01-01" "2004-01-01" "2006-12-31" "2009-12-31" star_dims <- st_dimensions( station = sfc_stations, time = dates ) star_air <- st_as_stars( .x = list(pm10 = air), dimensions = star_dims ) star_air #> stars object with 2 dimensions and 1 attribute #> attribute(s): #> Min. 1st Qu. Median Mean 3rd Qu. Max. NA's #> pm10 0 9.921 14.792 17.69728 21.992 274.333 157659 #> dimension(s): #> from to offset delta refsys point #> station 1 70 NA NA +proj=longlat +datum=WGS8... TRUE #> time 1 4383 1998-01-01 1 days Date FALSE #> values #> station POINT (9.585911 53.67057),...,POINT (9.446661 49.24068) #> time NULL- Similar contruction to a STFDF object. Guessing order of dates and stations object need to have the same order as they are in the space-time matrix

- See Long >> Example 2 and Space-wide >> Example 2 for more details on what this means.

- sfc_stations is the extracted geometry column of a sf dataframe. It’s essentially just a coordinates matrix with attributes.

str(stars_air) #> List of 1 #> $ pm10: num [1:70, 1:4383] NA NA NA NA NA NA NA NA NA NA ... #> ..- attr(*, "dimnames")=List of 2 #> .. ..$ : chr [1:70] "DESH001" "DENI063" "DEUB038" "DEBE056" ... #> .. ..$ : NULL #> - attr(*, "dimensions")=List of 2 #> ..$ station:List of 7 #> .. ..$ from : num 1 #> .. ..$ to : int 70 #> .. ..$ offset: num NA #> .. ..$ delta : num NA #> .. ..$ refsys:List of 2 #> .. .. ..$ input: chr "+proj=longlat +datum=WGS84 +no_defs" #> .. .. ..$ wkt : chr "GEOGCRS[\"unknown\",\n DATUM[\"World Geodetic System 1984\",\n ELLIPSOID[\"WGS 84\",6378137,298.25722"| __truncated__ #> .. .. ..- attr(*, "class")= chr "crs" #> .. ..$ point : logi TRUE #> .. ..$ values:sfc_POINT of length 70; first list element: 'XY' num [1:2] 9.59 53.67 #> .. ..- attr(*, "class")= chr "dimension" #> ..$ time :List of 7 #> .. ..$ from : num 1 #> .. ..$ to : int 4383 #> .. ..$ offset: Date[1:1], format: "1998-01-01" #> .. ..$ delta : 'difftime' num 1 #> .. .. ..- attr(*, "units")= chr "days" #> .. ..$ refsys: chr "Date" #> .. ..$ point : logi FALSE #> .. ..$ values: NULL #> .. ..- attr(*, "class")= chr "dimension" #> ..- attr(*, "raster")=List of 4 #> .. ..$ affine : num [1:2] 0 0 #> .. ..$ dimensions : chr [1:2] NA NA #> .. ..$ curvilinear: logi FALSE #> .. ..$ blocksizes : NULL #> .. ..- attr(*, "class")= chr "stars_raster" #> ..- attr(*, "class")= chr "dimensions" #> - attr(*, "class")= chr "stars"- Similar contruction to a STFDF object. Guessing order of dates and stations object need to have the same order as they are in the space-time matrix

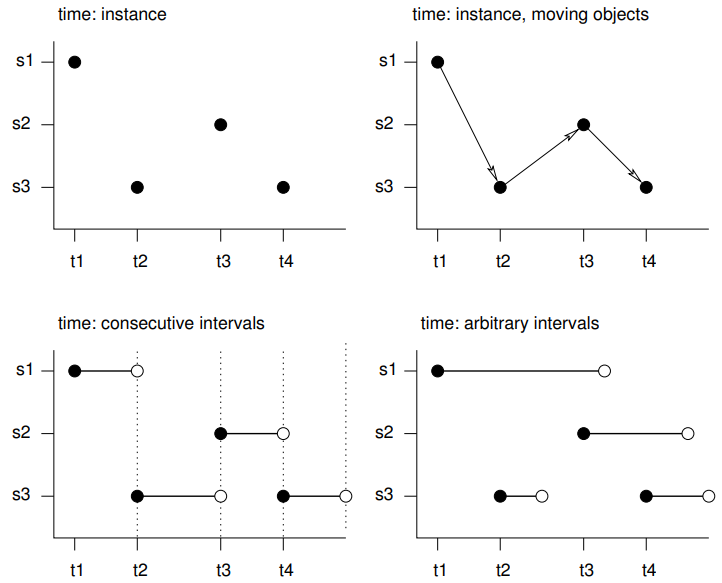

Time and Movement

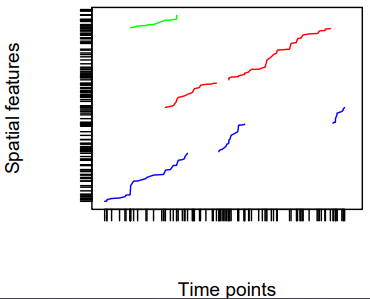

- s1 refers to the first feature/location, t1 to the first time instance or interval, thick lines indicate time intervals, arrows indicate movement. Filled circles denote start time, empty circles end times, intervals are right-closed

- Spatio-temporal objects essentially needs specification of the spatial, the temporal, and the data values

- Intervals - The spatial feature (e.g. location) or its associated data values does not change during this interval, but reflects the value or state during this interval (e.g. an average)

- e.g. yearly mean temperature at a set of locations

- Instants - Reflects moments of change (e.g., the start of the meteorological summer), or events with a zero or negligible duration (e.g., an earthquake, a lightning).

- Movement - Reflects objects may change location during a time interval. For time instants. locations are at a particular moment, and movement occurs between registered (time, feature) pairs and must be continuous.

- Trajectories - Where sets of (irregular) space-time points form sequences, and depict a trajectory.

- Their grouping may be simple (e.g., the trajectories of two persons on different days), nested (for several objects, a set of trajectories representing different trips) or more complex (e.g., with objects that split, merge, or disappear).

- Examples

- Human trajectories

- Mobile sensor measurements (where the sequence is kept, e.g., to derive the speed and direction of the sensor)

- Trajectories of tornados where the tornado extent of each time stamp can be reflected by a different polygon

EDA

Misc

- Also see EDA, Time Series

- Recommended workflow prior to starting spatio-temporal analysis (source)

- Go from simple analysis to complex (analysis) to get a feel for your data first. A lot of times, you probably will not need to do a spatio-temporal analysis at all.

- Steps

- Run univariate/multivariate analysis while ignoring space and time variables

- Aggregate over locations (e.g. average across all time points for each location). Then run spatial analysis (e.g. spatial regression, kriging, etc.).

- Looking for patterns, hotspots, etc.

group_by(location) |> summarize(median_val = median(val))- e.g. min, mean, median, or max depending on the project

- Aggregate over time (e.g. average across all locations for each time point). Then run time series analysis (e.g. moving average, arima, etc.).

- Looking trend, seasonality, shocks, etc.

group_by(date) |> summarize(max_val = max(val))

- Select interesting or important locations (e.g. top sales store, crime hotspot) and run a time series analysis on that location’s data

- Select interesting or important time points (e.g. holiday, noon) and run a spatial analysis on that time point

- Run a spatio-temporal analysis

- Check for duplicate locations:

Kriging cannot handle duplicate locations and returns an error, generally in the form of a “singular matrix”.

Examples:

dupl <- sp::zerodist(spatialpoints_obj) # sf dup <- duplicated(st_coordinates(sf_obj)) log_mat <- st_equals(sf_obj) # terra (or w/spatraster, spatvector) d <- distance(lon_lat_mat) log_mat <- which(d == 0, arr.ind = TRUE) # base r dup_indices <- duplicated(df[, c("lat", "long")]) # within a tolerance # Find points within 0.01 distance dup_pairs <- st_is_within_distance(sf_obj, dist = 0.01, sparse = FALSE)

General

-

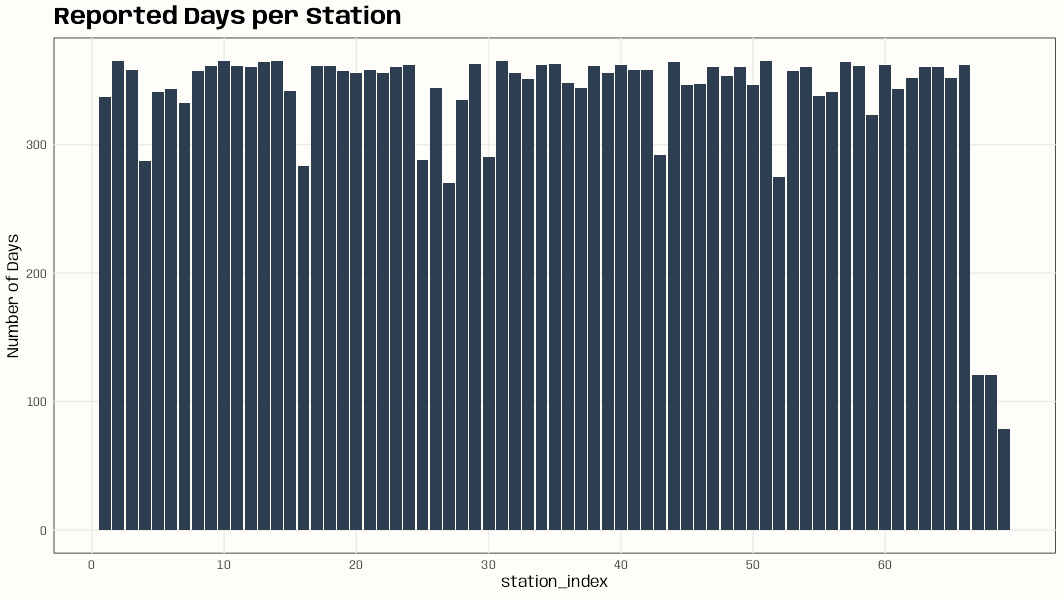

Code

library(dplyr); library(ggplot2) data(DE_RB_2005, package = "gstat") class(DE_RB_2005) #> [1] "STSDF" tibble(station_index = DE_RB_2005@index[,1]) |> count(station_index) |> ggplot(aes(x = station_index, y = n)) + geom_col(fill = "#2c3e50") + scale_x_continuous(breaks = seq.int(0, length(unique(DE_RB_2005@index[,1])), 10)) + ylab("Number of Days") + ggtitle("Reported Days per Station") + theme_notebook() # station names for some indexes w/low number of days row.names(DE_RB_2005@sp)[c(4, 16, 52, 67, 68, 69)] #> [1] "DEUB038" "DEUB039" "DEUB026" "DEHE052.1" "DEHE042.1" "DEHE060"- If there’s there are locations with few observations on too many days, this can result in volatile variograms.

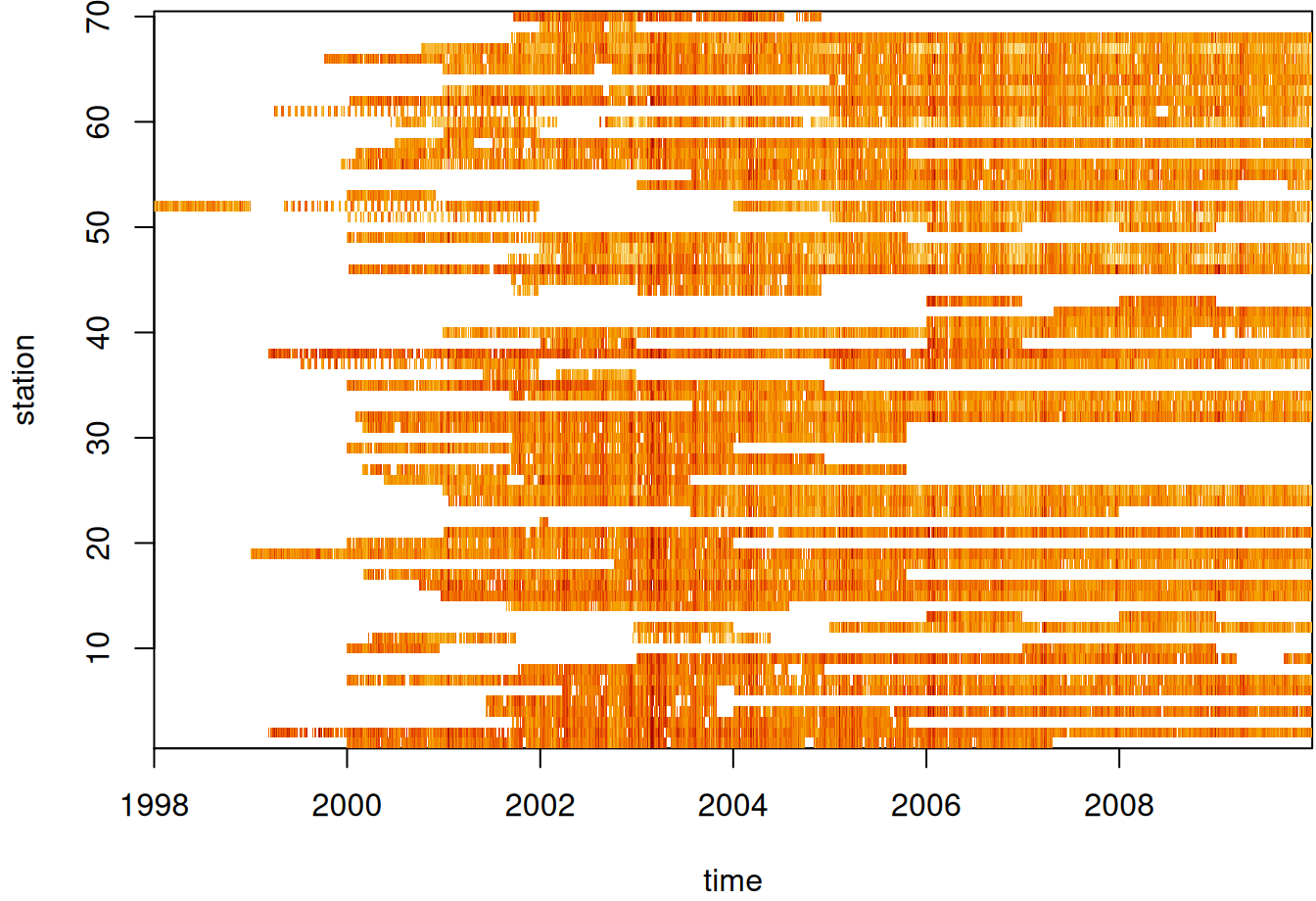

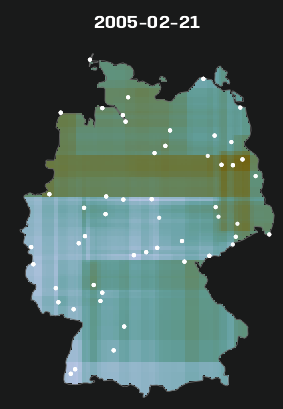

Example 2: Station vs. Days (source)

par(mar = c(5.1, 4.1, 0.3, 0.1)) image(aperm(log(star_pm10), 2:1), main = NULL)- See Data Formats >> Time-wide >> Example 2 for the code for star_pm10

- Missing values in white I assume.

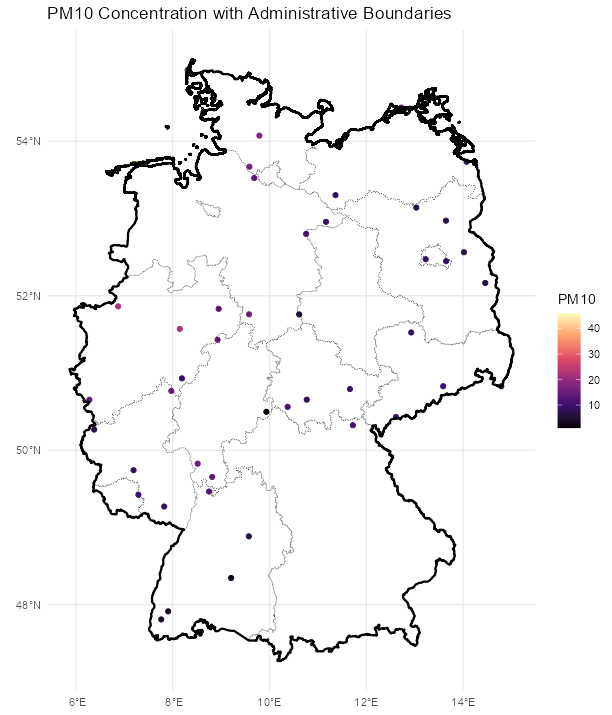

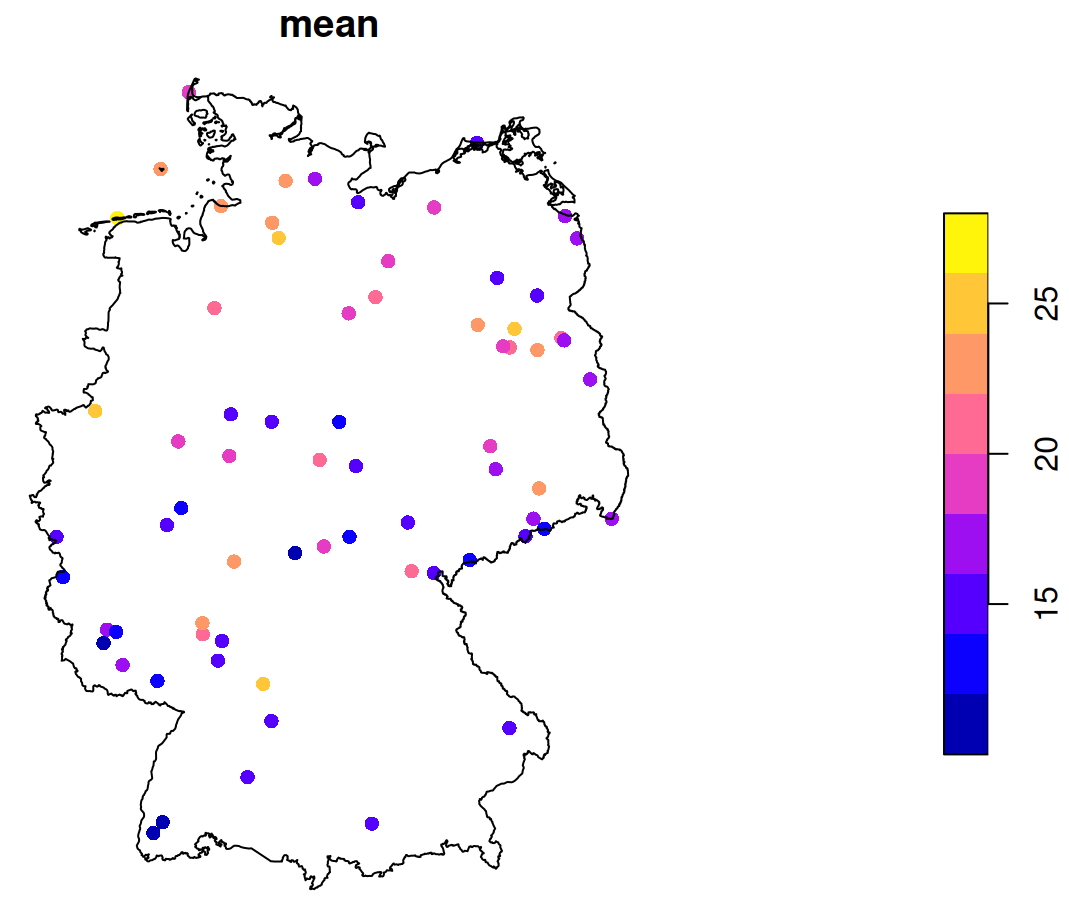

Example 3: Mean Value by Location (source)

st_as_sf(st_apply(star_pm10, 1, mean, na.rm = TRUE)) |> plot(reset = FALSE, pch = 16, extent = de_nuts1) st_union(de_nuts1) |> plot(add = TRUE)- See Data Formats >> Time-wide >> Example 2 for the code for star_pm10

- de_nuts1 also loads with

data(air, package = "spacetime")

- de_nuts1 also loads with

- See Data Formats >> Time-wide >> Example 2 for the code for star_pm10

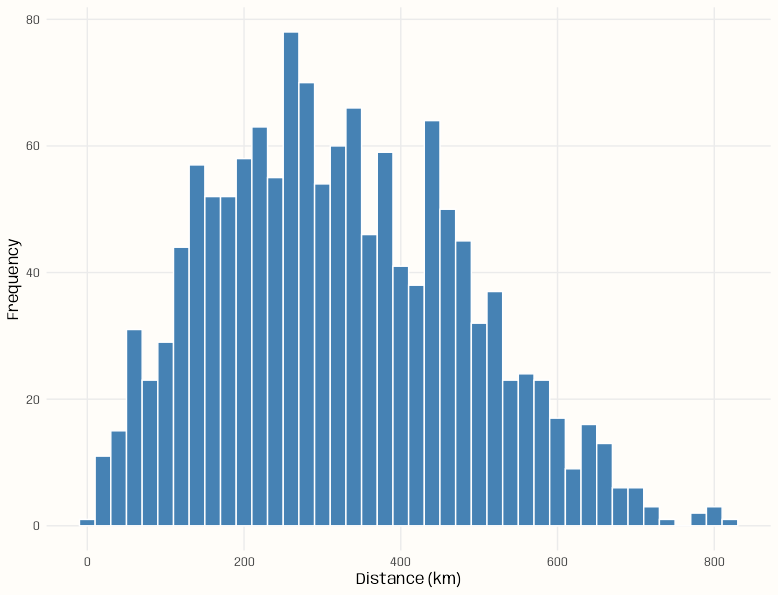

Example 4: Counts of pairwise distances between locations

Code

pacman::p_load( spacetime, sf, ggplot2 ) data(air) # get rural location time series from 2005 to 2010 rural <- STFDF( sp = stations, time = dates, data = data.frame(PM10 = as.vector(air)) ) rr <- rural[,"2005::2010"] # remove station ts w/all NAs unsel <- which(apply( as(rr, "xts"), 2, function(x) all(is.na(x))) ) r5to10 <- rr[-unsel,] sf_locs <- st_as_sf(r5to10@sp) # Calculate pairwise distances mat_dist_sf <- st_distance(sf_locs) # Convert to kilometers mat_dist_sf_km <- units::set_units(mat_dist_sf, km) |> units::drop_units() dim(mat_dist_sf_km) #> [1] 53 53 tibble::tibble( distance = mat_dist_sf_km[upper.tri(mat_dist_sf_km)] ) |> ggplot(aes(x = distance)) + geom_histogram( binwidth = 20, fill = "steelblue", color = "#FFFDF9FF") + labs(x = "Distance (km)", y = "Frequency") + theme_notebook() summary(mat_dist_sf_km[upper.tri(mat_dist_sf_km)]) #> Min. 1st Qu. Median Mean 3rd Qu. Max. #> 7.027 197.849 308.533 321.976 439.895 813.742- The bin width is set to 20 km (which is the setting for the variogram model in the {gstat} vignette)

- For kriging, ideally we’d want at least around 100 pairwise distances in each of the first few bins (short pairwise distances) for a stable range estimate, but no pairwise distance bin has that many. (See Geospatial, Kriging >> Bin Width)

- This is due to there only being 53 locations and the selection of only rural locations

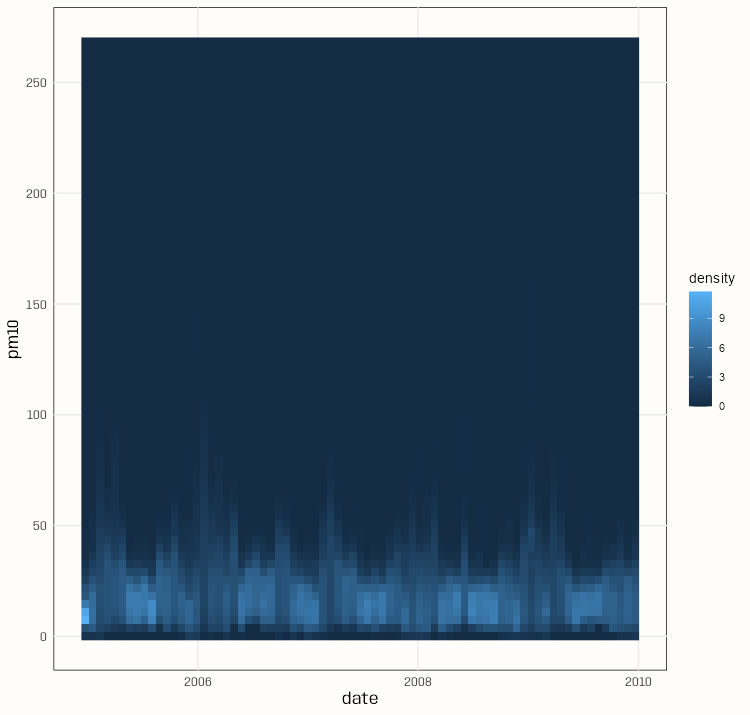

-

Code

pacman::p_load( spacetime, dplyr, gglinedensity, ggplot2 ) data(air) rural <- STFDF( sp = stations, time = dates, data = data.frame(PM10 = as.vector(air)) ) rr <- rural[,"2005::2010"] # remove station ts w/all NAs unsel <- which(apply( as(rr, "xts"), 2, function(x) all(is.na(x))) ) r5to10 <- rr[-unsel,] pm10_tbl <- as_tibble(as.data.frame(r5to10)) |> rename(station = sp.ID, date = time, pm10 = PM10) |> mutate(date = as.Date(date)) |> select(date, station, pm10) summary(pm10_tbl) #> date station pm10 #> Min. :2005-01-01 DESH001: 1826 Min. : 0.560 #> 1st Qu.:2006-04-02 DENI063: 1826 1st Qu.: 9.275 #> Median :2007-07-02 DEUB038: 1826 Median : 13.852 #> Mean :2007-07-02 DEBE056: 1826 Mean : 16.261 #> 3rd Qu.:2008-10-01 DEBE032: 1826 3rd Qu.: 20.333 #> Max. :2009-12-31 DEHE046: 1826 Max. :269.079 #> (Other):85822 NA's :21979 ggplot( pm10_tbl, aes(x = date, y = pm10, group = station)) + stat_line_density( bins = 75, drop = FALSE, na.rm = TRUE) + scale_y_continuous(breaks = seq.int(0, 250, 50)) + theme_notebook()- With a bunch of time series, it’s difficult to distinguish a primary trend to the data (e.g. in a spaghetti line chart). This density gives a sense of the seasonality and the trend of most of the locations.

- The summary says the median is around 14 and the max is around 270.

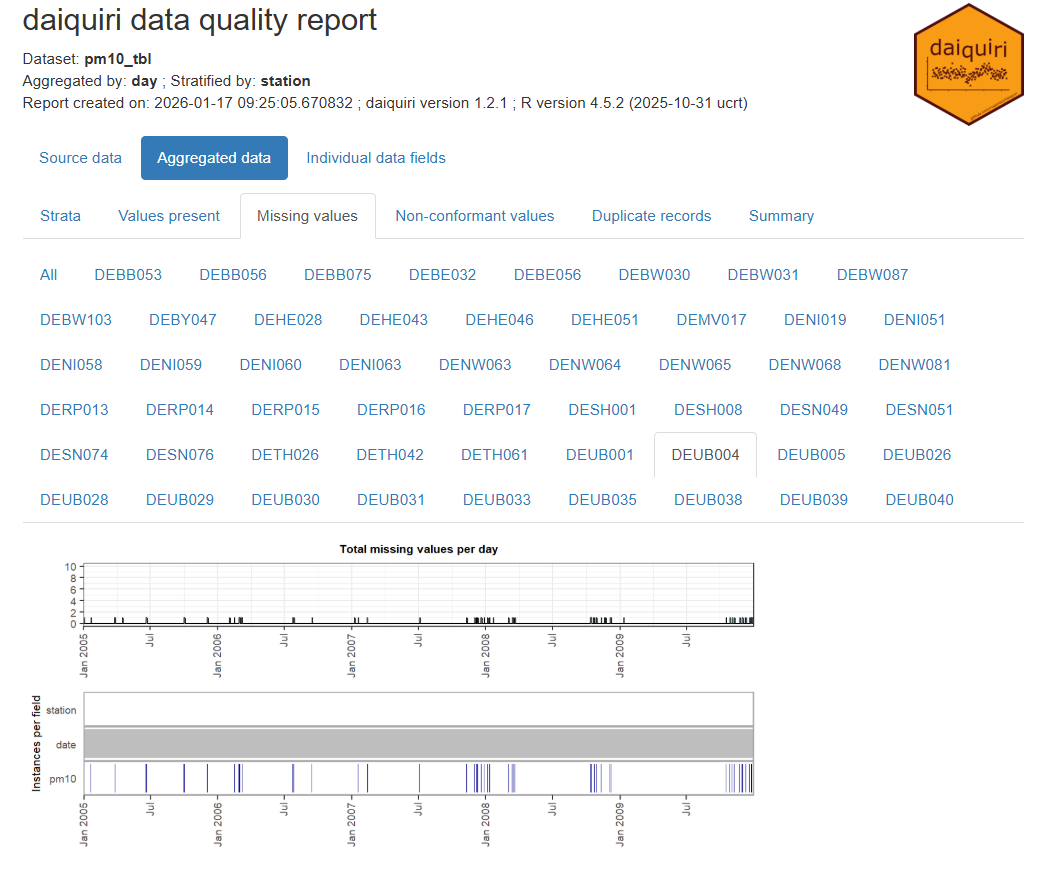

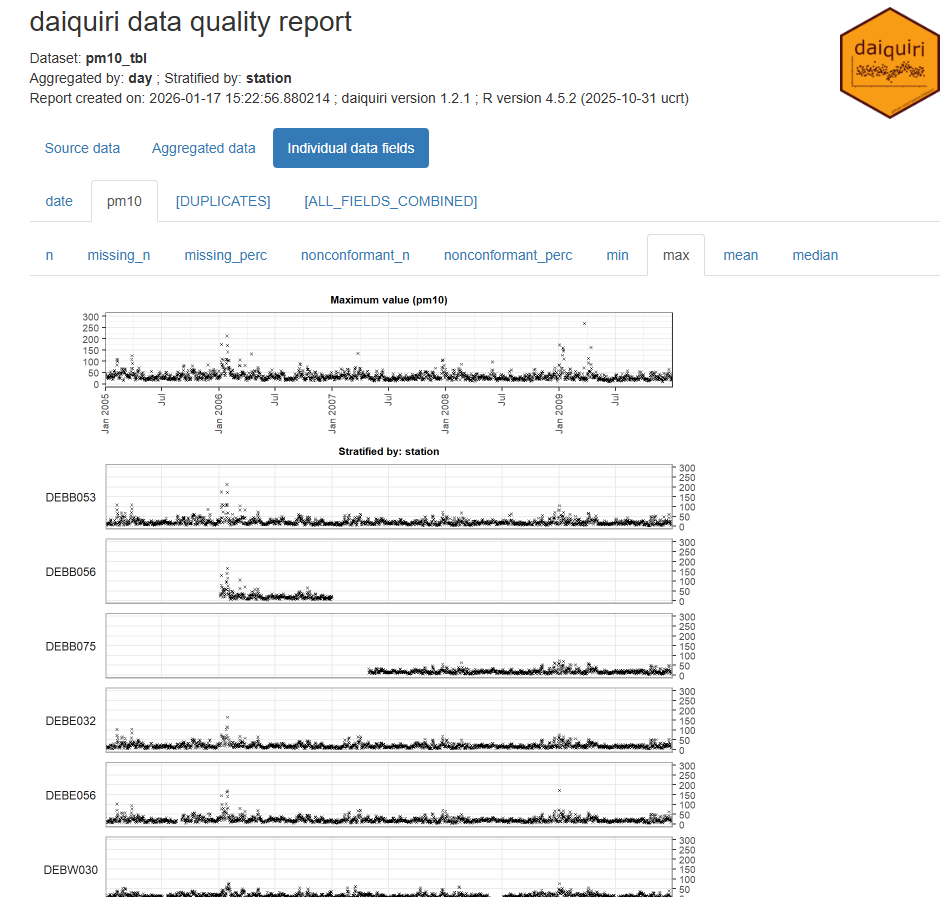

Example 6: {daiquiri}

Code

library(spacetime); library(dplyr); library(daiquiri) data(air) rural <- STFDF( sp = stations, time = dates, data = data.frame(PM10 = as.vector(air)) ) rr <- rural[,"2005::2010"] # remove station ts w/all NAs unsel <- which(apply( as(rr, "xts"), 2, function(x) all(is.na(x))) ) r5to10 <- rr[-unsel,] pm10_tbl <- as_tibble(as.data.frame(r5to10)) |> rename(station = sp.ID, date = time, pm10 = PM10) |> mutate(date = as.Date(date)) |> select(date, station, pm10) fts <- field_types( station = ft_strata(), date = ft_timepoint(includes_time = FALSE), pm10 = ft_numeric() ) daiq_pm10 <- daiquiri_report( pm10_tbl, fts )- Shows missingness and basic descriptive statistics over time for each station

- Note that if your data is daily and there’s only 1 measurement per day, then all these statistics will the same (duh, but one time I forgot and thought the package was broken)

- Shows missingness and basic descriptive statistics over time for each station

Temporal Dependence

Check characteristics of autocorrelation. Can the analysis be done in a purely spatial manner (a purely spatial model for each time step) or is adding a temporal aspect necessary?

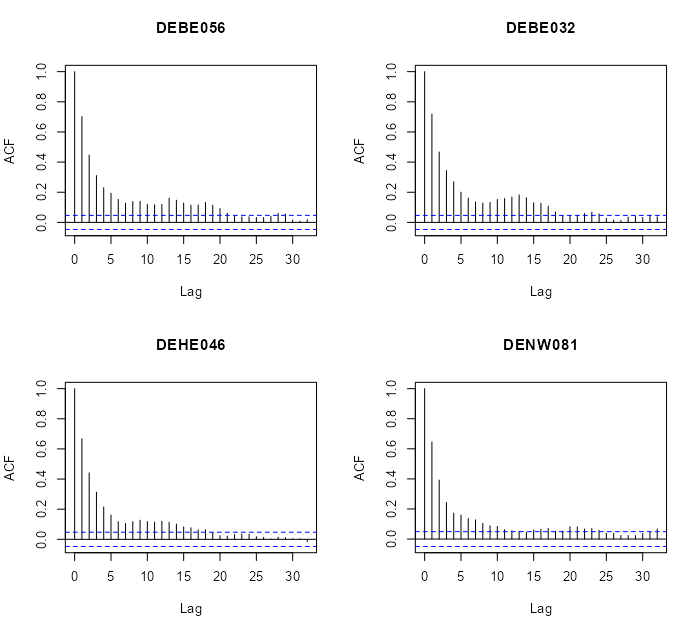

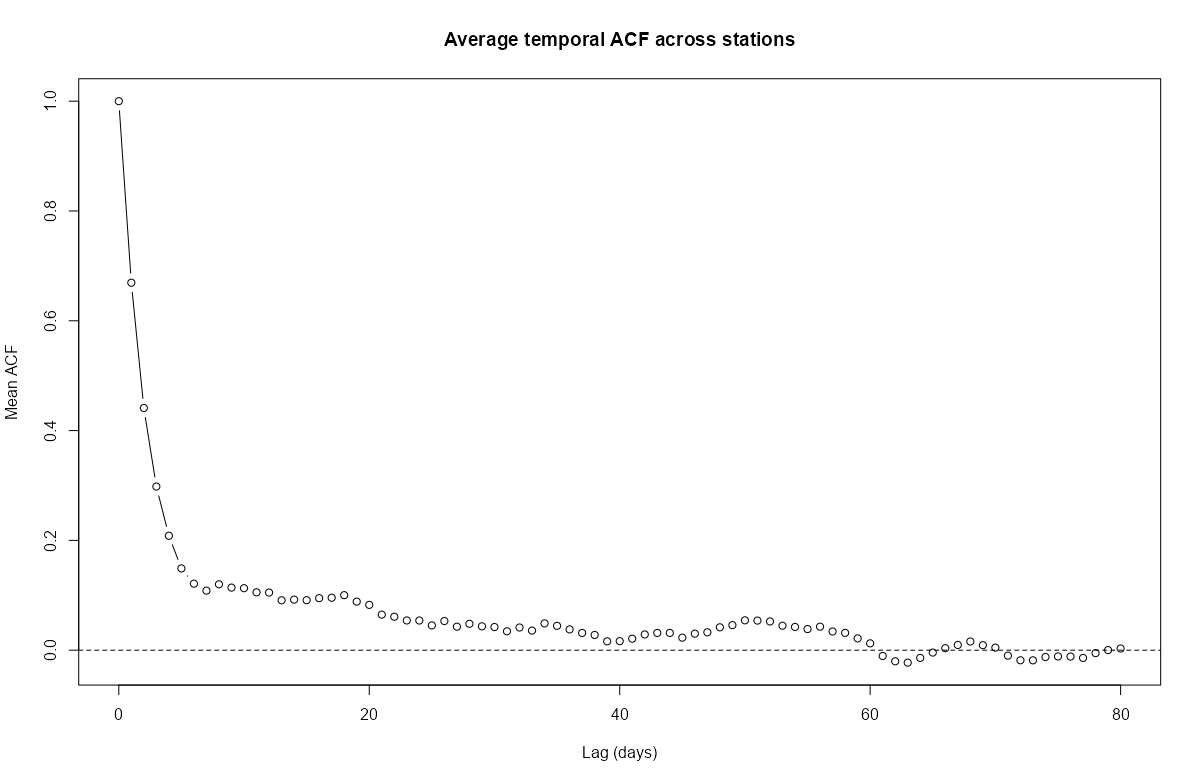

Autocorrelation Function (ACF)

- For each location, is there correlation between time \(t\) and lag \(t + h\)

- Does autocorrelation rapidly or gradually decrease?

- If gradually, then the series isn’t stationary and probably needs differencing (See EDA, Time Series >> Stationarity)

- Is there a scalloped shape to autocorrelation?

- If so, it might need seasonal differencing.

- At which time lag (max, avg) does autocorrelation become insignificant?

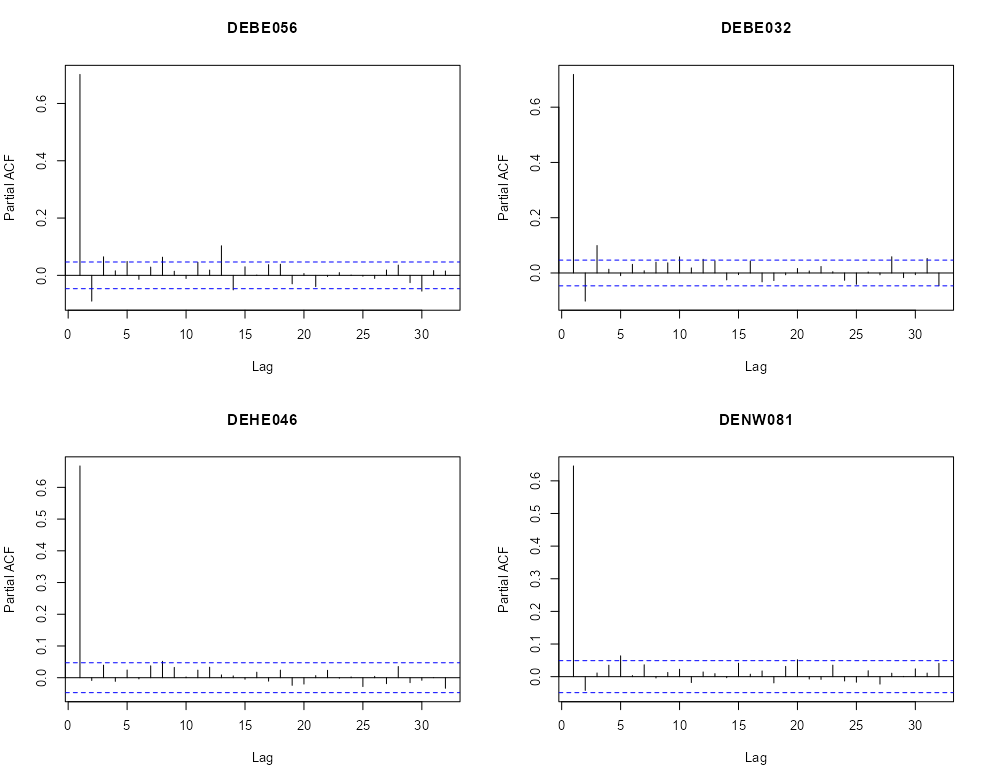

Partial Autocorrelation Function (PACF)

- Are there significant spikes in the PACF?

- Could indicate the frequency of the seasonality?

- Are there significant spikes in the PACF?

Cross-Correlations

- For each pair of locations, is there autocorrelation between location \(a\) at time \(t\) and location \(b\) at time \(t + h\)

- Cross-correlations can be asymmetric and they do not have to be 1 at lag 0 like autocorrelations

- If you’re looking at rainfall at two locations, strong correlation when upstream location A leads downstream location B by 2 hours, but weak correlation when B “leads” A, tells you about the direction of storm movement and typical travel time.

- The strength of the asymmetry itself (how different the two plots look) can indicate how strong or consistent these directional relationships are in your data.

- Potential Interpretations of Strong Asymmetry:

- Directional or causal relationships: If the cross-correlation is stronger when A leads B (positive lags in A vs B plot) than when B leads A, this suggests A might influence B more than the reverse. This doesn’t prove causation, but it’s often consistent with one variable driving changes in the other.

- Different response times: The asymmetry can reveal that one location responds to shared drivers faster than the other, or that the propagation time of some influence differs depending on direction.

- Lead-lag relationships: Strong asymmetry with a clear peak at a non-zero lag indicates one series systematically precedes the other, which can be important for forecasting or understanding the physical mechanisms at play.

- Is there an approximate distance between many pairs of locations at which autocorrelation is insignificant?

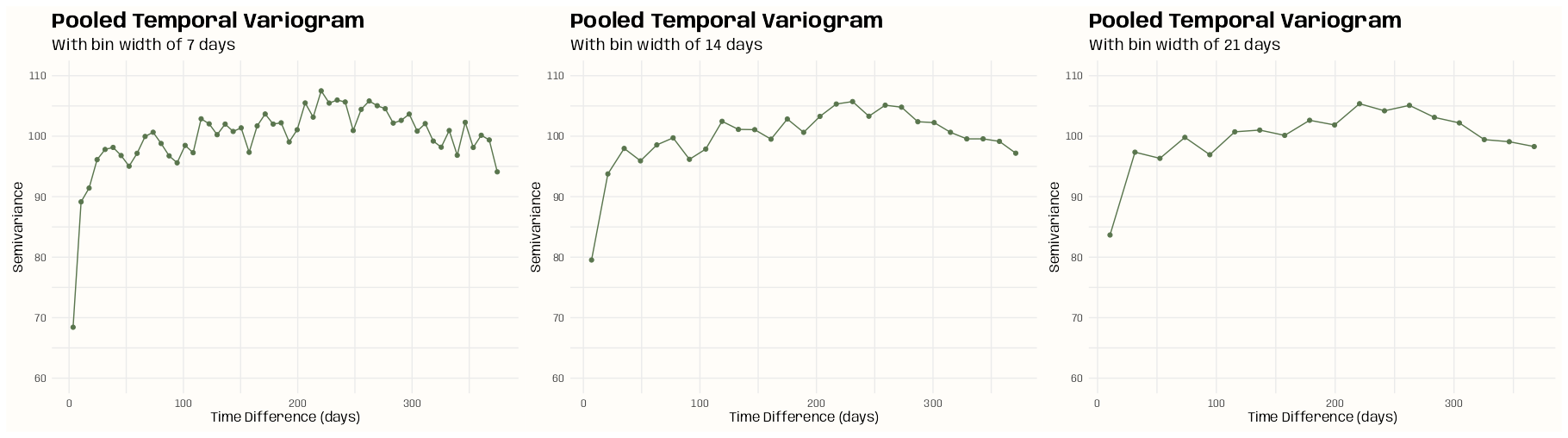

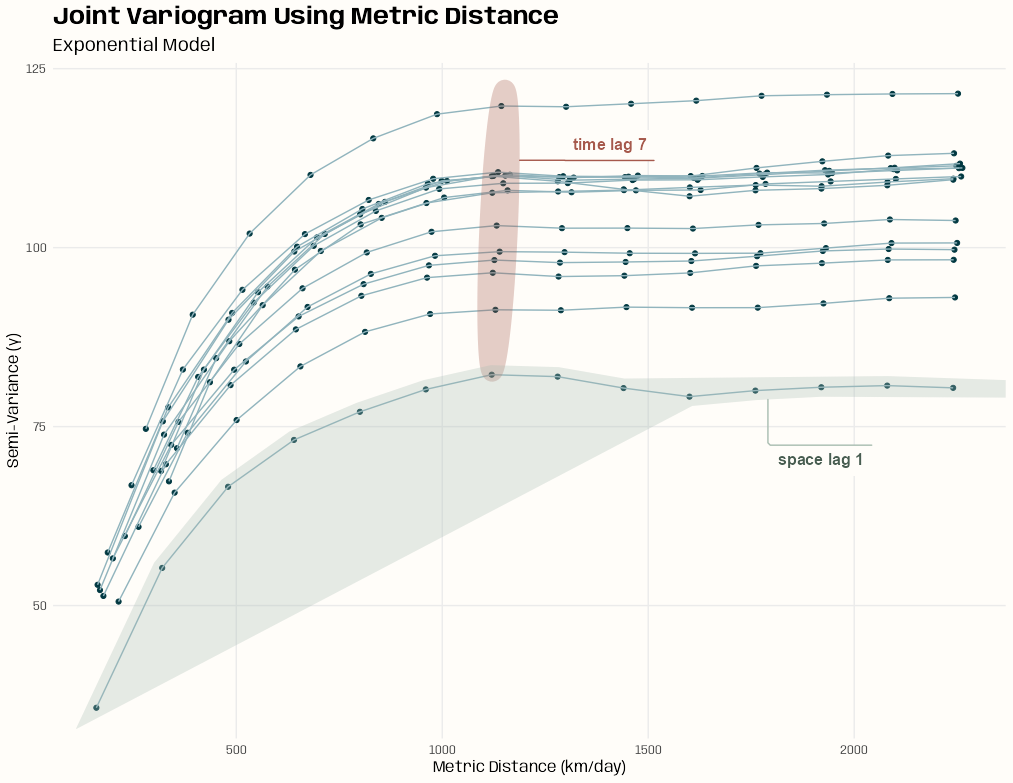

Pooled Temporal Variogram

A pooled temporal variogram is computed by holding space fixed and pooling squared temporal differences across spatial locations.

It can be used with spatio-temporal data to estimate the strength of temporal dependence of various time differences.

- We can also use to get reasonable starting values for the time portions of spatio-temporal variogram models (e.g. separable, metric, product-sum, etc.)

Let \(Y_{s,t}\) denote the observation at location \(s = 1,\dots,S\) and time \(t = 1,\dots,T\).

The pooled estimator is:

\[\hat{\gamma}(h_t) = \frac{1}{2 N_k(h_t)} \sum_{k=1}^{K} \sum_{s=1}^{S} \left( Y_{s,t} - Y_{s,t+u} \right)^2 \]

- \(h_t\) is a temporal bin

- \(N_k(h_t)\) is the number of valid (not NA) time difference pairs in that temporal bin

- \(K\) is the number of time difference pairs

- \(S\) is number of spatial locations

- \(u\) is temporal separation

Example 1: PM10 Pollution ({gstat} vignette, section 2)

Set-Up

pacman::p_load( spacetime, stars, sf, dplyr, ggplot2 ) data(air) # get rural location time series from 2005 to 2010 rural_log <- STFDF( sp = stations, time = dates, data = data.frame(PM10 = log(as.vector(air))) ) rr <- rural_log[,"2005::2010"] # remove station ts w/all NAs unsel <- which(apply( as(rr, "xts"), 2, function(x) all(is.na(x))) ) r5to10 <- rr[-unsel,]- r5to10 is a STFDF (full grid) object

r5to10@spis a SpatialPoints ({sp}) class object with location names and coordinates

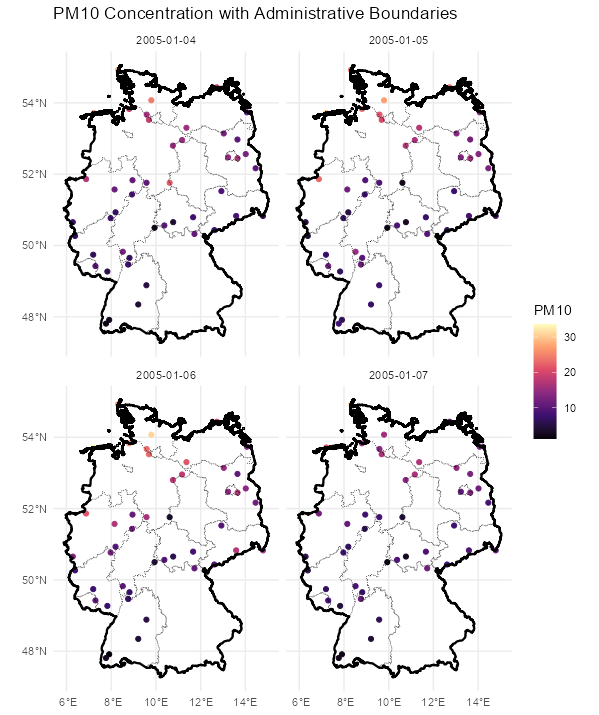

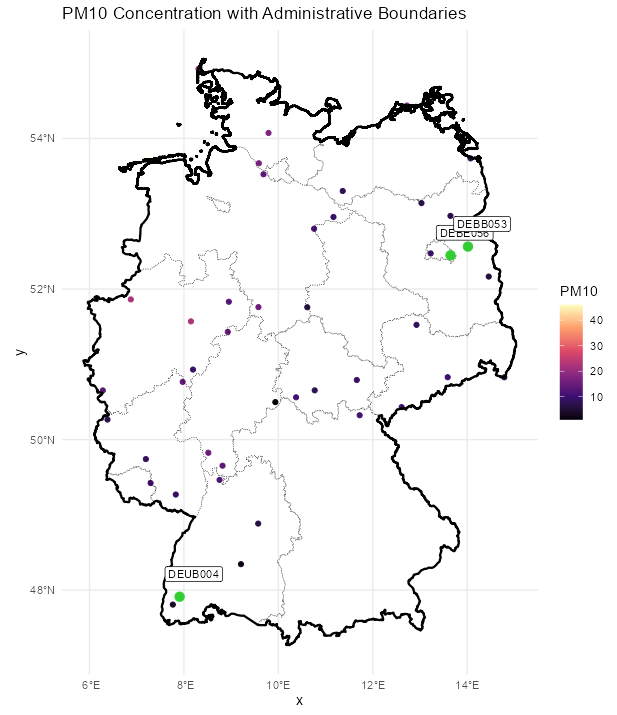

Map

Code

stars_r5to10 <- st_as_stars(r5to10) # stars has dplyr methods plot_slice <- slice(stars_r5to10, index = 3, # 3rd time step, so a location doesn't have NA along = "time") # country boundary de_sf <- st_as_sf(DE) # states (provinces) boundaries nuts1_sf <- st_as_sf(DE_NUTS1) ggplot() + # location points geom_stars( data = plot_slice, aes(color = PM10), fill = NA) + scale_color_viridis_c( option = "magma", na.value = "transparent") + # states geom_sf( data = nuts1_sf, fill = NA, color = "black", size = 0.2, linetype = "dotted") + # country geom_sf( data = de_sf, fill = NA, color = "black", size = 0.8) + # Ensures coordinates align correctly coord_sf() + labs( title = "PM10 Concentration with Administrative Boundaries", fill = "PM10" ) + theme_minimal() # facet multiple time steps plot_slices <- slice(stars_r5to10, index = 4:7, along = "time") plot_slices_sf <- st_as_sf(plot_slices, as_points = TRUE) plot_slices_long <- plot_slices_sf |> pivot_longer(cols = !sfc, names_to = "time", values_to = "PM10") ggplot() + geom_sf( data = plot_slices_long, aes(color = PM10)) + scale_color_viridis_c( option = "magma", na.value = "transparent") + geom_sf( data = nuts1_sf, fill = NA, color = "black", size = 0.2, linetype = "dotted") + geom_sf( data = de_sf, fill = NA, color = "black", size = 0.8) + facet_wrap(~time) + coord_sf() + labs( title = "PM10 Concentration with Administrative Boundaries", color = "PM10" ) + theme_minimal()Average ACF per Location

# ACF for each station mat_acf <- apply( as(r5to10, "xts"), # coerce to matrix of time series columns 2, function(x) { acf( x, na.action = na.pass, plot = FALSE, lag.max = 80)$acf } ) # locations are rows acf_mean <- rowMeans(mat_acf, na.rm = TRUE) plot( 0:80, # 80 days acf_mean, type = "b", # points and lines xlab = "Lag (days)", ylab = "Mean ACF", main = "Average temporal ACF across stations" ) abline(h = 0, lty = 2)- The average autocorrelation gets close to zero around 40 days and then crosses zero at around 60 days. After 60 days, there’s only a little noisy oscillation around zero.

Specific Locations

# select locations 4, 5, 6, 7 rn = row.names(r5to10@sp)[4:7] par(mfrow=c(2,2)) for (i in rn) { x <- na.omit(r5to10[i,]$PM10) acf(x, main = i) }- There does seem to be a gradual-ness and scalloped pattern with these locations.

- Looks like at around lag 20, autocorrelation is not significant for most of these locations

par(mfrow=c(2,2)) for (i in rn) { x <- na.omit(r5to10[i,]$PM10) pacf(x, main = i) }- We see one or two significant spikes at each location, but nothing that would indicate a consistent seasonality among the locations.

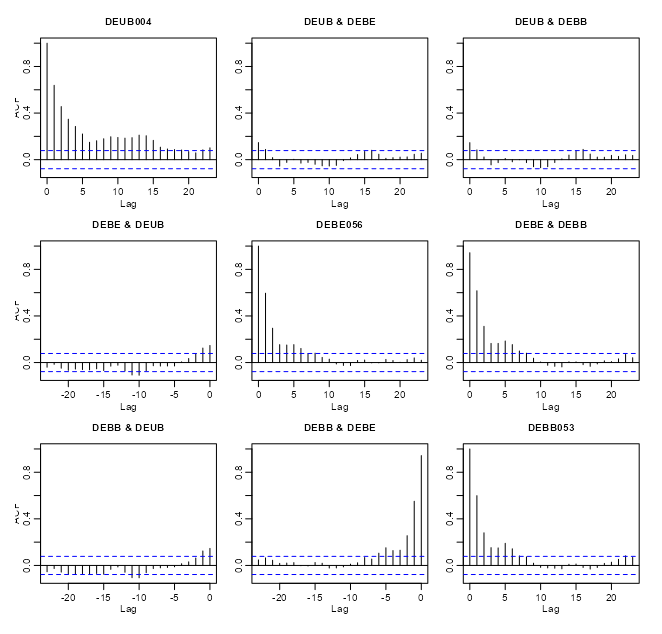

# coerce to xts class xts_pm10 <- as(r5to10, "xts") # extract numeric cols mat_pm10 <- zoo::coredata(xts_pm10) # find and remove cols with NAs > 20% na_cols <- colnames(mat_pm10)[colMeans(is.na(mat_pm10)) > 0.20] mat_pm10_nacols <- mat_pm10[, !colnames(mat_pm10) %in% na_cols] mat_pm10_clean <- na.omit(mat_pm10_nacols) # all data acf(mat_pm10_clean) # vs "DEUB004" no_ac_locs <- c("DEUB004", "DEBE056", "DEBB053") mat_pm10_naac <- mat_pm10_clean[, no_ac_locs] acf(mat_pm10_naac)- r5to10 is a STFDF (full grid) object. It’s coerced, using

as, into a xts object. Since it’s now a multivariate time series object,acfwill give you cross-correlations. - From the acf of all the data, there were a couple interesting locations that had very little cross-correlation between them.

- When running

acffor a fairly large number of locations there will be a large number of charts (due to all the combinations). After one chart is rendered, in the R console, it’ll ask you to hit enter before it will render the next chart.- Note that within the cross-correlation charts, the titles of some of the location names get cut off.

If there is no or little autocorrelation between a pair of locations, is it largely because there’s a large distance between those locations? Maybe this can give a hint about the appropriate neighborhood size or area

Distance Matrix

# select low cross-corr locations and random locations # indices for the low cross-corr locations no_ac_idx <- which(row.names(r5to10@sp) %in% no_ac_locs) # indices for the column names (locations) of the cleaned matrix mat_clean_idx <- which(row.names(r5to10@sp) %in% colnames(mat_pm10_clean)) # sample 5 location indices that aren't low cross-corr locations mat_clean_idx_samp <- sort(sample(mat_clean_idx[-no_ac_idx], 5)) # combine sample indices and low cross-corr indices mat_clean_idx_comb <- c(no_ac_idx, mat_clean_idx_samp) # convert the SpatialPoints (sp) to sf sf_locs <- st_as_sf(r5to10[mat_clean_idx_comb,]@sp) # pairwise distances (in meters for geographic coordinates) mat_dist_sf <- st_distance(sf_locs) # convert to kilometers mat_dist_sf_km <- round(units::set_units(mat_dist_sf, km), 2) colnames(mat_dist_sf_km) <- row.names(r5to10[mat_clean_idx_comb,]@sp) rownames(mat_dist_sf_km) <- row.names(r5to10[mat_clean_idx_comb,]@sp) mat_dist_sf_km #> Units: [km] #> DEBE056 DEBB053 DEUB004 DEBE056 DETH026 DEUB004 DEBW031 DENW068 #> DEBE056 0.00 28.07 648.60 235.43 357.82 541.62 198.99 557.63 #> DEBB053 28.07 0.00 674.84 256.26 374.67 569.64 221.21 585.50 #> DEUB004 648.60 674.84 0.00 441.77 660.90 209.86 579.71 173.85 #> DEBE056 235.43 256.26 441.77 0.00 458.62 393.98 292.91 396.71 #> DETH026 357.82 374.67 660.90 458.62 0.00 467.91 173.13 500.94 #> DEUB004 541.62 569.64 209.86 393.98 467.91 0.00 420.84 36.08 #> DEBW031 198.99 221.21 579.71 292.91 173.13 420.84 0.00 446.54 #> DENW068 557.63 585.50 173.85 396.71 500.94 36.08 446.54 0.00 # using sp mat_dist_sp <- round(sp::spDists(r5to10[mat_clean_idx_comb,]@sp), 2) colnames(mat_dist_sp) <- row.names(r5to10[mat_clean_idx_comb,]@sp) rownames(mat_dist_sp) <- row.names(r5to10[mat_clean_idx_comb,]@sp)- The 3rd column (DEUB004) and first two rows (DEBE056, DEBB053) shows the distances between the low cross-correlation locations. The other locations are included for context.

- The distances for those low cross-correlations location pairs do seem to be quite a bit larger than the other 5 randomly sampled locations when paired with DEUB004. So the low cross-correlations are likely due to larger spatial distances between the locations.

- Might be interesting to check the cross-correlation between DEUB004 and DETH026 and maybe DEBW031 just to see how low those cross-correlations are in comparison.

- The {sp} and {sf} numbers are slightly different, because {sp} uses spherical and {sf} uses elliptical distances. The elliptical should be more accurate, but either should be fine for most applications (fraction of a percentage difference)

Map

Code

labeled_points <- st_as_sf(plot_slice, as_points = TRUE) |> mutate(station = rownames(r5to10@sp@coords)) |> filter(station %in% c("DEUB004", "DEBE056", "DEBB053")) ggplot() + # location points geom_stars( data = plot_slice, aes(color = PM10), fill = NA) + scale_color_viridis_c( option = "magma", na.value = "transparent") + # states geom_sf( data = nuts1_sf, fill = NA, color = "black", size = 0.2, linetype = "dotted") + # country geom_sf( data = de_sf, fill = NA, color = "black", size = 0.8) + # Ensures coordinates align correctly coord_sf() + # color labelled points differently geom_sf( data = labeled_points, color = "limegreen", size = 3, shape = 21, fill = "limegreen") + geom_sf_label( data = labeled_points, aes(label = station), nudge_x = 0.3, nudge_y = 0.3, size = 3) + labs( title = "PM10 Concentration with Administrative Boundaries", fill = "PM10") + theme_minimal()- Pretty much on the other side of the country, but there are locations further away. Again, it’d be interesting to look at the cross-correlations between some of the pairs of locations that are just as far or farther away.

Example 2: Pooled Temporal Variogram

This is the PM10 air pollution dataset for Germany that’s from {spacetime} and used in many of my examples.

The goal is to find starting values for temporal

vgmportions of spatio-temporal variogram models.pacman::p_load( ebtools, # pooled_temporal_variogram ggplot2, patchwork, gstat ) data(air, package = "spacetime") keep_cols <- dates >= as.Date("2005-01-01") & dates <= as.Date("2010-12-31") air_sub <- air[, keep_cols] # Remove locations with all NAs keep_rows <- rowSums(!is.na(air_sub)) > 0 air_sub <- air_sub[keep_rows, ] # add dates as column names keep_dates <- as.character(dates[keep_cols]) colnames(air_sub) <- keep_dates air_sub_log <- log(air_sub)pooled_temporal_variogramis from my personal package and requires a space-time matrix with date or datetime strings as column names.

ls_vario_time <- purrr::map( c(7, 14, 21), # 1wk, 2wks, 3wks \(x) { pooled_temporal_variogram( Y = air_sub_log, bin_width = x, max_time_diff = 375 # slightly more than a year in case there's noise ) } ) ls_plots <- purrr::map2( ls_vario_time, c(7, 14, 21), \(vario_obj, binwidth) { ggplot(vario_obj, aes(x = dist, y = gamma)) + geom_point(color = "#59754D") + geom_line(color = "#59754D") + expand_limits(y = 0:0.35) + labs( x = "Time Difference (days)", y = "Semivariance", title = "Pooled Temporal Variogram", subtitle = glue::glue("With bin width of {binwidth} days") ) + theme_notebook() + theme(panel.grid.minor = element_line()) } ) ls_plots[[1]] + ls_plots[[2]] + ls_plots[[3]]- The rapid rise and leveling indicates short-term temporal dependence

- The bump could indicate some seasonality. It begins at around 200 days and lasts until around 300 days.

- The (temporal) variogram assumes stationarity. So, if strong enough, it could substantially bias the estimation.

- If there is seasonality, some seasonal differencing may be useful

- Internally subtract location-wise mean or seasonal mean (rows in the matrix)

- The dip after ~275 days suggests one of:

- Finite sample noise; i.e. not enough pairs

- Seasonality (annual structure)

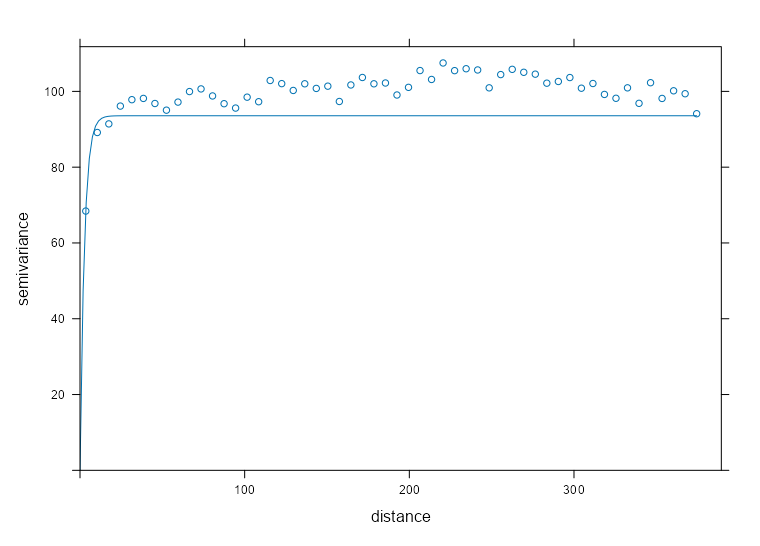

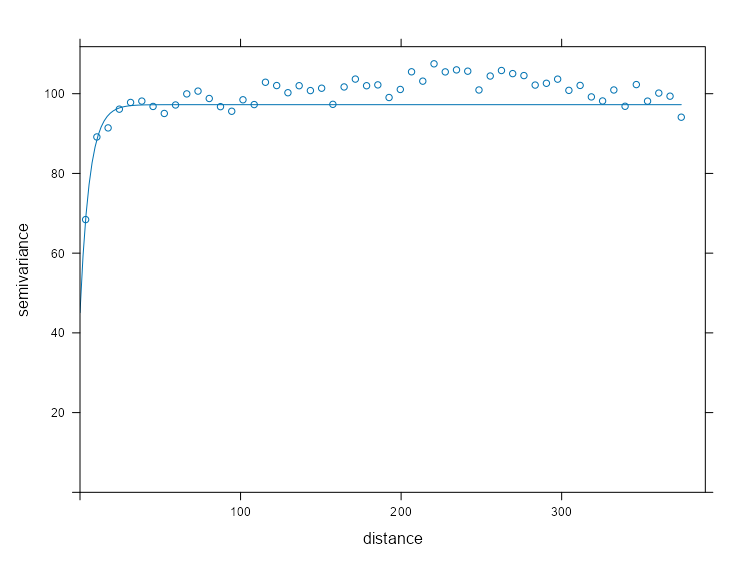

Without Nugget

mod_vario_time_exp <- fit.variogram( object = ls_vario_time[[1]], model = vgm( model = "Exp", psill = 0.30, # units range = 120 # days ) ) mod_vario_time_exp #> model psill range #> 1 Exp 0.2934582 2.590029 plot( x = ls_vario_time[[1]], mod_vario_time_exp ) mod_vario_time_sph <- fit.variogram( object = ls_vario_time[[1]], model = vgm( model = "Sph", psill = 0.30, # units range = 120 # days ) ) mod_vario_time_sph #> model psill range #> 1 Sph 0.2903385 6.27746 plot( x = ls_vario_time[[1]], mod_vario_time_sph ) mod_vario_time_mat <- fit.variogram( object = ls_vario_time[[1]], model = vgm( model = "Mat", psill = 0.30, # units range = 120, # days kappa = 0.12 ) ) mod_vario_time_mat #> model psill range kappa #> 1 Mat 0.3125646 13.20735 0.12 plot( x = ls_vario_time[[1]], mod_vario_time_mat )- Both exponential and spherical clearly undershoot the plateau, so matern is the choice here.

- For the matern model, the default kappa is 0.5 which made the curve look closer to the exponential and spherical results.

- For the exponential model, I tried an initial range of 200 and it didn’t converge. When you see the model’s estimate, you can see why. Both exponential and spherical have single digit ranges and the matern is around 14. I was off in my guess pretty badly.

- I used the finer bin width (7 days) variogram, because there are still plenty of time difference pairs for semivariance estimation and there’s no danger of overfitting these models.

- Note that we have many more time points (and therefore time difference pairs per bin) as compared to spatial locations in the pooled spatial variogram case (example in next section), so smaller bin widths aren’t an issue.

- There are many models avaiable in gstat, but a lot are for specialized situations and the rest errored or didn’t converge. I didn’t investigate why some errored or didn’t converge, so I just ended up with these three.

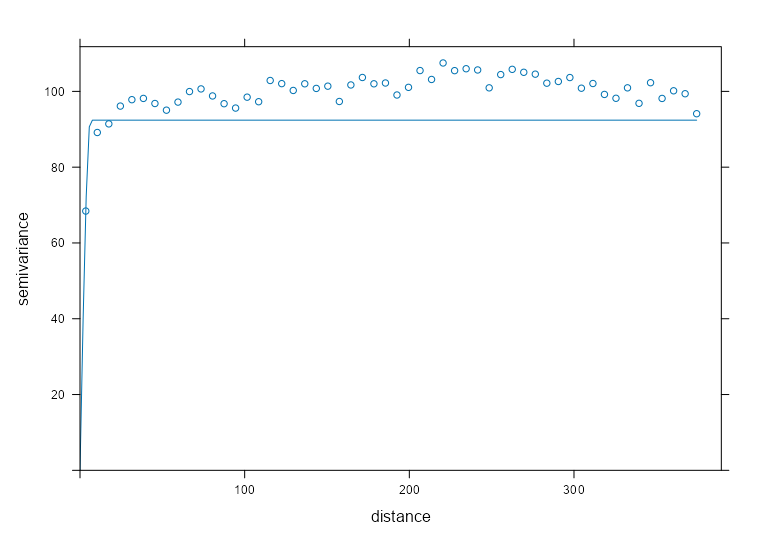

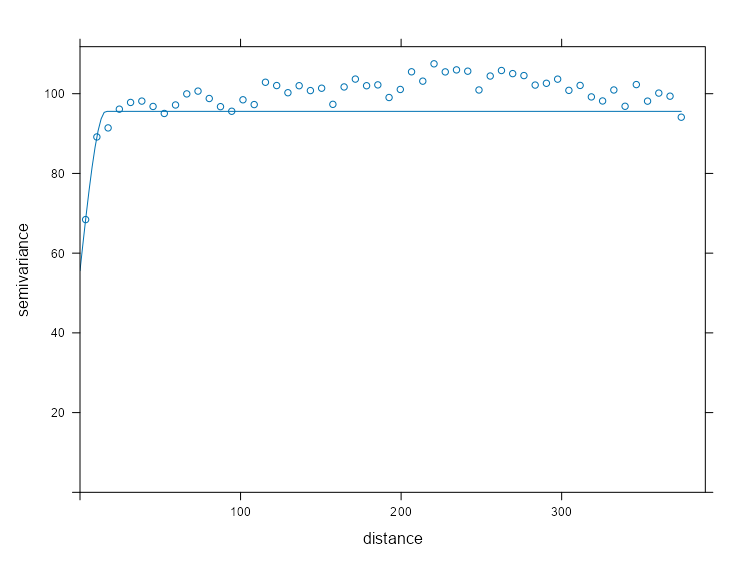

With Nugget

mod_vario_time_exp_nug <- fit.variogram( object = ls_vario_time[[1]], model = vgm( model = "Exp", psill = 0.20, # units range = 40, # days nugget = 0.10 ) ) mod_vario_time_exp_nug #> model psill range #> 1 Nug 0.1401896 0.000000 #> 2 Exp 0.1628611 5.416927 plot( x = ls_vario_time[[1]], mod_vario_time_exp_nug, ) mod_vario_time_sph_nug <- fit.variogram( object = ls_vario_time[[1]], model = vgm( model = "Sph", psill = 0.20, # units range = 40, # days nugget = 0.10 ) ) mod_vario_time_sph_nug #> model psill range #> 1 Nug 0.1769656 0.00000 #> 2 Sph 0.1215215 15.40921 plot( x = ls_vario_time[[1]], mod_vario_time_sph_nug ) mod_vario_time_mat_nug <- fit.variogram( object = ls_vario_time[[1]], model = vgm( model = "Mat", psill = 0.20, # units range = 40, # days kappa = 0.12, nugget = 0.10 ) ) mod_vario_time_mat_nug #> model psill range kappa #> 1 Nug 0.0000000 0.00000 0.00 #> 2 Mat 0.3125647 13.20737 0.12 plot( x = ls_vario_time[[1]], mod_vario_time_mat_nug )- Adding the nugget leads to a little better fit for the exponential and spherical models, but not enough to overtake the matern.

- The matern didn’t want the nugget when offered. Therefore, we have a better nugget-sill ratio which potentially means better kriging results, but a zero nugget doesn’t sound too realistic in this case (i.e. no microscale variation or measurement erro).

Spatial Dependence

Is there meaningful spatial correlation (relative to noise)?

- Spatio-temporal variograms can appear structured even when spatial dependence is negligible—especially when temporal correlation is strong. So, we need to test whether spatial dependence exits independently.

- If there were no spatial correlation, then for almost all spatial lags (i.e. pure nugget behavior), semi-variance is constant.

- With spatial correlation, there should be a monotonic increase as distance increases which eventually plateaus.

- Tells us that spatial dependence is not drowned out by temporal variability

On what distance scale does spatial correlation operate?

- At what distance does the variogram level off?

- Realize the empirical range (calculated in the variogram model) and the practical range (using your eyes) and the effective range (where spatial semi-variance is higher than some threshold) are different things.

- In general does this distance suggest a State-wide spatial dependence? County-wide? Region-wide? etc.

- At what distance does the variogram level off?

Is the spatial structure smooth or erratic?

- Can one curve fit the data well or are there groups of bins that stand out as not being fit well by the model curve.

- Does monotonicity of the bins fail? (i.e. a bin at greater distance than another but having a smaller semi-variance)

- Try spatial detrending (e.g. regress on coordinates, covariates) or temporal detrending can restore a monotone variogram.

- If variance or mean changes over time and you pool time slices, then differencing in time (or demeaning by time) can stabilize variance. Then, the short-lag spatial structure can become clearer.

Is fitting a full spatio-temporal variogram likely to succeed?

- Flat variogram → Don’t bother with spatial-temporal modeling when there’s no need for even spatial modeling.

- Extremely short range → Metric models likely degenerate.

- Wild instability → Spatio-Temporal fitting will be numerically meaningless. (too much noise → too much uncertainty → predictions essentially meaningless)

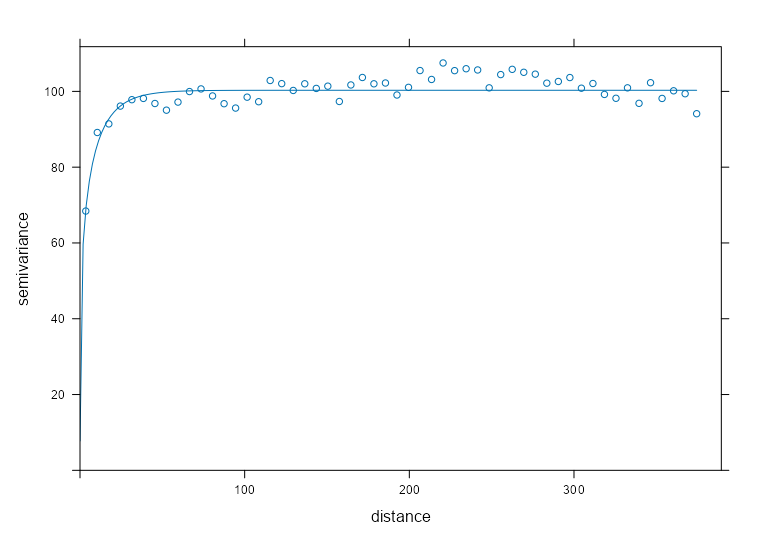

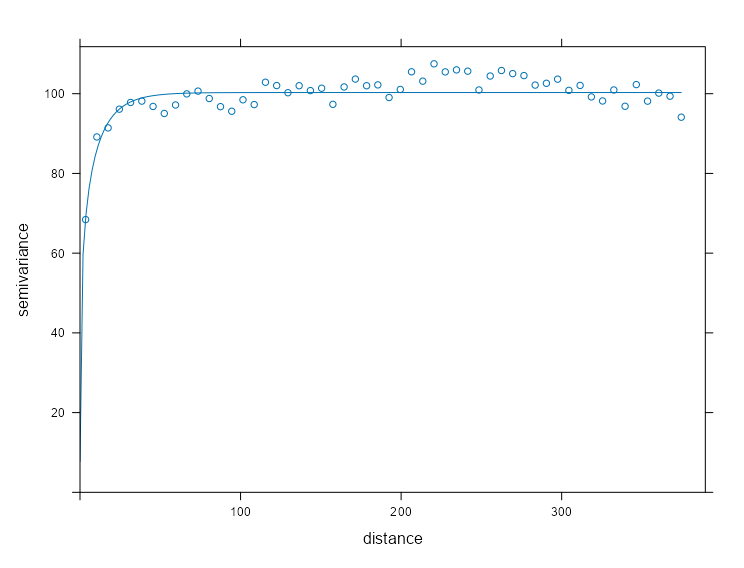

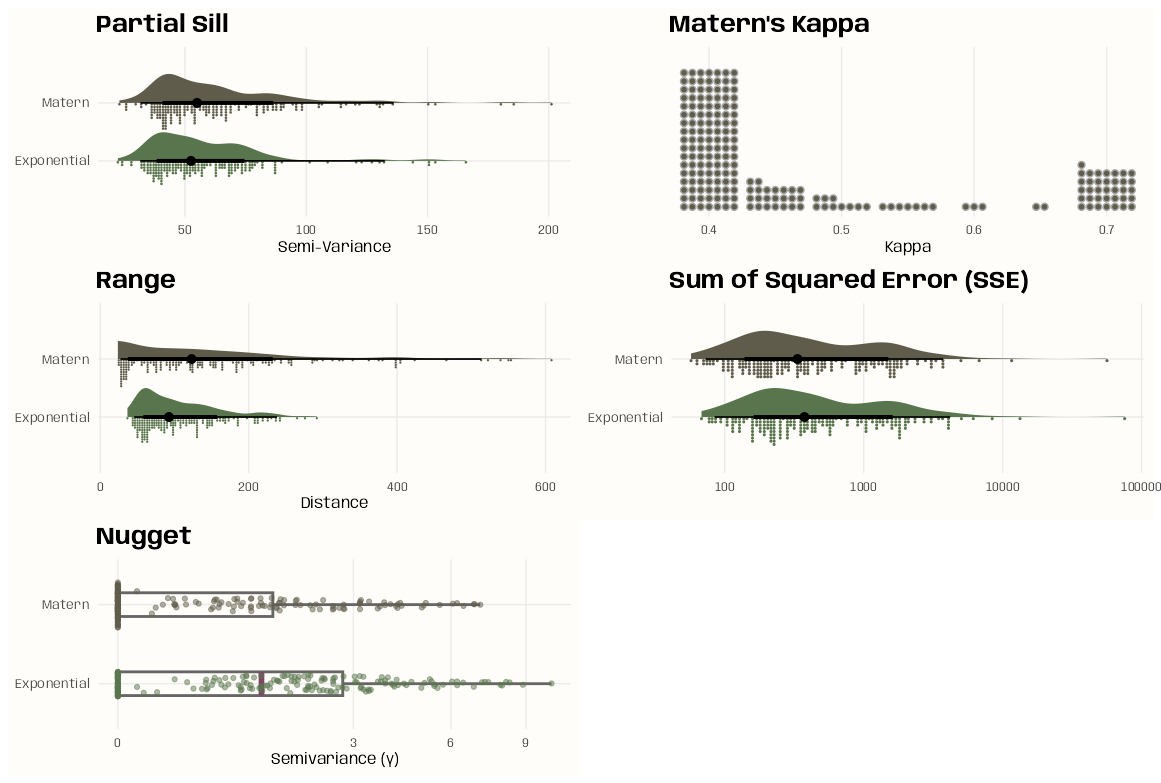

Example: Pooled Spatial Variogram

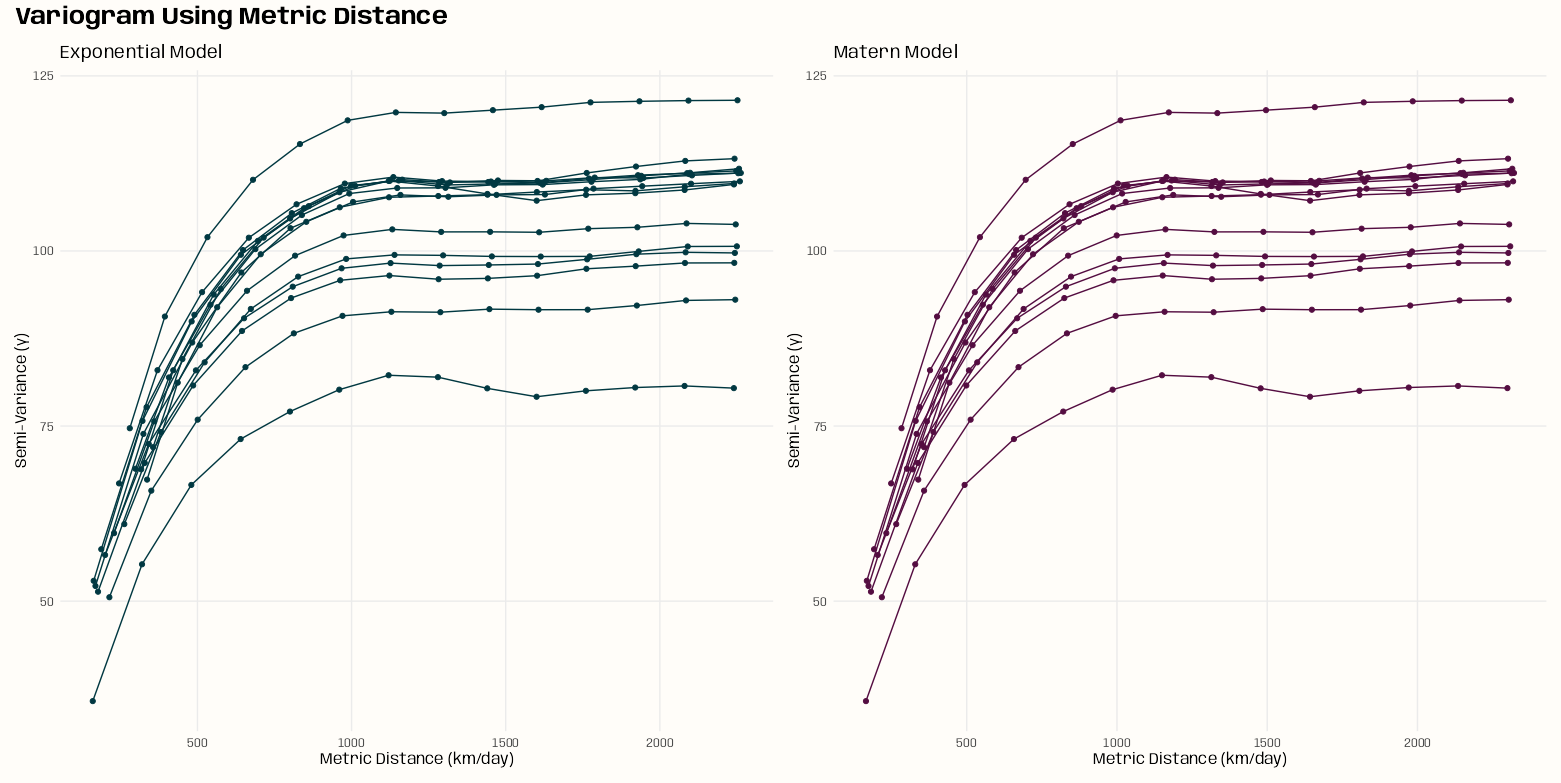

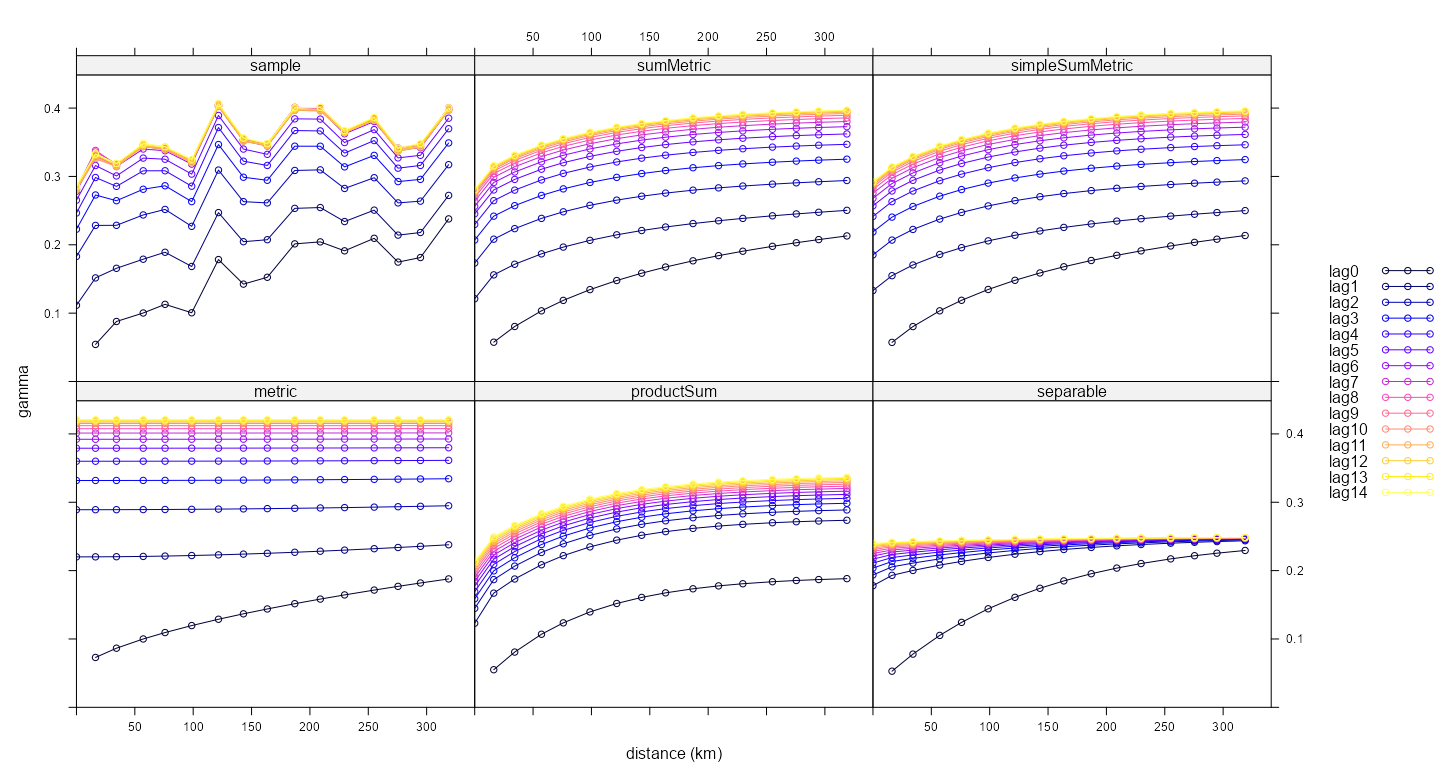

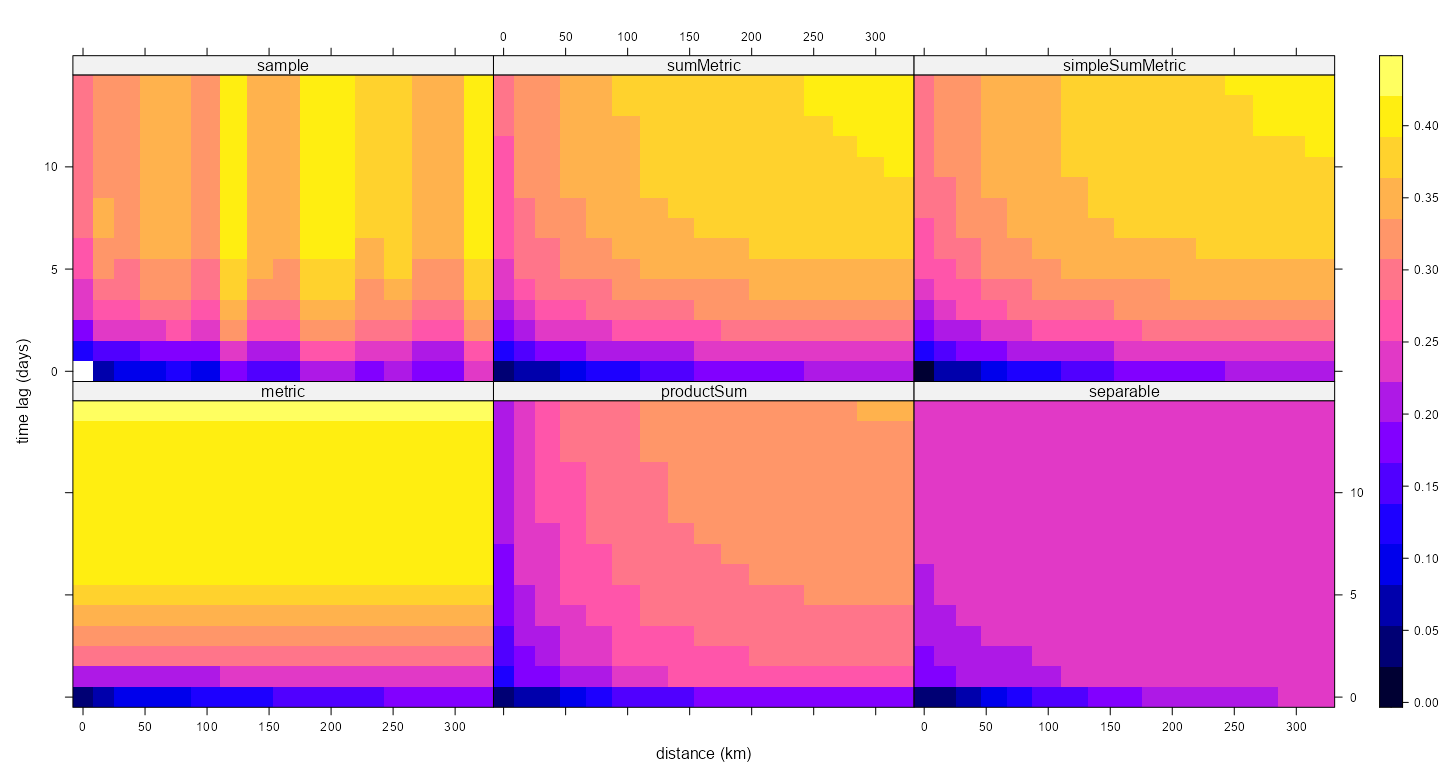

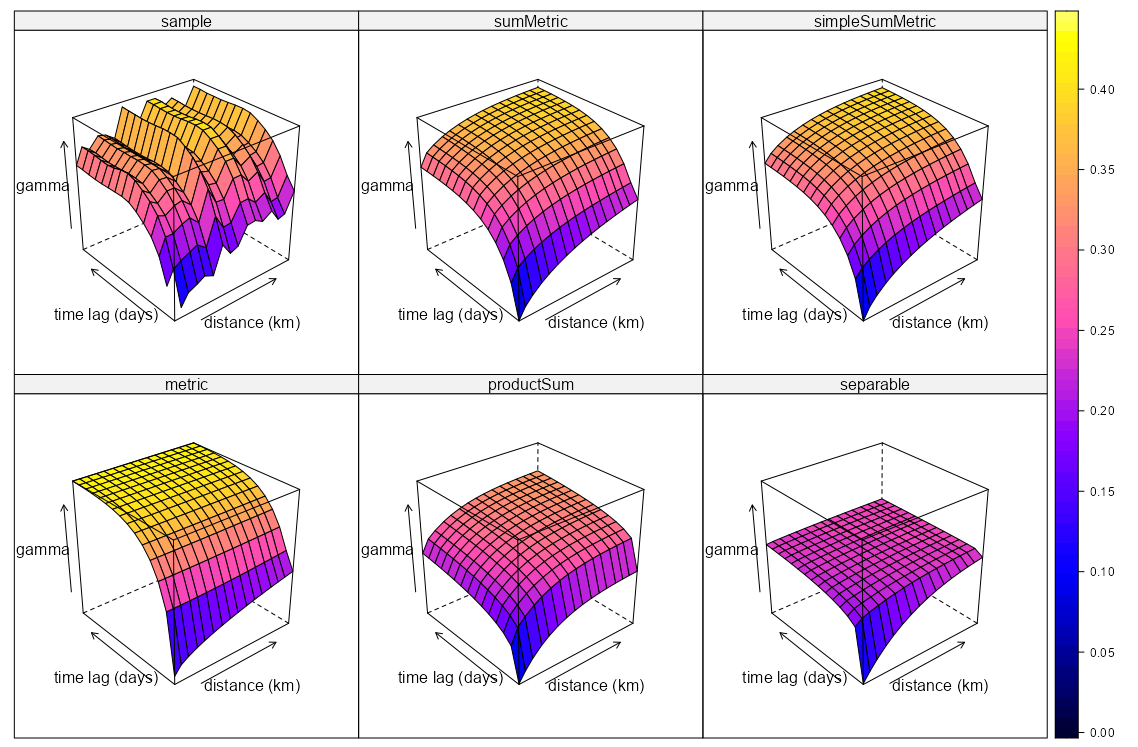

- The data, r5to10, is PM10 measurements across 53 measurement stations in Germany.

- Pooled spatial variograms are analyzed. Semivariance is computed between station pairs within each time slice, and then those within-slice spatial relationships are pooled across slices. The partial sill and range estimates can be used as starting values in a spatio-temporal variogram..

- Bootstrap distributions for the partial sill and range are calculated. These could give us a potential range of starting values if there are fitting issues with the spatio-temporal variogram.

- Takes around 3hrs and 30 min on 10 workers

- This example is from the {gstat} vignette (Sect. 2.2) which they seem to have specified the model incorrectly given the package documentation. The vignette uses dX = 0 which I can only guess is because they thought dX is less than equal to the 2-norm when it’s actually strictly less than (See Geospatial, Kriging >> Variograms >> Example). Consequently, they produced a bad fit.

- The correct specification dX = 0.5 with a factored time variable produced good fits for all models.

pacman::p_load( dplyr, ggplot2, gstat, futurize ) data(air, package = "spacetime") rural_log <- spacetime::STFDF( sp = stations, time = dates, data = data.frame(PM10 = log(as.vector(air))) ) rr <- rural_log[, "2005::2010"] unsel <- which(apply( as(rr, "xts"), 2, function(x) all(is.na(x)) )) r5to10 <- rr[-unsel, ] # sample 200 time instances (days) set.seed(2026) vec_time <- sample( x = dim(r5to10)[2], size = 200 ) ls_samp <- lapply( vec_time, function(i) { x = r5to10[,i] x$time_index = i rownames(x$coords) = NULL x } ) spdf_samp <- do.call( what = rbind, args = ls_samp )- Unlike the vignette, I’ve logged the PM10 variable.

- 200 time instances (days) are sampled

- For each time instance, the STFDF is subsetted by that day. Then that day is added to a new variable, time_index.

- Each element of ls_samp is a SpatialPointsDataFrame and

rbindcombines the elements into one SpatialPointsDataFrame

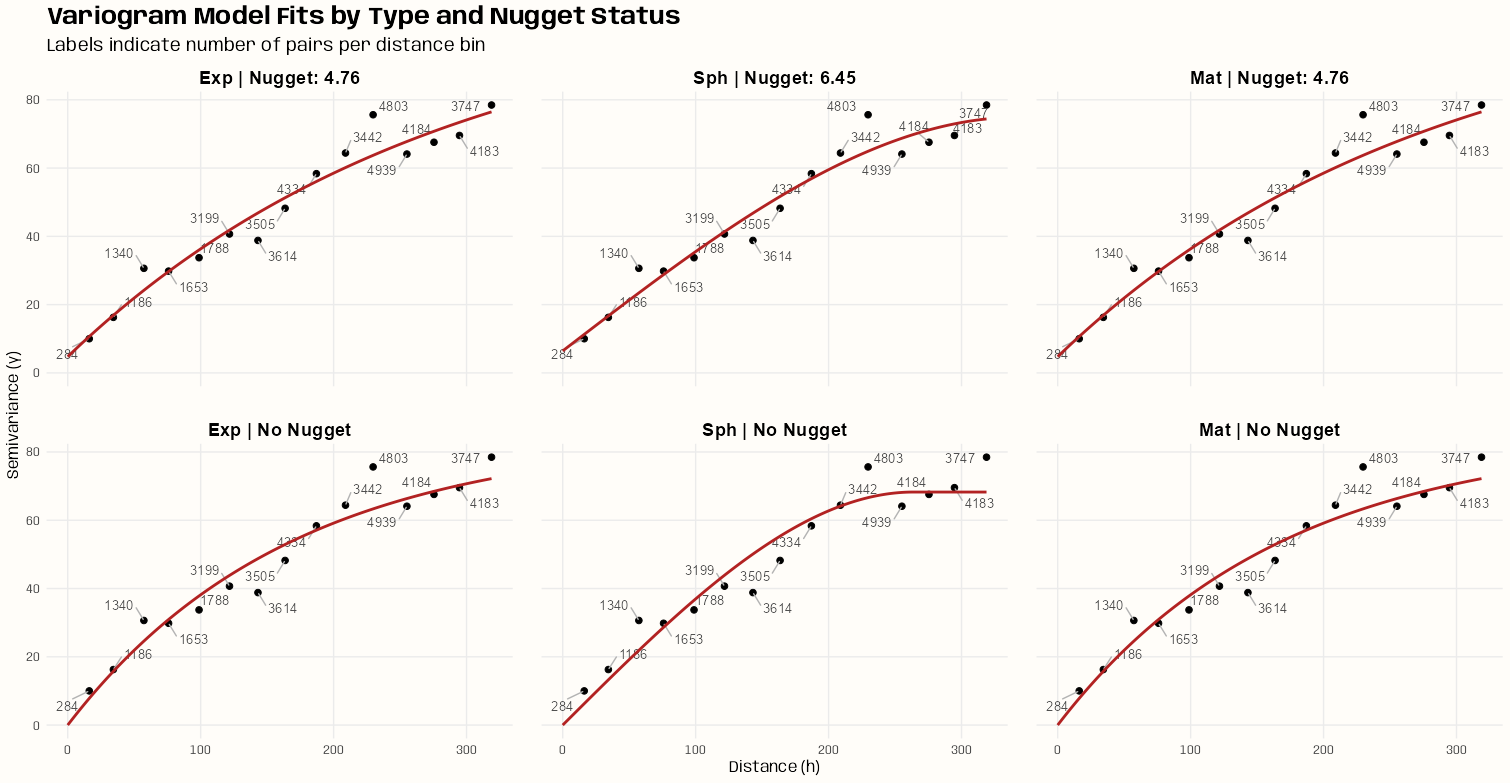

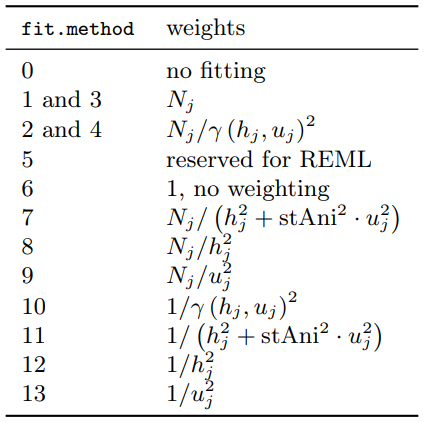

Fit the Models

spdf_samp$time_index <- as.factor(spdf_samp$time_index) vario_samp <- variogram( object = PM10 ~ time_index, locations = spdf_samp[!is.na(spdf_samp$PM10),], dX = 0.5 # select pairs only within same factor level (time instance) ) fit_vario_mod <- function( mod_type, is_nug, vario_samp, gamma_max, dist_max) { if (is_nug) { # w/nugget mod_vario <- fit.variogram( object = vario_samp, model = vgm( # heuristic method psill = gamma_max * 0.85, model = mod_type, range = dist_max * 0.25, nugget = gamma_max * 0.15 ) ) } else { # no nugget mod_vario <- fit.variogram( object = vario_samp, model = vgm( psill = gamma_max * 0.85, model = mod_type, range = dist_max * 0.25, ) ) } # cleaning up model output nugget_val <- mod_vario$psill[mod_vario$model == "Nug"] model_res <- mod_vario[mod_vario$model != "Nug", ] tib_res <- tibble( mod_type = model_res$model, psill = model_res$psill, range = model_res$range, nugget = ifelse(length(nugget_val) == 0, NA, nugget_val), sse = attr(mod_vario, "SSErr"), model = list(mod_vario) ) return (tib_res) } # input for the mod fitting loop # theoretical model types and w or w/o nugget # empircal variogram plus data for starting values # used in heuristic for starting values gamma_max <- max(vario_samp$gamma) dist_max <- max(vario_samp$dist) df_mods_nug <- expand.grid( mod_type = c("Exp", "Sph", "Mat"), is_nug = c(TRUE, FALSE), stringsAsFactors = FALSE, KEEP.OUT.ATTRS = FALSE ) |> as_tibble() |> mutate( vario_samp = list(vario_samp), gamma_max = gamma_max, dist_max = dist_max, ) tib_vario_mods <- purrr::pmap( df_mods_nug, fit_vario_mod ) |> purrr::list_rbind() tib_vario_mods #> # A tibble: 6 × 6 #> mod_type psill range nugget sse model #> <fct> <dbl> <dbl> <dbl> <dbl> <list> #> 1 Exp 0.192 92.4 0.0191 0.00210 <vrgrmMdl [2 × 9]> #> 2 Sph 0.160 216. 0.0381 0.00247 <vrgrmMdl [2 × 9]> #> 3 Mat 0.192 92.4 0.0191 0.00210 <vrgrmMdl [2 × 9]> #> 4 Exp 0.196 67.5 NA 0.00230 <vrgrmMdl [1 × 9]> #> 5 Sph 0.179 127. NA 0.00393 <vrgrmMdl [1 × 9]> #> 6 Mat 0.196 67.5 NA 0.00230 <vrgrmMdl [1 × 9]>- No warnings from any of the model fittings, so the starting values from the heuristic method seem to work fine in this case.

- Each model fitted a nugget when offered the opportunity

- The Exponential and Matern (both w/nugget) delivered the best performances with SSEs of 0.00210.

Visualize

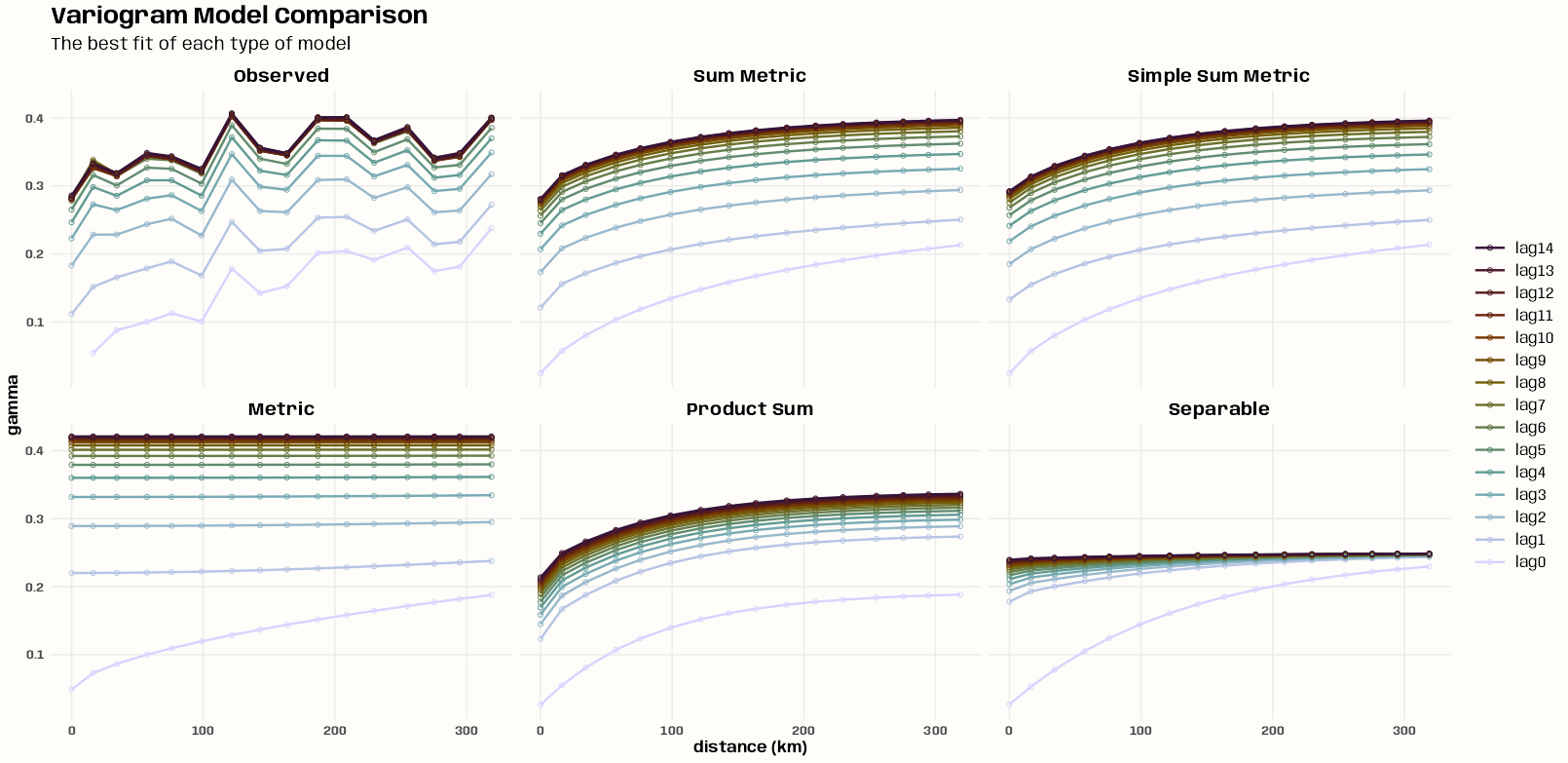

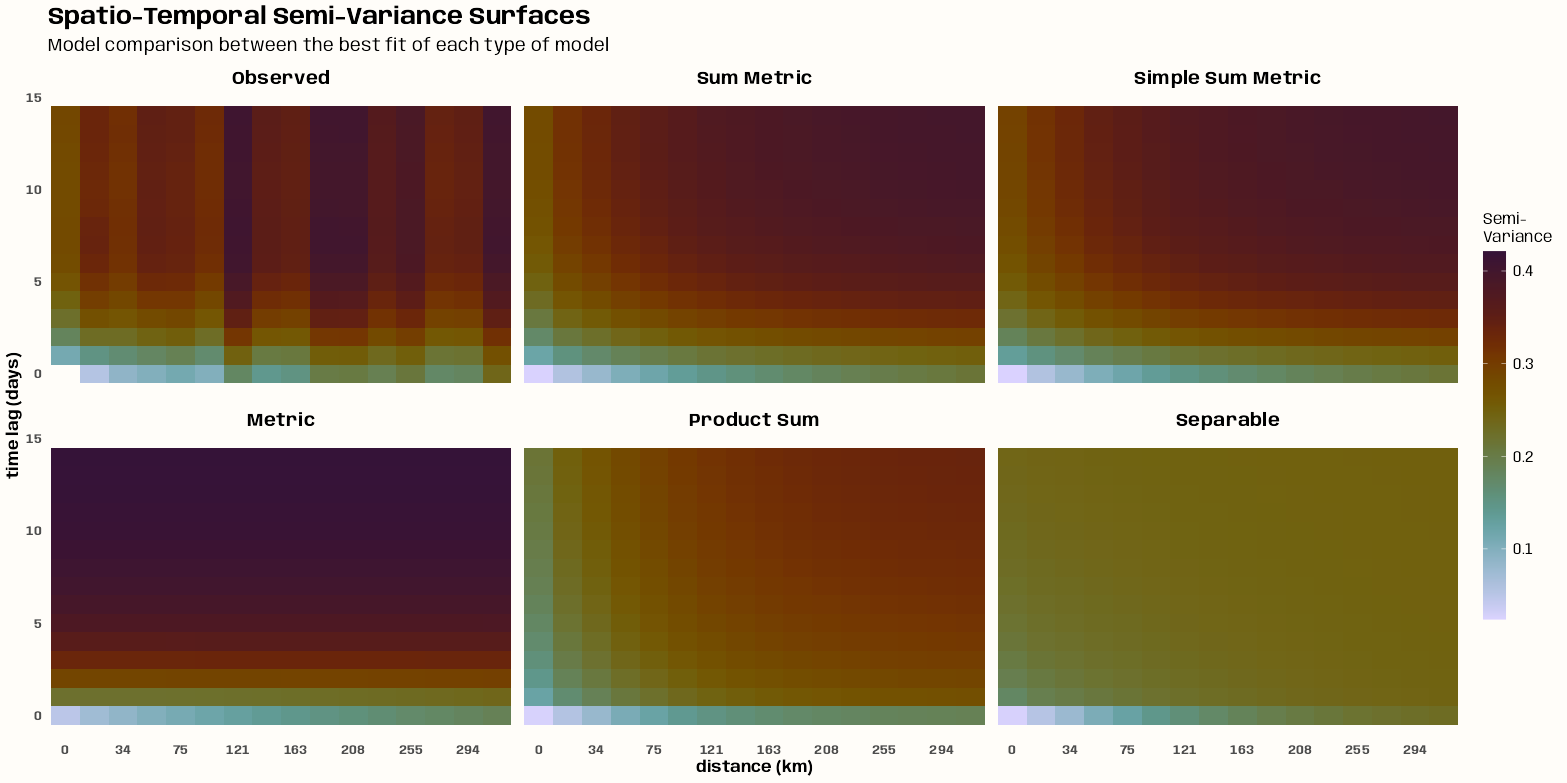

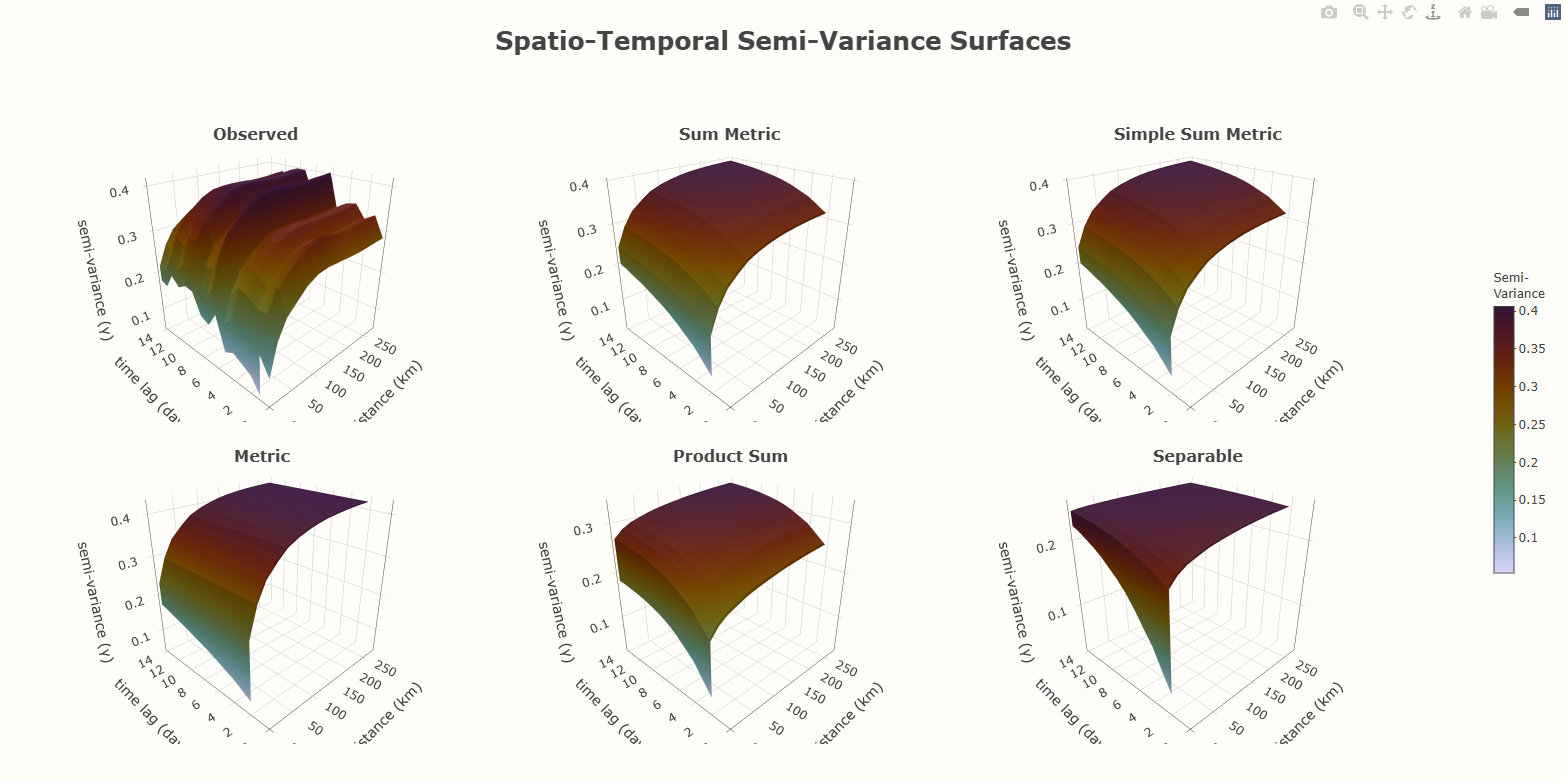

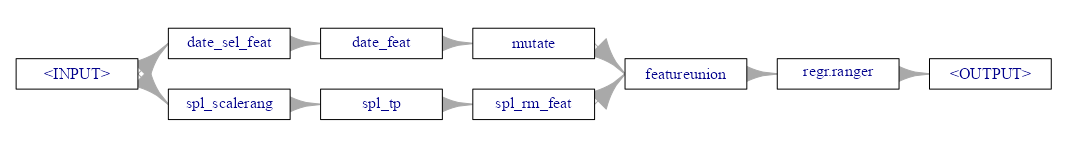

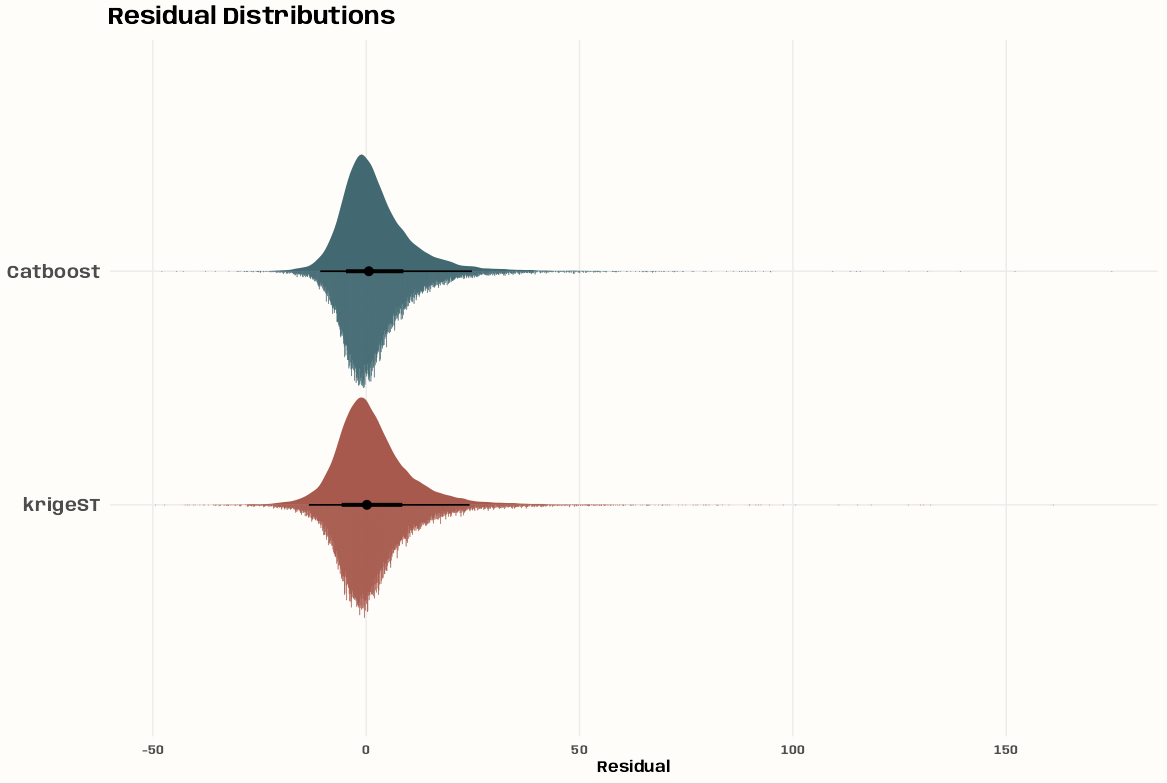

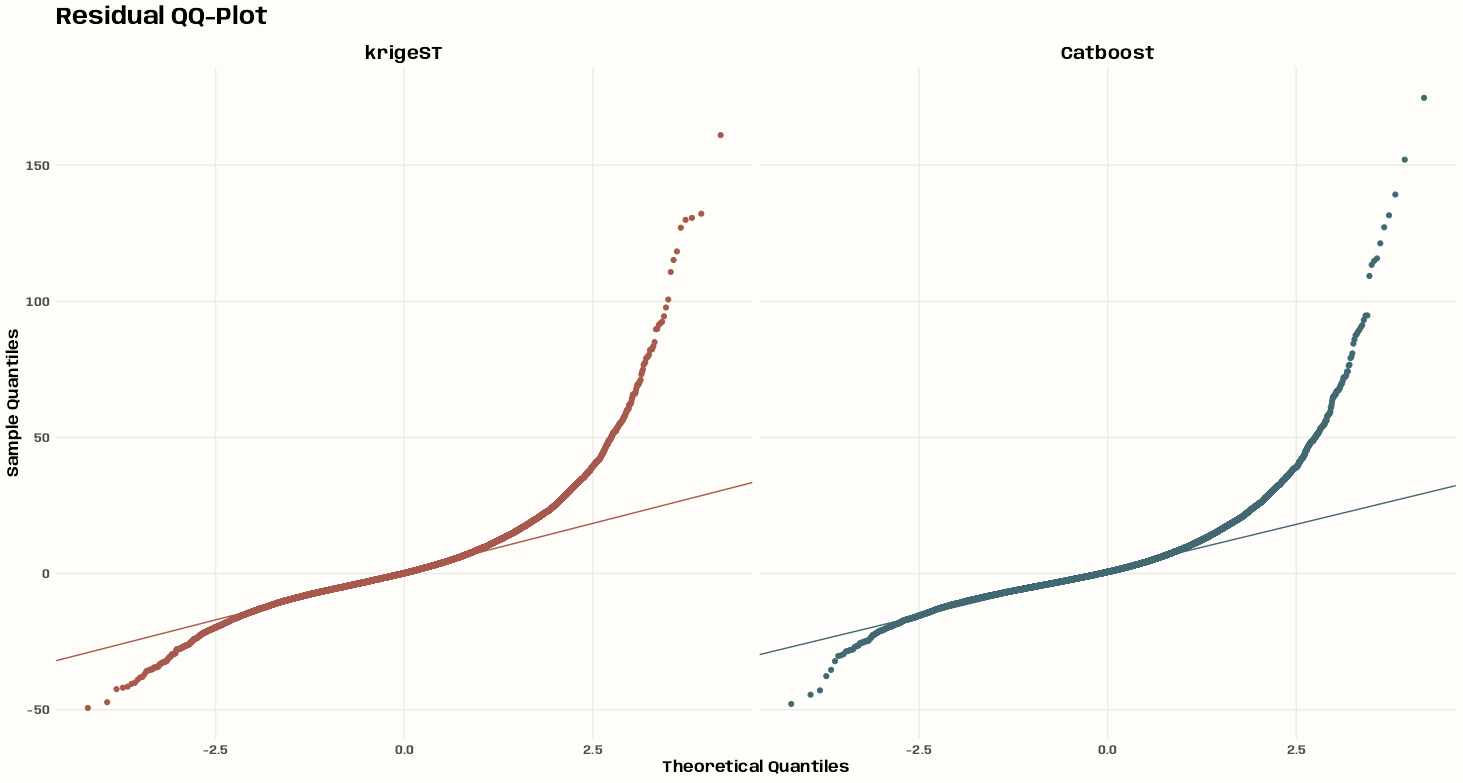

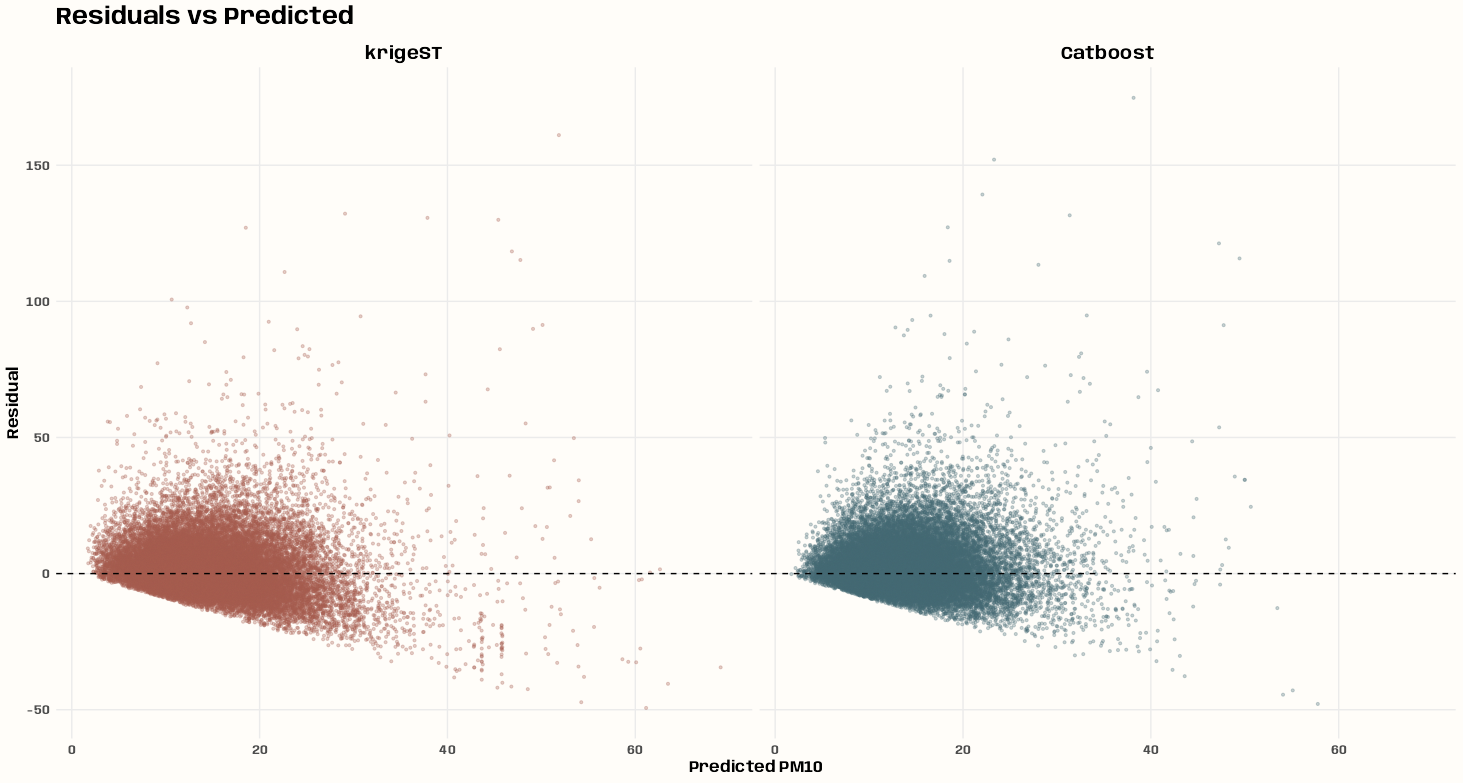

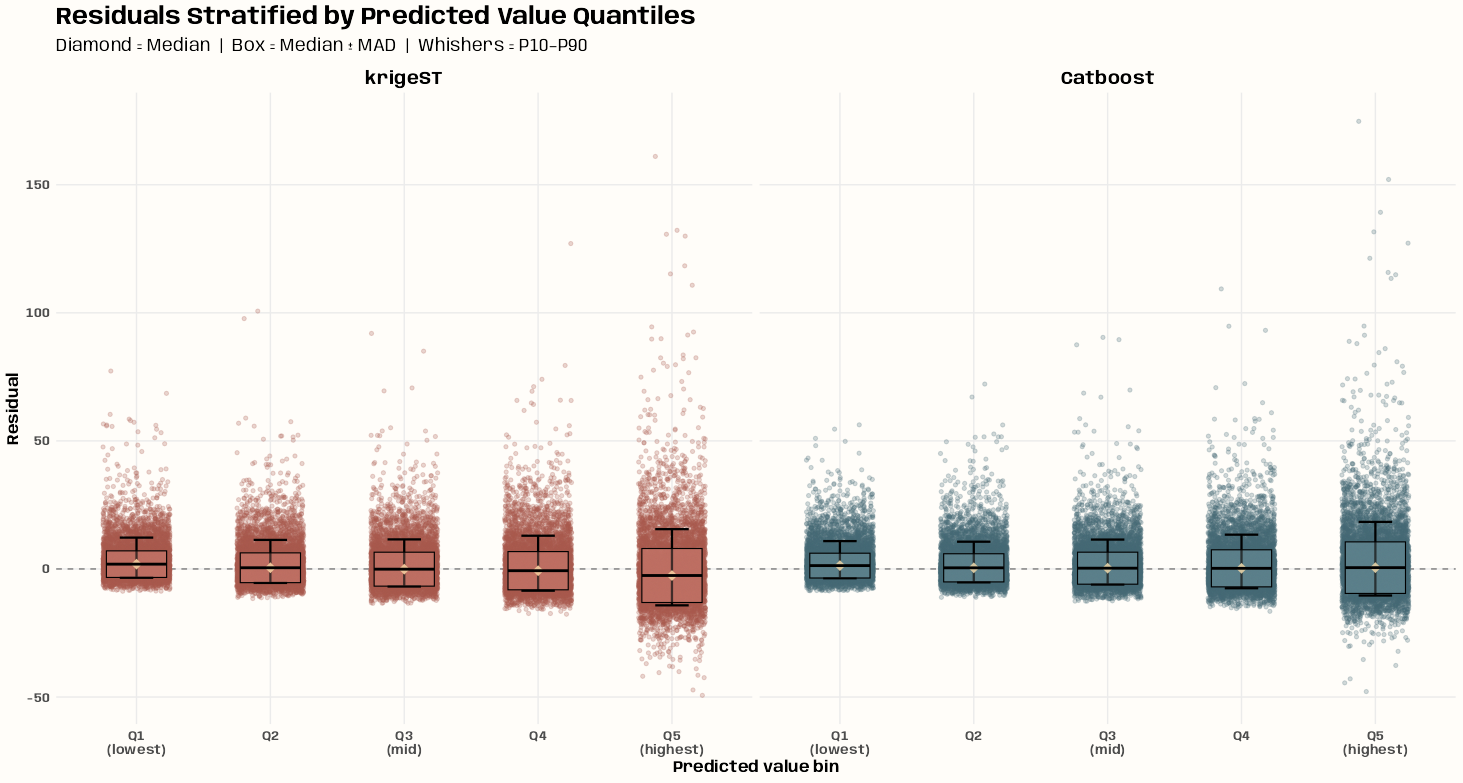

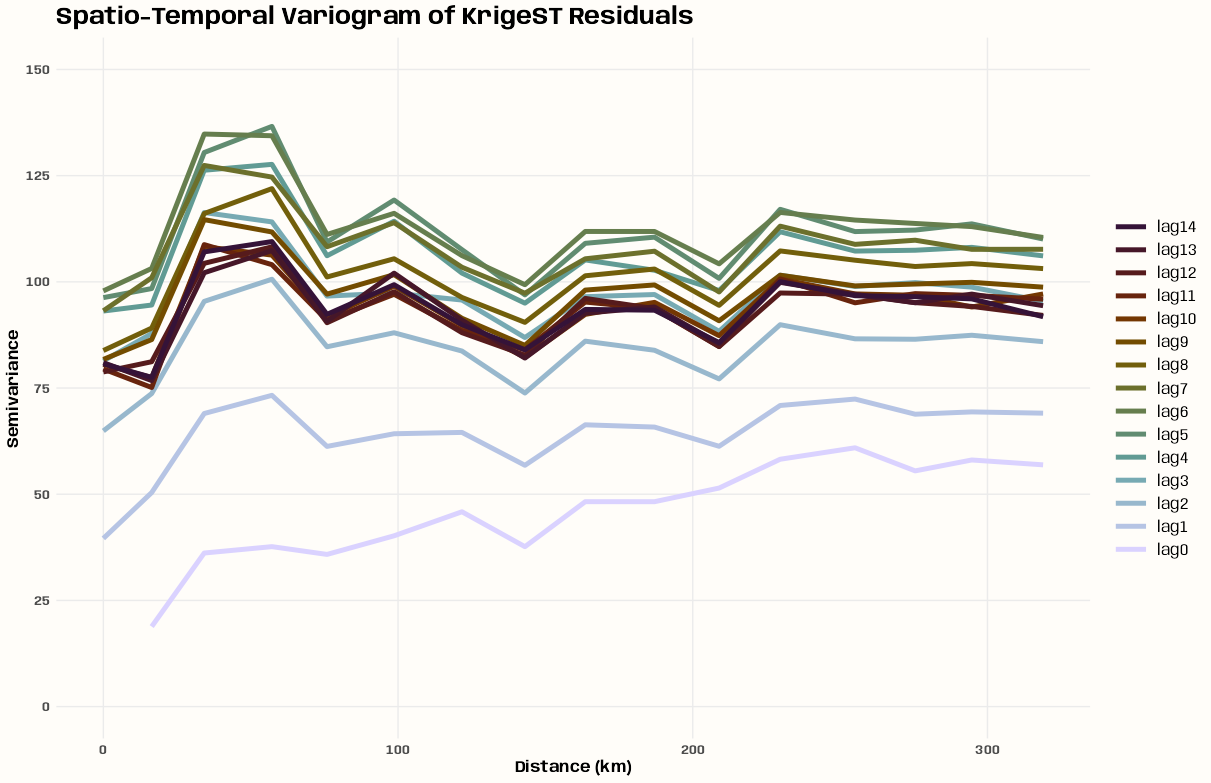

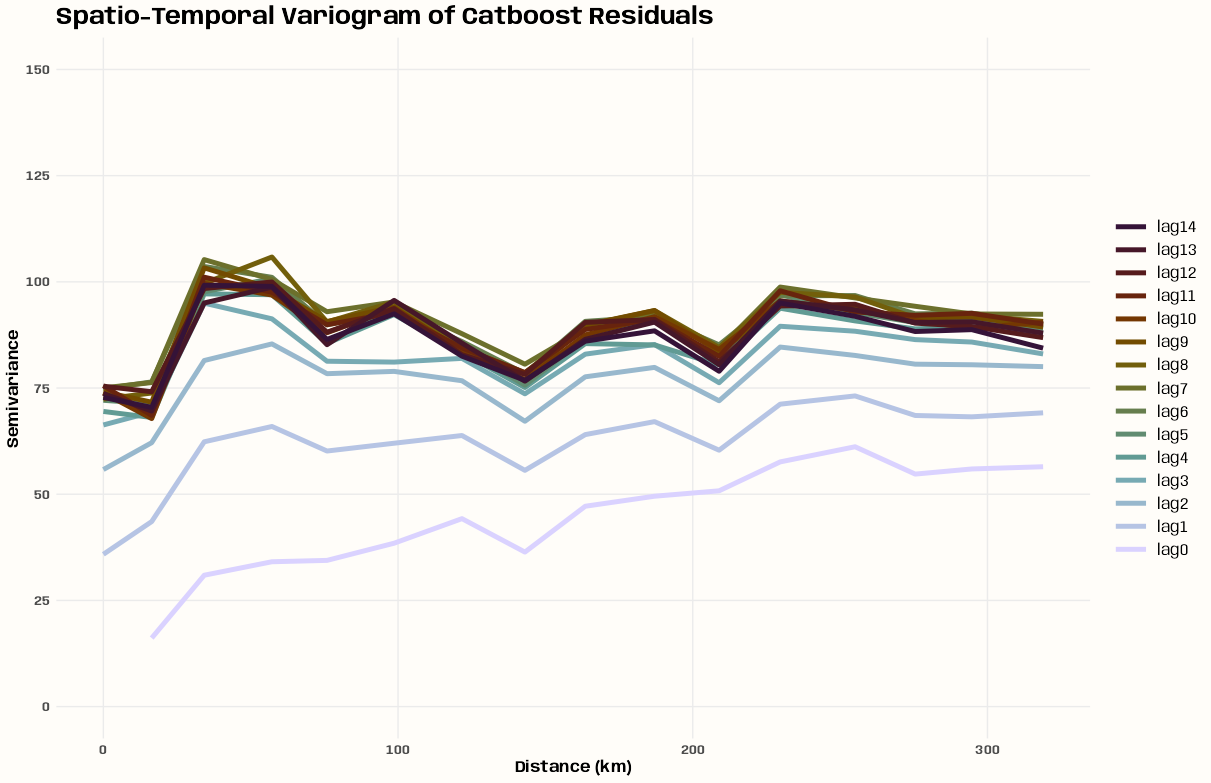

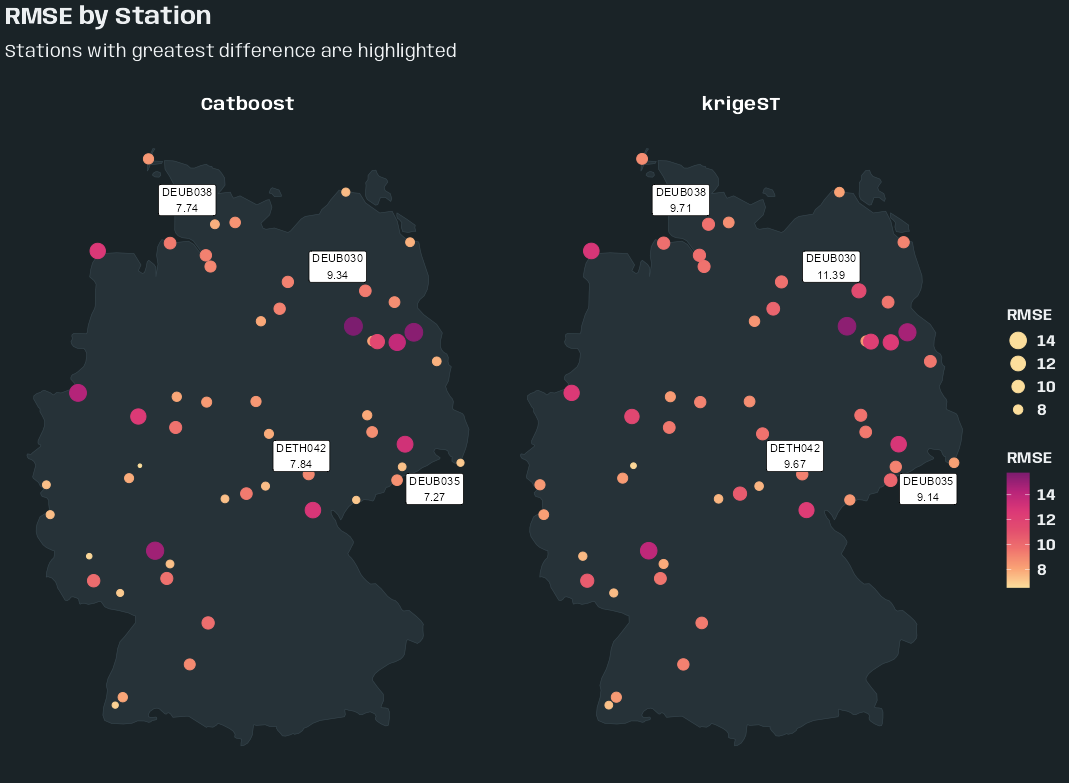

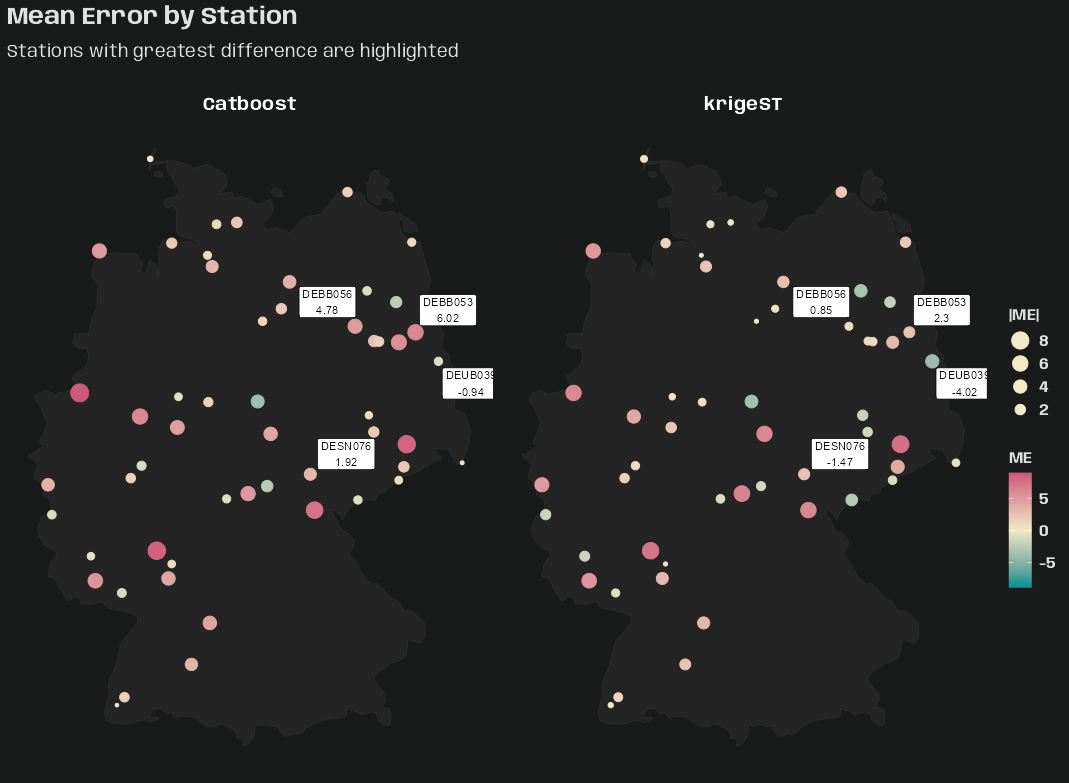

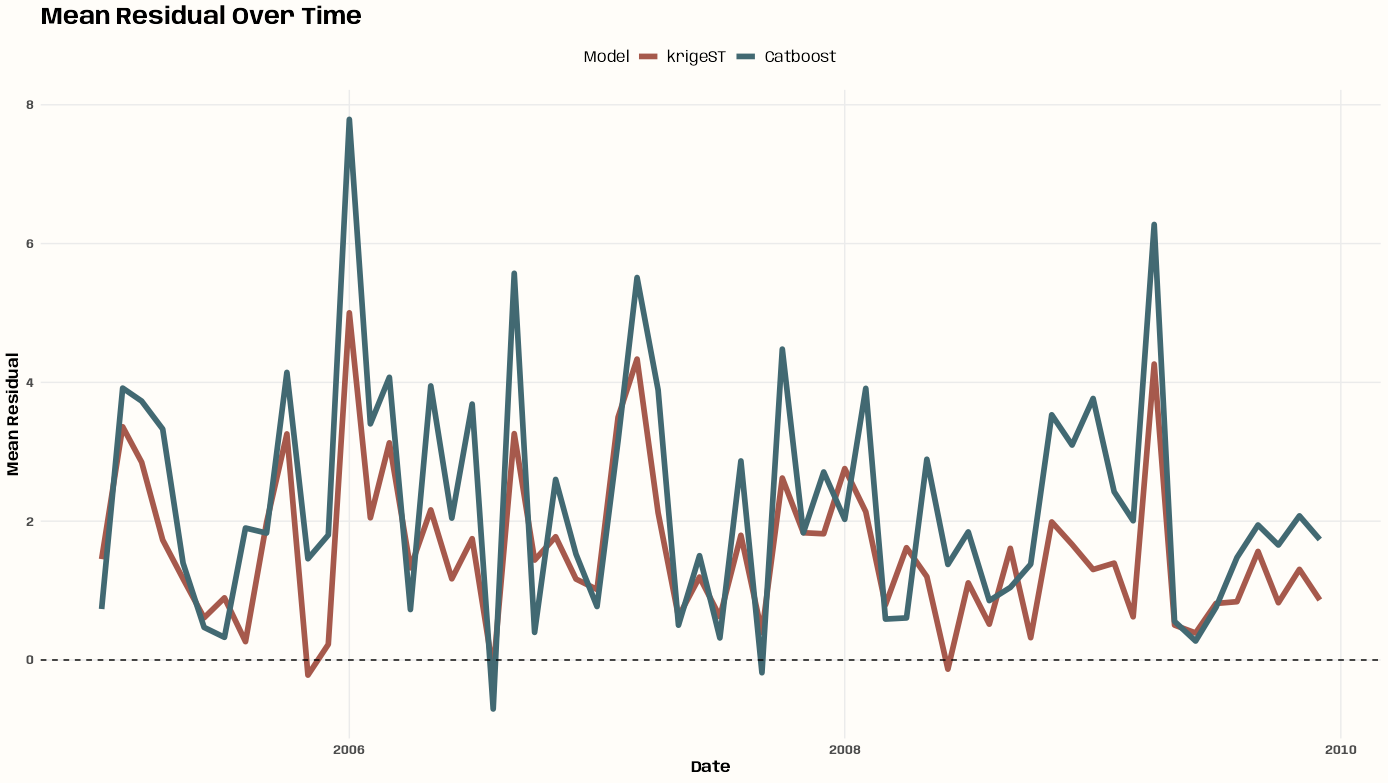

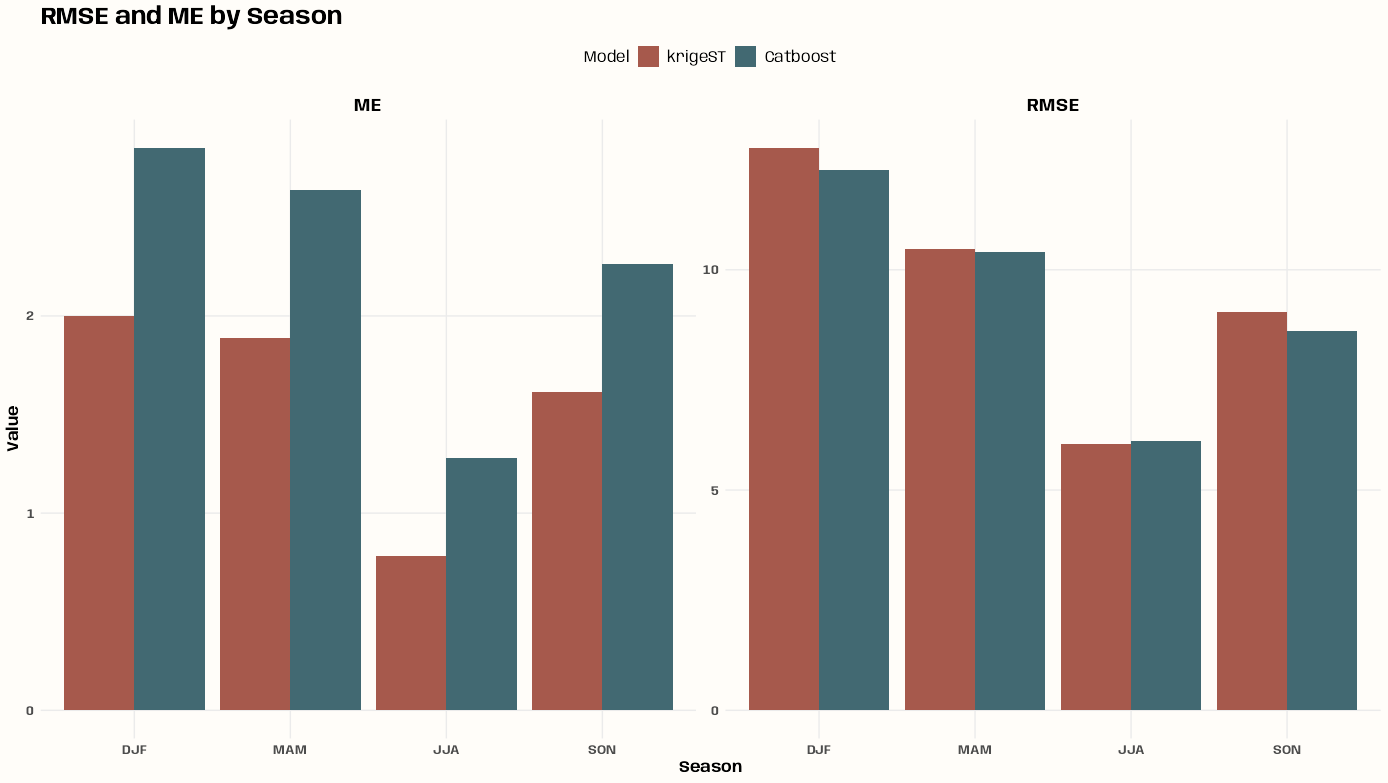

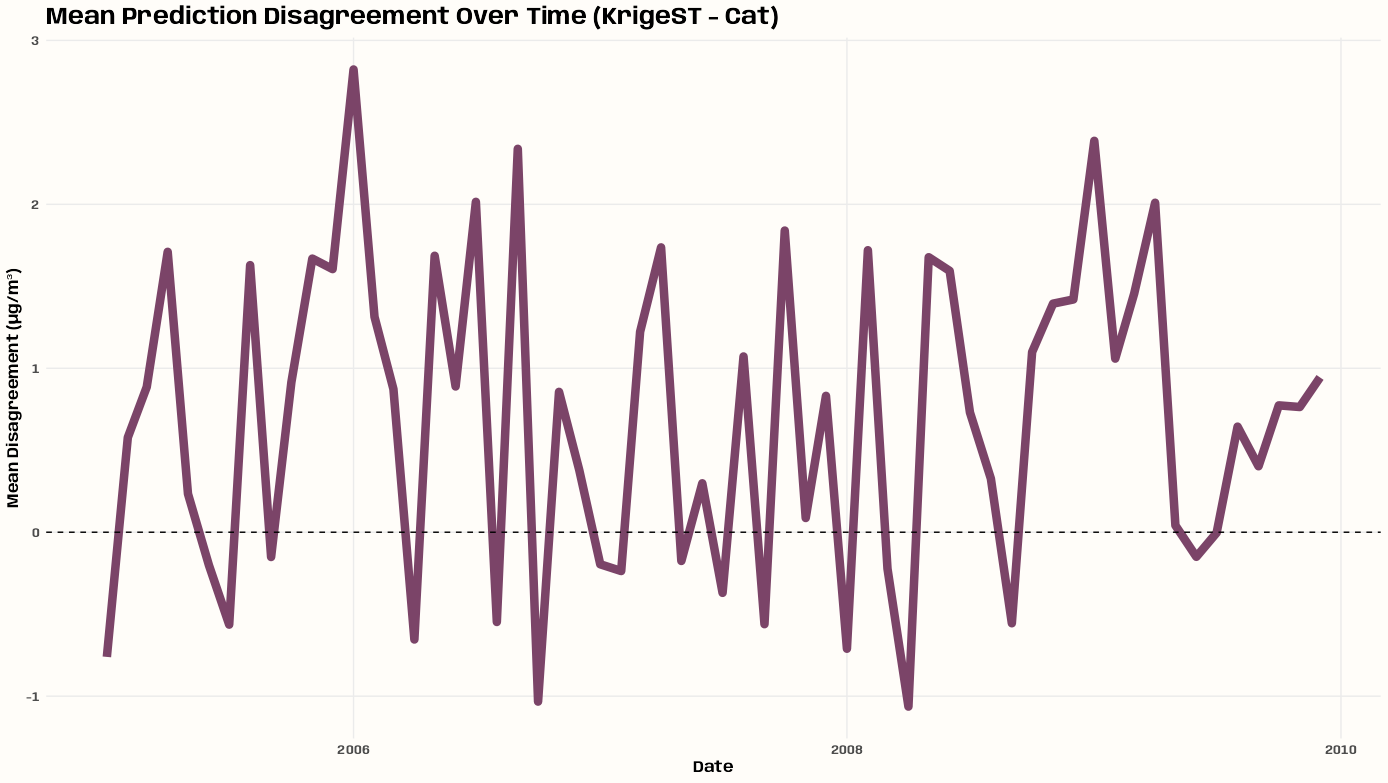

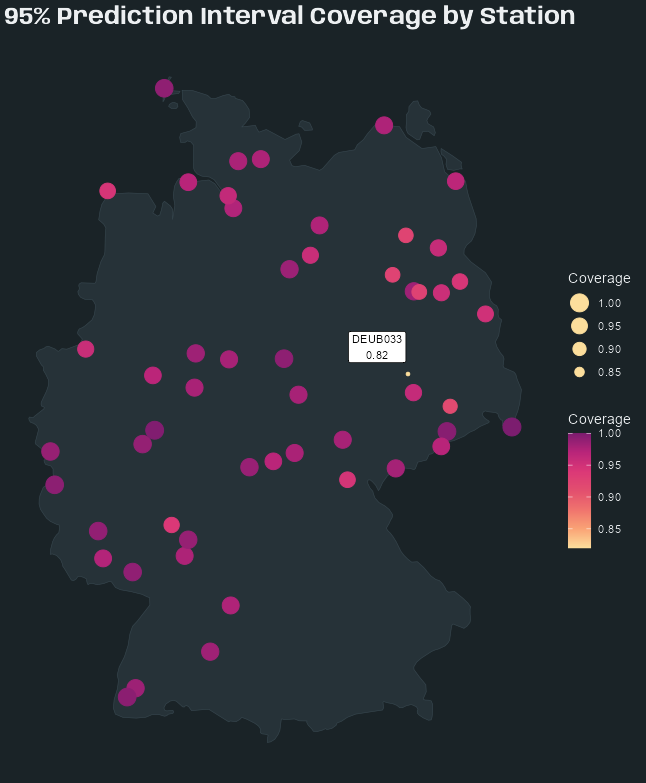

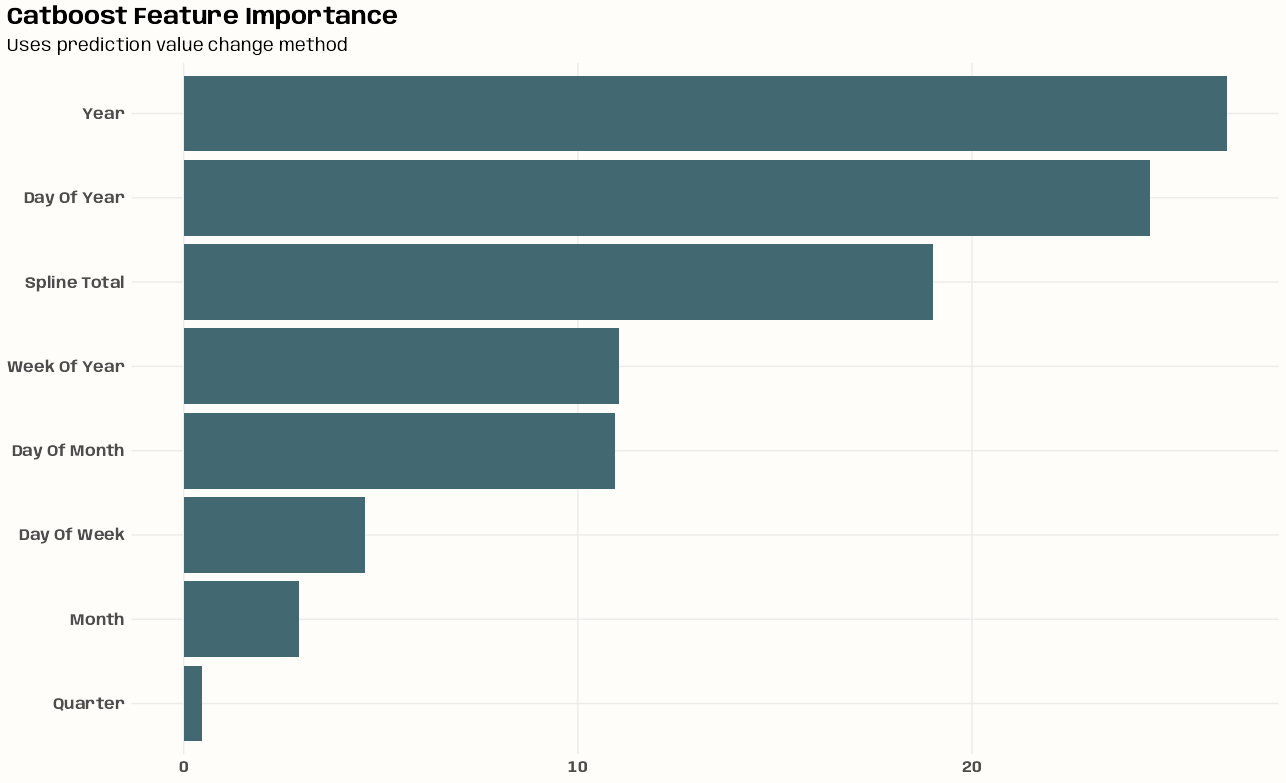

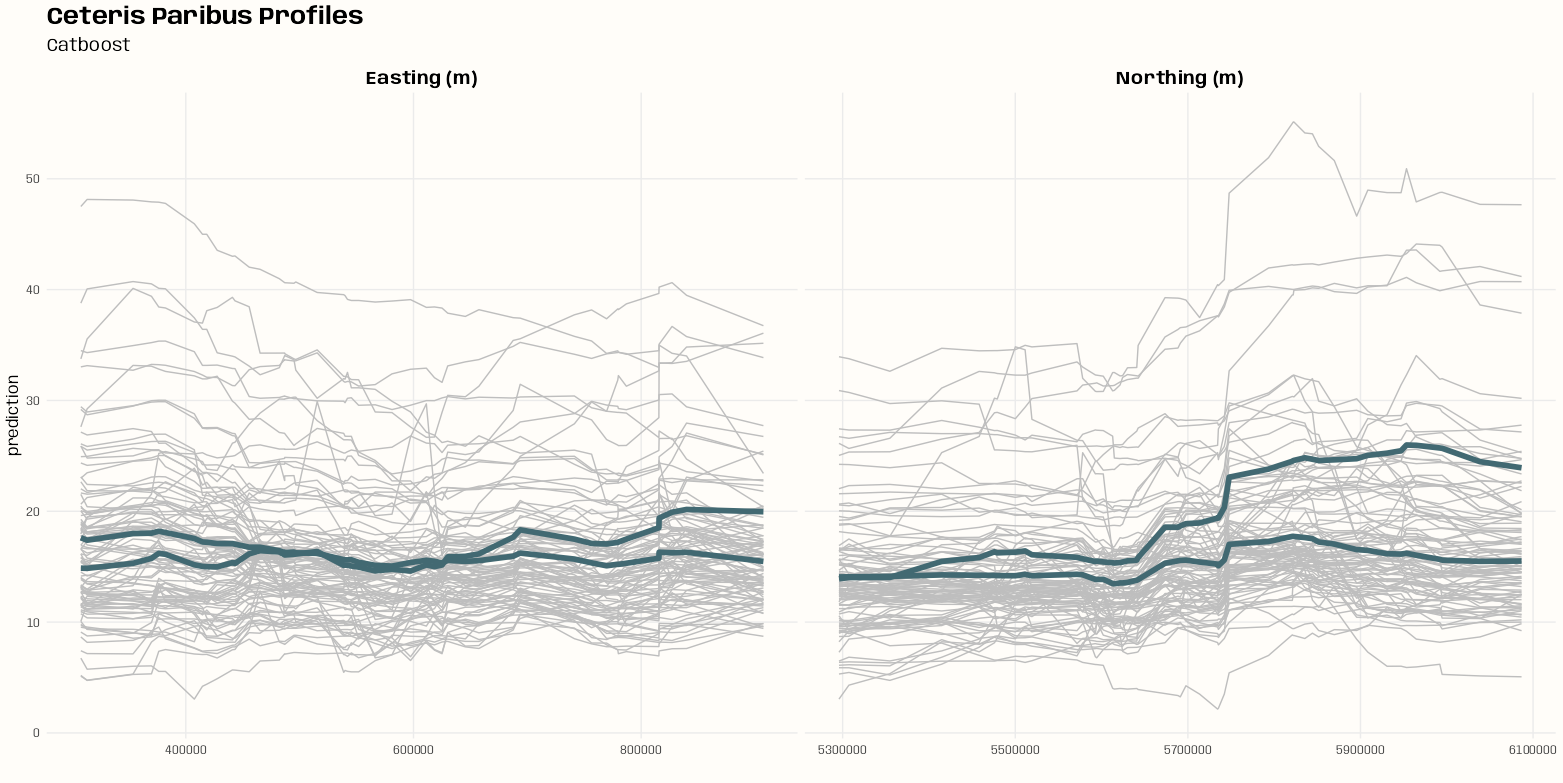

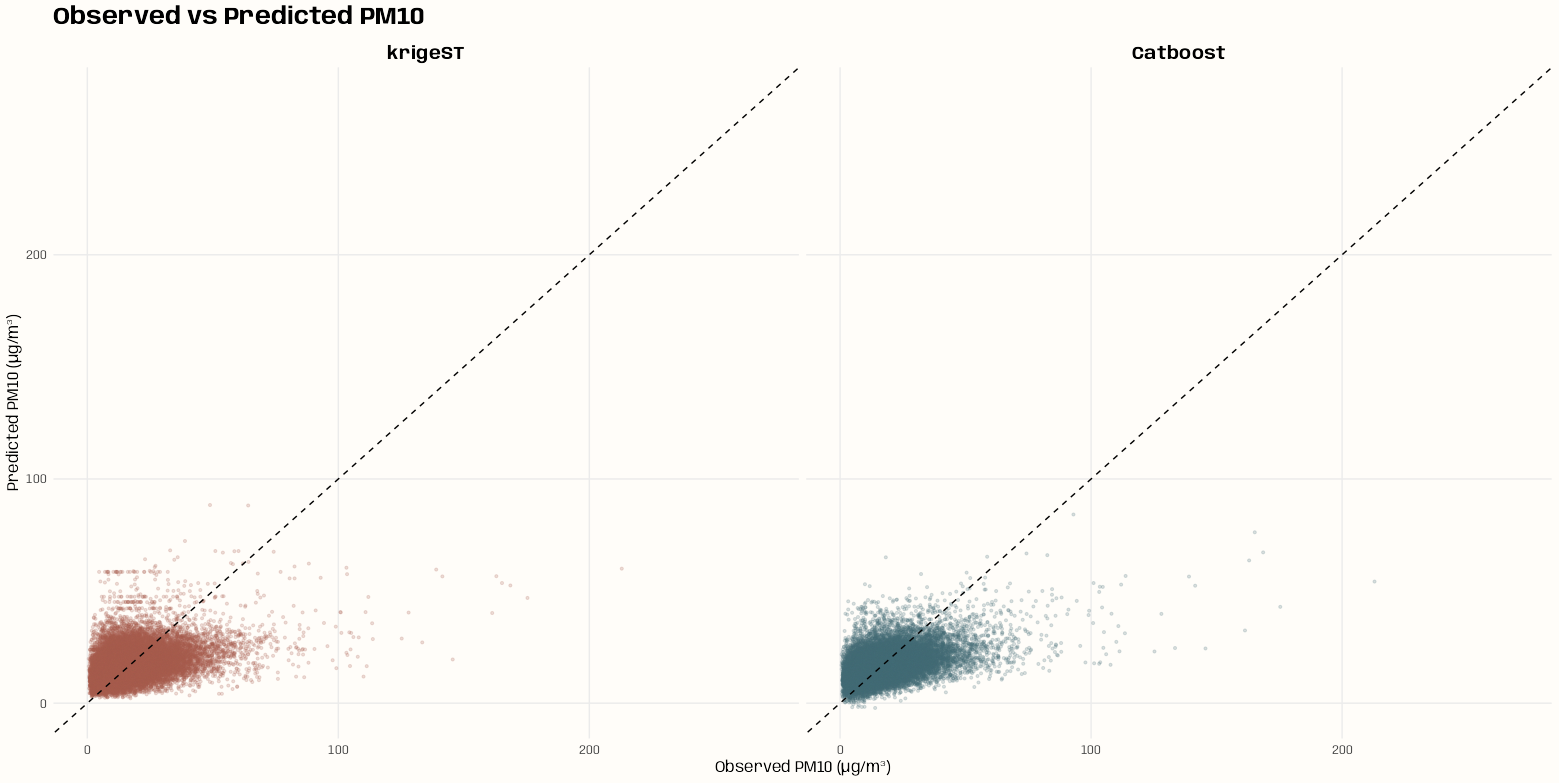

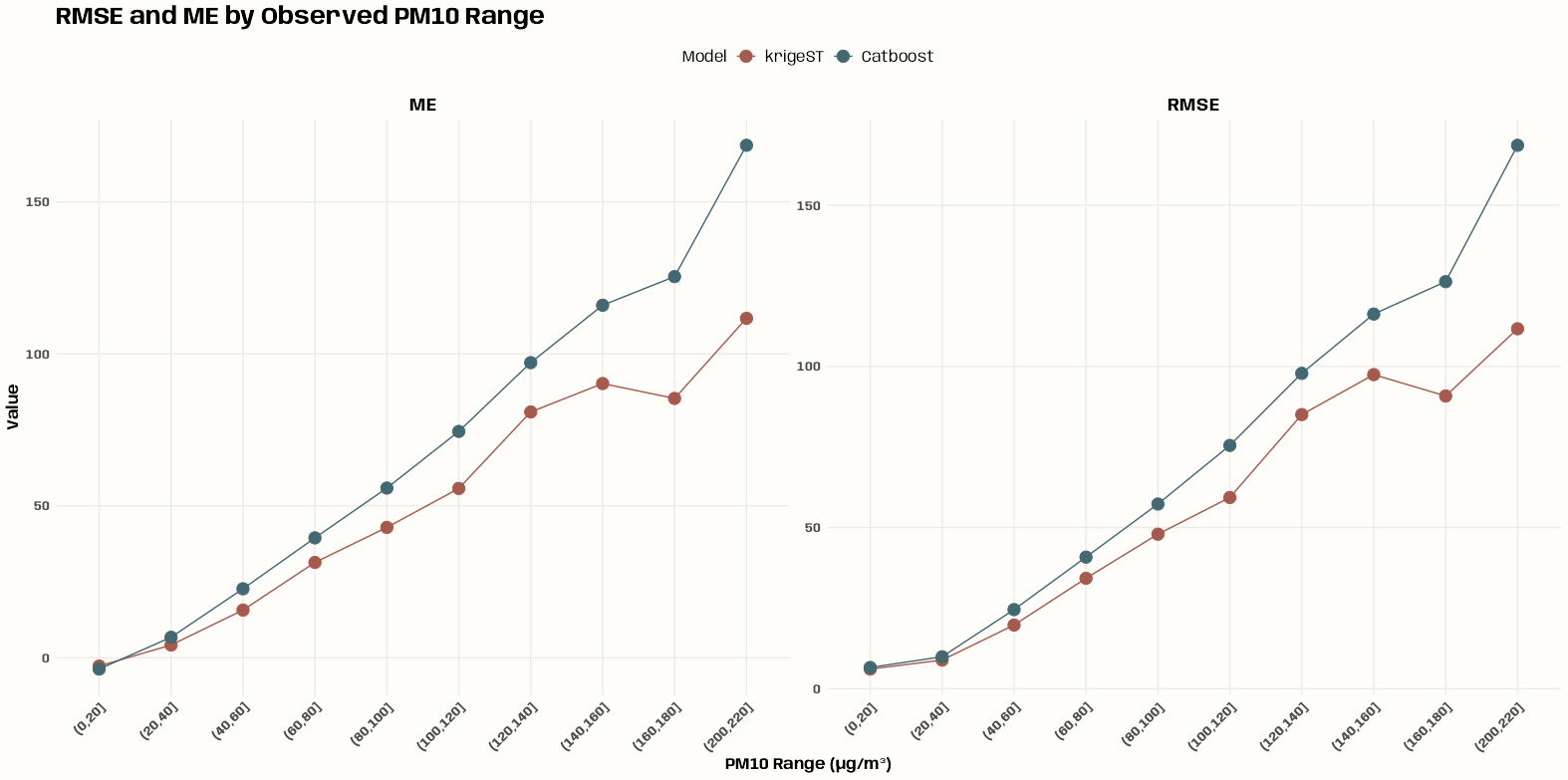

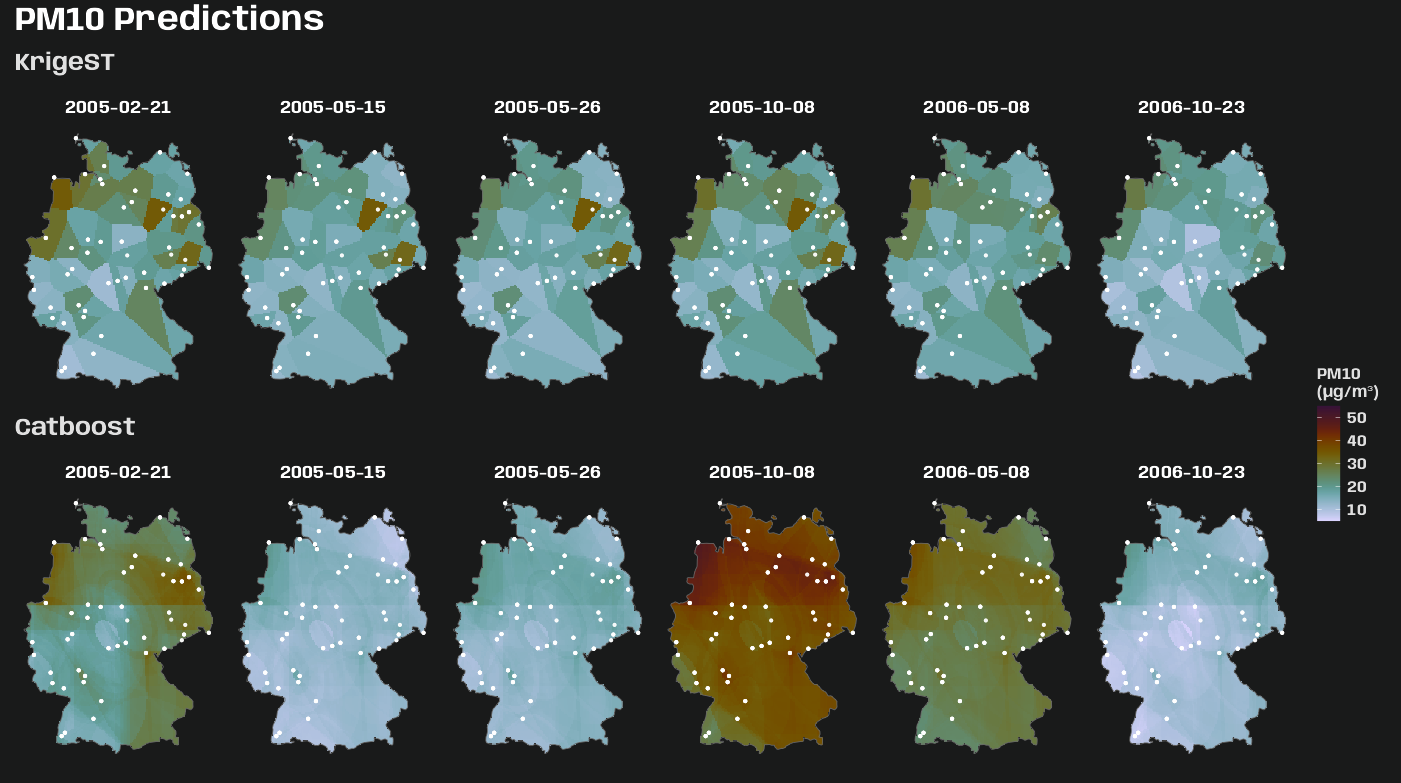

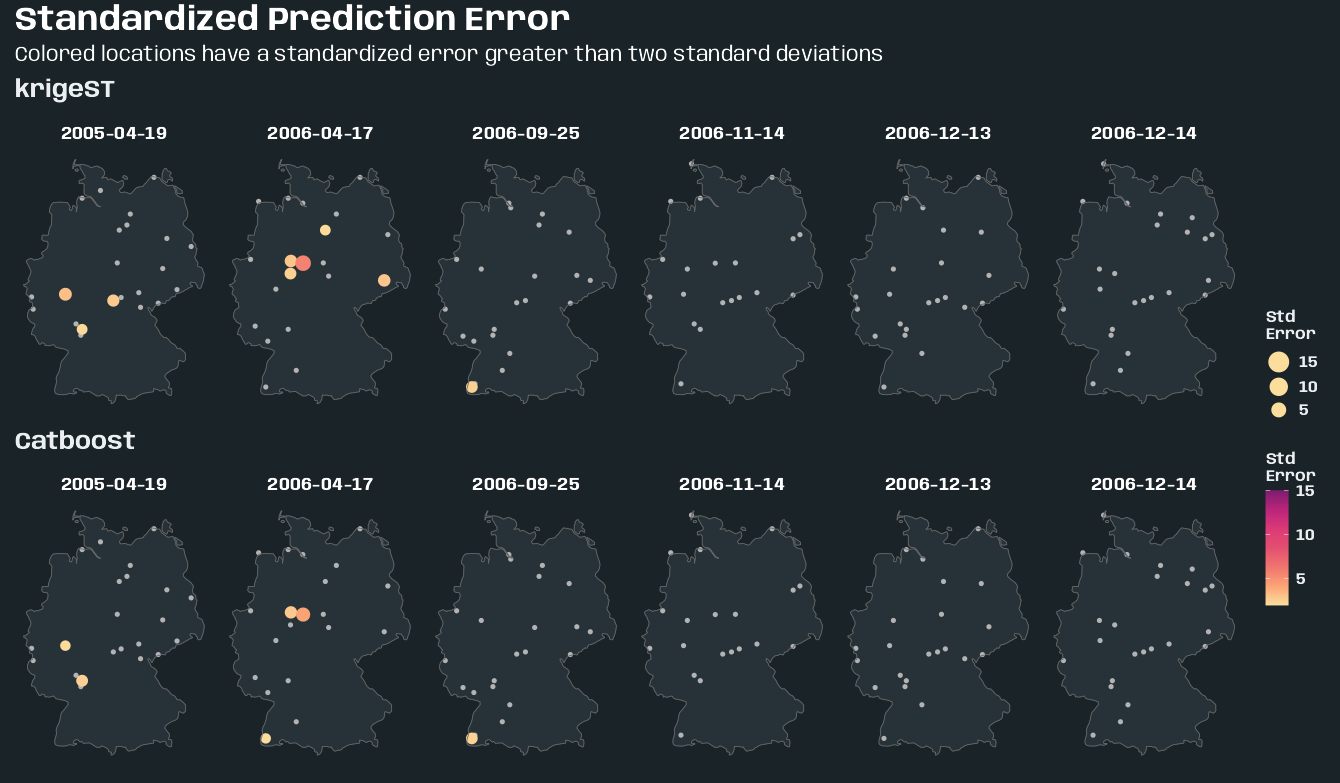

Code